Is international cooperation or technological development the answer to an apocalyptic threat?

Christopher Nolan’s film Oppenheimer is about the great military contest of the Second World War, but only in the background. It’s really about a clash of visions for a postwar world defined by the physicist J. Robert Oppenheimer’s work at Los Alamos and beyond. The great power unleashed by the bombs at Hiroshima and Nagasaki could be dwarfed by what knowledge of nuclear physics could produce in the coming years, risking a war more horrifying than the one that had just concluded.

Oppenheimer, and many of his fellow atomic scientists, would spend much of the postwar period arguing for international cooperation, scientific openness, and nuclear restriction. But there was another cadre of scientists, exemplified by a former colleague turned rival, Edward Teller, that sought to answer the threat of nuclear annihilation with new technology — including even bigger bombs.

As the urgency of the nuclear question declined with the end of the Cold War, the scientific community took up a new threat to global civilization: climate change. While the conflict mapped out in Oppenheimer was over nuclear weapons, the clash of visions, which ended up burying Oppenheimer and elevating Teller, also maps onto the great debate over global warming: Should we reach international agreements to cooperatively reduce carbon emissions, or should we throw our great resources, and specifically America’s, into a headlong rush of technological development? Should we massively overhaul our energy system or make the sun a little less bright?

Oppenheimer’s dream of international cooperation to prevent a nuclear arms race was born even before the Manhattan Project culminated with the Trinity test. Oppenheimer and Danish physicist Niels Bohr “believed that an agreement between the wartime allies based upon the sharing of information, including the existence of the Manhattan Project, could prevent the surfacing of a nuclear-armed world,” writes Marco Borghi in a Wilson Institute working paper.

Oppenheimer even suggested that the Soviets be informed of the Manhattan Project’s efforts and, according to Martin Sherwin and Kai Bird’s American Prometheus, had “assumed that such forthright discussions were taking place at that very moment” at the conference in Potsdam. Instead, Oppenheimer “was later appalled to learn” that Harry Truman had only vaguely mentioned the bomb to Joseph Stalin, scotching the first opportunity for international nuclear cooperation.

Oppenheimer continued to take up the cause of international cooperation, working as the lead advisor for Dean Acheson and David Lilienthal on their 1946 nuclear control proposal. The proposal was never accepted by the United Nations, and in particular by the Soviet Union, after Truman’s appointed U.N. representative, Bernard Baruch, amended it to be more favorable to the United States.

In view of the next 50 years of nuclear history — further proliferation, the development of thermonuclear weapons that could be mounted on missiles that were likely impossible to shoot down — the proposals Oppenheimer developed seem utopian: The U.N. would "bring under its complete control world supplies of uranium and thorium," including all mining, and would control all nuclear reactors. This scheme would also make the construction of new weapons impossible, lest other nations build their own.

By the end of 1946, the Baruch proposal had died, along with any prospect of international control of nuclear power. All the while, the Soviets were working intensely to break America’s nuclear monopoly, aided by information ferried out of Los Alamos, and they would successfully test a weapon before the end of the decade.

With the failure of international arms control and the beginning of the arms race, Oppenheimer’s vision of a post-Trinity world would fall into shambles. For Teller, however, it was a great opportunity.

While Oppenheimer planned to stave off nuclear annihilation through international cooperation, Teller was trying to build a bigger deterrent.

Since the early stages of the Manhattan Project, Teller had been dreaming of a fusion weapon many times more powerful than the first atomic bombs, what was then called the “Super.” When the atomic bomb was completed, he would again push for the creation of a thermonuclear bomb, but the efforts stalled thanks to technical and theoretical issues with Teller’s proposed design.

Nolan captures Teller’s early comprehension of just how powerful nuclear weapons can be. In a scene that’s pulled straight from accounts of the Trinity blast, most of the scientists who view the test are either in bunkers wearing welding goggles or following instructions to lie down, facing away from the blast. Not so for Teller. He lathers sunscreen on his face, straps on a pair of dark goggles, and views the explosion straight on, even pursing his lips as the explosion lights up the desert night brighter than the sun.

And it was that power — the sun’s — that Teller wanted to harness in pursuit of his “Super,” where a bomb’s power would be derived from fusing together hydrogen atoms, creating helium — and a great deal of energy. It would even use a fission bomb to help ignite the process.

Oppenheimer and several scientific luminaries, including Manhattan Project scientists Enrico Fermi and Isidor Rabi, opposed the bomb. In their official 1949 report advising the Atomic Energy Commission, they stated that the hydrogen bomb was infeasible, strategically useless, and potentially a weapon of “genocide.”

But by 1950, thanks in part to Teller and the advocacy of Lewis Strauss, a financier turned government official and the approximate villain of Nolan’s film, Harry Truman would sign off on a hydrogen bomb project. The result was the 1952 “Ivy Mike” test, in which a bomb using a design from Teller and mathematician Stan Ulam vaporized the Pacific island of Elugelab with a blast about 700 times more powerful than the one that destroyed Hiroshima.

The success of the project re-ignited doubts around Oppenheimer’s well-known left-wing political associations in the years before the war and, thanks to scheming by Strauss, he was denied a renewed security clearance.

While several Manhattan Project scientists testified on his behalf, Teller did not, saying, “I thoroughly disagreed with him in numerous issues and his actions frankly appeared to me confused and complicated.”

It was the end of Oppenheimer’s public career. The New Deal Democrat had been eclipsed by Teller, who would become the scientific avatar of the Reagan Republicans.

For the next few decades, Teller would stay close to politicians, the military, and the media, exercising a great deal of influence over arms policy from the Lawrence Livermore National Laboratory, which he helped found, and his academic perch at the University of California.

He pooh-poohed the dangers of radiation, supported the building of more and bigger bombs that could be delivered by longer and longer range missiles, and opposed prohibitions on testing. When Dwight Eisenhower was considering a negotiated nuclear test ban, Teller faced off against future Nobel laureate and Manhattan Project alumnus Hans Bethe over whether nuclear tests could be hidden from detection by conducting them underground in a massive hole; the eventual 1963 test ban treaty would exempt underground testing.

As the Cold War settled into a nuclear standoff with both the United States and the Soviet Union possessing enough missiles and nuclear weapons to wipe out the other, Teller didn’t look to treaties, limitations, and cooperation to solve the problem of nuclear brinksmanship, but instead to space: He wanted to neutralize the threat of a Soviet first strike using x-ray lasers from space powered by nuclear explosions (he was again opposed by Bethe and the x-ray lasers never came to fruition).

He also notoriously dreamed up Project Plowshare, the civilian nuclear project which would get close to nuking out a new harbor in Northern Alaska and actually did attempt to extract gas in New Mexico and Colorado using nuclear explosions.

Yet, in perhaps the strangest turn of all, Teller also became something of a key figure in the history of climate change research, both in his relatively early awareness of the problem and the conceptual gigantism he brought to proposing to solve it.

While publicly skeptical of climate change later in his life, Teller had started thinking about the problem decades before James Hansen’s seminal 1988 congressional testimony.

The researcher and climate litigator Benjamin Franta made the startling archival discovery that Teller had given a speech at an oil industry event in 1959 where he warned “energy resources will run short as we use more and more of the fossil fuels,” and, after explaining the greenhouse effect, he said that “it has been calculated that a temperature rise corresponding to a 10 percent increase in carbon dioxide will be sufficient to melt the icecap and submerge New York … I think that this chemical contamination is more serious than most people tend to believe.”

Teller was also engaged with issues around energy and other “peaceful” uses of nuclear power. In response to concerns about the dangers of nuclear reactors, he began advocating in the 1960s for putting them underground, and by the early 1990s he proposed running those underground reactors automatically to avoid the human error he blamed for the disasters at Chernobyl and Three Mile Island.

While Teller was always happy to find some collaborators to almost throw off an ingenious-if-extreme solution to a problem, there is a strain of “Tellerism,” both institutionally and conceptually, that persists to this day in climate science and energy policy.

Nuclear science and climate science had long been intertwined, Stanford historian Paul Edwards writes, including that the “earliest global climate models relied on numerical methods very similar to those developed by nuclear weapons designers for solving the fluid dynamics equations needed to analyze shock waves produced in nuclear explosions.”

Where Teller comes in is in the role that Lawrence Livermore played in both its energy research and climate modeling. “With the Cold War over and research on nuclear weapons in decline, the national laboratories faced a quandary: What would justify their continued existence?” Edwards writes. The answer in many cases would be climate change, due to these labs’ ample collection of computing power, “expertise in numerical modeling of fluid dynamics, and their skills in managing very large data sets.”

One of those labs was Livermore, the institution founded by Teller, a leading center of climate and energy modeling and research since the late 1980s. “[Teller] was very enthusiastic about weather control,” early climate modeler Cecil “Chuck” Leith told Edwards in an oral history.

The Department of Energy writ large, which inherited much of the responsibilities of the Atomic Energy Commission, is now one of the lead agencies on climate change policy and energy research.

Which brings us to fusion.

It was Teller’s Lawrence Livermore National Laboratory that earlier this year successfully got more power out of a controlled fusion reaction than it put in — and it was Energy Secretary Jennifer Granholm who announced it, calling it the “holy grail” of clean energy development.

Teller’s journey with fusion is familiar to its history: early cautious optimism followed by a realization that it would likely not be achieved soon. As early as 1958, he said in a speech that he had been discussing “controlled fusion” at Los Alamos and that “thermonuclear energy generation is possible,” although he admitted that “the problem is not quite easy” and by 1987 had given up on seeing it realized during his lifetime.

Still, what controlled fusion we do have at Livermore’s National Ignition Facility owes something to Teller and the technology he pioneered in the hydrogen bomb, according to physicist NJ Fisch.

While fusion is one infamous technological fix for the problem of clean and cheap energy production, Teller and the Livermore cadres were also a major influence on the development of solar geoengineering, the idea that global warming could be averted not by reducing the emissions of greenhouse gas into the atmosphere, but by making the sun less intense.

In a mildly trolling column for the Wall Street Journal in January 1998, Teller professed agnosticism on climate change (despite giving that speech to oil executives three decades prior) but proposed an alternative policy that would be “far less burdensome than even a system of market-allocated emissions permits”: solar geoengineering with “fine particles.”

The op-ed placed in the conservative pages of the Wall Street Journal was almost certainly an effort to oppose the recently signed Kyoto Protocol, but the ideas have persisted among thinkers and scientists whose engagement with environmental issues went far beyond their own opinion about Al Gore and by extension the environmental movement as a whole (Teller’s feelings about both were negative).

But his proposal would be familiar to the climate debates of today: particle emissions that would scatter sunlight and thus lower atmospheric temperatures. If climate change had to be addressed, Teller argued, “let us play to our uniquely American strengths in innovation and technology to offset any global warming by the least costly means possible.”

A paper he wrote with two colleagues that was an early call for spraying sulfates in the stratosphere also proposed “deploying electrically-conducting sheeting, either in the stratosphere or in low Earth orbit.” These were “literally diaphanous shattering screens,” that could scatter enough sunlight in order to reduce global warming — one calculation Teller made concludes that 46 million square miles, or about 1 percent of the surface area of the Earth, of these screens would be necessary.

The climate scientist and Livermore alumnus Ken Caldeira has attributed his own initial interest in solar geoengineering to Lowell Wood, a Livermore researcher and Teller protégé. While often seen as a centrist or even right-wing idea, a way to avoid more restrictive policies on carbon emissions, solar geoengineering has sparked some interest on the left, including in socialist science fiction author Kim Stanley Robinson’s The Ministry for the Future, which envisions India unilaterally pumping sulfates into the atmosphere in response to a devastating heat wave.

The White House even quietly released a congressionally-mandated report on solar geoengineering earlier this spring, outlining avenues for further research.

While the more than 30 years since the creation of the Intergovernmental Panel on Climate Change and the beginnings of the Kyoto Protocol have emphasized international cooperation on both science and policymaking through agreed-upon goals in emissions reductions, the technological temptation is always present.

And here we can perhaps see the split between the moralized scientists, with their pleas for addressing the problems of the arms race through scientific openness and international cooperation, and the hawkish technicians, who wanted to press the United States’ technical advantage in order to win the nuclear standoff and ultimately the Cold War through deterrence.

With the IPCC and the United Nations Climate Conference, through which emerged the Kyoto Protocol and the Paris Agreement, we see a version of what the postwar scientists wanted applied to the problem of climate change. Nations come together and agree on targets for controlling something that may benefit any one of them but risks global calamity. The process is informed by scientists working with substantial resources across national borders who play a major role in formulating and verifying the policy mechanisms used to achieve these goals.

But for almost as long as climate change has been an issue of international concern, the Tellerian path has been tempting. While Teller’s dreams of massive sun-scattering sheets, nuclear earth engineering, and automated underground reactors are unlikely to be realized soon, if at all, you can be sure there are scientists and engineers looking straight into the light. And they may one day drag us into it, whether we want to or not.

Editor’s note: An earlier version of this article misstated the name of a climate modeler. It’s been corrected. We regret the error.

The One Big Beautiful Bill Act is one signature away from becoming law and drastically changing the economics of renewables development in the U.S. That doesn’t mean decarbonization is over, experts told Heatmap, but it certainly doesn’t help.

What do we do now?

That’s the question people across the climate change and clean energy communities are asking themselves now that Congress has passed the One Big Beautiful Bill Act, which would slash most of the tax credits and subsidies for clean energy established under the Inflation Reduction Act.

Preliminary data from Princeton University’s REPEAT Project (led by Heatmap contributor Jesse Jenkins) forecasts that the bill will have a dramatic effect on the deployment of clean energy in the U.S., including reducing new solar and wind capacity additions by roughly 40 gigawatts over the next five years, and by about 300 gigawatts over the next 10. That would be enough to power 150 of Meta’s largest planned data centers by 2035.

But clean energy development will hardly grind to a halt. While much of the bill’s implementation is in question, the bill as written allows for several more years of tax credit eligibility for wind and solar projects and another year to qualify for them by starting construction. Nuclear, geothermal, and batteries can claim tax credits into the 2030s.

Shares in NextEra, which has one of the largest clean energy development businesses, have risen slightly this year and are down just 6% since the 2024 election. Shares in First Solar, the American solar manufacturer, are up substantially Thursday from a day prior and are about flat for the year, which may be a sign of investors’ belief that buyer demand for solar panels will persist — or optimism that the OBBBA’s punishing foreign entity of concern requirements will drive developers into the company’s arms.

Partisan reversals are hardly new to climate policy. The first Trump administration gleefully pulled the rug from under the Obama administration’s power plant emissions rules, and the second has been thorough so far in its assault on Biden’s attempt to replace them, along with tailpipe emissions standards and mileage standards for vehicles, and of course, the IRA.

Even so, there are ways the U.S. can reduce the volatility for businesses that are caught in the undertow. “Over the past 10 to 20 years, climate advocates have focused very heavily on D.C. as the driver of climate action and, to a lesser extent, California as a back-stop,” Hannah Safford, who was director for transportation and resilience in the Biden White House and is now associate director of climate and environment at the Federation of American Scientists, told Heatmap. “Pursuing a top down approach — some of that has worked, a lot of it hasn’t.”

In today’s environment, especially, where recognition of the need for action on climate change is so politically one-sided, it “makes sense for subnational, non-regulatory forces and market forces to drive progress,” Safford said. As an example, she pointed to the fall in emissions from the power sector since the late 2000s, despite no power plant emissions rule ever actually being in force.

“That tells you something about the capacity to deliver progress on outcomes you want,” she said.

Still, industry groups worry that after the wild swing between the 2022 IRA and the 2025 OBBBA, the U.S. has done permanent damage to its reputation as a business-friendly environment. Since continued swings at the federal level may be inevitable, building back that trust and creating certainty is “about finding ballasts,” Harry Godfrey, the managing director for Advanced Energy United’s federal priorities team, told Heatmap.

The first ballast groups like AEU will be looking to shore up is state policy. “States have to step up and take a leadership role,” he said, particularly in the areas that were gutted by Trump’s tax bill — residential energy efficiency and electrification, transportation and electric vehicles, and transmission.

State support could come in the form of tax credits, but that’s not the only tool that would create more certainty for businesses. Considering the budget cuts states will face as a result of Trump’s tax bill, it also might not be an option. But a lot can be accomplished through legislative action, executive action, regulatory reform, and utility ratemaking, Godfrey said. He cited new virtual power plant pilot programs in Virginia and Colorado, which will require further regulatory work “to get that market right.”

A lot of work can be done within states, as well, to make their deployment of clean energy more efficient and faster. Tyler Norris, a fellow at Duke University's Nicholas School of the Environment, pointed to Texas’ “connect and manage” model for connecting renewables to the grid, which allows projects to come online much more quickly than in the rest of the country. That’s because the state’s electricity market, ERCOT, does a much more limited study of what grid upgrades are needed to connect a project to the grid, and is generally more tolerant of curtailing generation (i.e. not letting power get to the grid at certain times) than other markets.

“As Texas continues to outpace other markets in generator and load interconnections, even in the absence of renewable tax credits, it seems increasingly plausible that developers and policymakers may conclude that deeper reform is needed to the non-ERCOT electricity markets,” Norris told Heatmap in an email.

At the federal level, there’s still a chance for, yes, bipartisan permitting reform, which could accelerate the buildout of all kinds of energy projects by shortening their development timelines and helping bring down costs, Xan Fishman, senior managing director of the energy program at the Bipartisan Policy Center, told Heatmap. “Whether you care about energy and costs and affordability and reliability or you care about emissions, the next priority should be permitting reform,” he said.

And Godfrey hasn’t given up on tax credits as a viable tool at the federal level, either. “If you told me in mid-November what this bill would look like today, while I’d still be like, Ugh, that hurts, and that hurts, and that hurts, I would say I would have expected more rollbacks. I would have expected deeper cuts,” he told Heatmap. Ultimately, many of the Inflation Reduction Act’s tax credits will stick around in some form, although we’ve yet to see how hard the new foreign sourcing requirements will hit prospective projects.

While many observers ruefully predicted that the letter-writing moderate Republicans in the House and Senate would fold and support whatever their respective majorities came up with — which they did, with the sole exception of Pennsylvania Republican Brian Fitzpatrick — the bill also evolved over time with input from those in the GOP who are not openly hostile to the clean energy industry.

“You are already seeing people take real risk on the Republican side pushing for clean energy,” Safford said, pointing to Alaska Republican Senator Lisa Murkowski, who opposed the new excise tax on wind and solar added to the Senate bill, which earned her vote after it was removed.

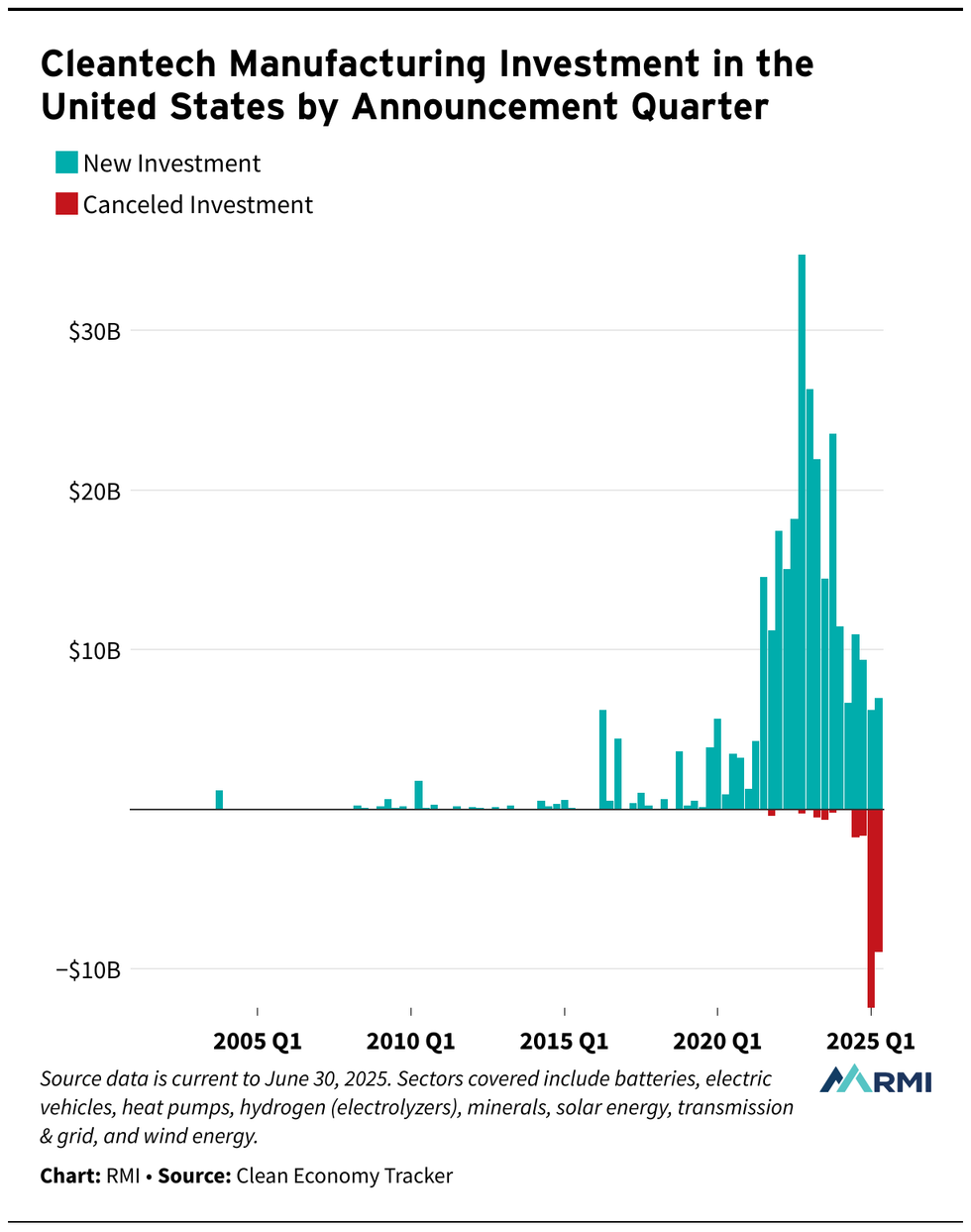

Some damage has already been done, however. Canceled clean energy investments add up to $23 billion so far this year, compared to just $3 billion in all of 2024, according to the decarbonization think tank RMI. And that’s before OBBBA hits Trump’s desk.

The start-and-stop nature of the Inflation Reduction Act may lead some companies, states, local governments, and nonprofits to become leery of engaging with a big federal climate policy again.

“People are going to be nervous about it for sure,” Safford said. “The climate policy of the future has to be polycentric. Even if you have the political opportunity to make a big swing again, people will be pretty gun shy. You will need to pursue a polycentric approach.”

But to Godfrey, all the back and forth over the tax credits, plus the fact that Republicans stood up to defend them in the 11th hour, indicates that there is a broader bipartisan consensus emerging around using them as a tool for certain energy and domestic manufacturing goals. A future administration should think about refinements that will create more enduring policy but not set out in a totally new direction, he said.

Albert Gore, the executive director of the Zero Emissions Transportation Alliance, was similarly optimistic that tax credits or similar incentives could work again in the future — especially as more people gain experience with electric vehicles, batteries, and other advanced clean energy technologies in their daily lives. “The question is, how do you generate sufficient political will to implement that and defend it?” he told Heatmap. “And that depends on how big of an economic impact does it have, and what does it mean to the American people?”

Ultimately, Fishman said, the subsidy on-off switch is the risk that comes with doing major policy on a strictly partisan basis.

“There was a lot of value in these 10-year timelines [for tax credits in the IRA] in terms of business certainty, instead of one- or two- year extensions,” Fishman told Heatmap. “The downside that came with that is that it became affiliated with one party. It was seen as a partisan effort, and it took something that was bipartisan and put a partisan sheen on it.”

The fight for tax credits may also not be over yet. Before passage of the IRA, tax credits for wind and solar were often extended in a herky-jerky bipartisan fashion, where Democrats who supported clean energy in general and Republicans who supported it in their districts could team up to extend them.

“You can see a world where we have more action on clean energy tax credits to enhance, extend and expand them in a future Congress,” Fishman told Heatmap. “The starting point for Republican leadership, it seemed, was completely eliminating the tax credits in this bill. That’s not what they ended up doing.”

On a late-night House vote, Tesla’s slump, and carbon credits

Current conditions: Tropical storm Chantal has a 40% chance of developing this weekend and may threaten Florida, Georgia, and the Carolinas • French far-right leader Marine Le Pen is campaigning on a “grand plan for air conditioning” amid the ongoing record-breaking heatwave in Europe • Great fireworks-watching weather is in store tomorrow for much of the East and West Coasts.

The House moved closer to a final vote on President Trump’s “big, beautiful bill” after passing a key procedural vote around 3 a.m. ET on Thursday morning. “We have the votes,” House Speaker Mike Johnson told reporters after the rule vote, adding, “We’re still going to meet” Trump’s self-imposed July 4 deadline to pass the megabill. A floor vote on the legislation is expected as soon as Thursday morning.

GOP leadership had worked through the evening to convince holdouts, with my colleagues Katie Brigham and Jael Holzman reporting last night that House Freedom Caucus member Ralph Norman of South Carolina said he planned to advance the legislation after receiving assurances that Trump would “deal” with the Inflation Reduction Act’s clean energy tax credits, particularly for wind and solar energy projects, which the Senate version phases out more slowly than House Republicans wanted. “It’s not entirely clear what the president could do to unilaterally ‘deal with’ tax credits already codified into law,” Brigham and Holzman write, although another Republican holdout, Representative Chip Roy of Texas, made similar allusions to reporters on Wednesday.

Tesla delivered just 384,122 cars in the second quarter of 2025, a 13.5% slump from the 444,000 delivered in the same quarter of 2024, marking the worst quarterly decline in the company’s history, Barron’s reports. The slump follows a similarly disappointing Q1, down 13% year-over-year, after the company’s sales had “flatlined for the first time in over a decade” in 2024, InsideEVs adds.

Despite the drop, Tesla stock rose 5% on Wednesday, with Wedbush analyst Dan Ives calling the Q2 results better than some had expected. “Fireworks came early for Tesla,” he wrote, although Barron’s notes that “estimates for the second quarter of 2025 started at about 500,000 vehicles. They started to drop precipitously after first-quarter deliveries fell 13% year over year, missing Wall Street estimates by some 40,000 vehicles.”

The European Commission proposed its 2040 climate target on Wednesday, which, for the first time, would allow some countries to use carbon credits to meet their emissions goals. EU Commissioner for Climate, Net Zero, and Clean Growth Wopke Hoekstra defended the decision during an appearance on Euronews on Wednesday, saying the plan — which allows developing nations to meet a limited portion of their emissions goals with the credits — was a chance to “build bridges” with countries in Africa and Latin America. “The planet doesn’t care about where we take emissions out of the air,” he separately told The Guardian. “You need to take action everywhere.” Green groups, which are critical of the use of carbon credits, slammed the proposal, which “if agreed [to] by member states and passed by the EU parliament … is then supposed to be translated into an international target,” The Guardian writes.

Around half of oil executives say they expect to drill fewer wells in 2025 than they’d planned for at the start of the year, according to a Federal Reserve Bank of Dallas survey. Of the respondents at firms producing more than 10,000 barrels a day, 42% said they expected a “significant decrease in the number of wells drilled,” Bloomberg adds. The survey further indicates that Republican policy has been at odds with President Trump’s “drill, baby, drill” rhetoric, as tariffs have increased the cost of completing a new well by more than 4%. “It’s hard to imagine how much worse policies and D.C. rhetoric could have been for U.S. E&P companies,” one anonymous executive said in the report. “We were promised by the administration a better environment for producers, but were delivered a world that has benefited OPEC to the detriment of our domestic industry.”

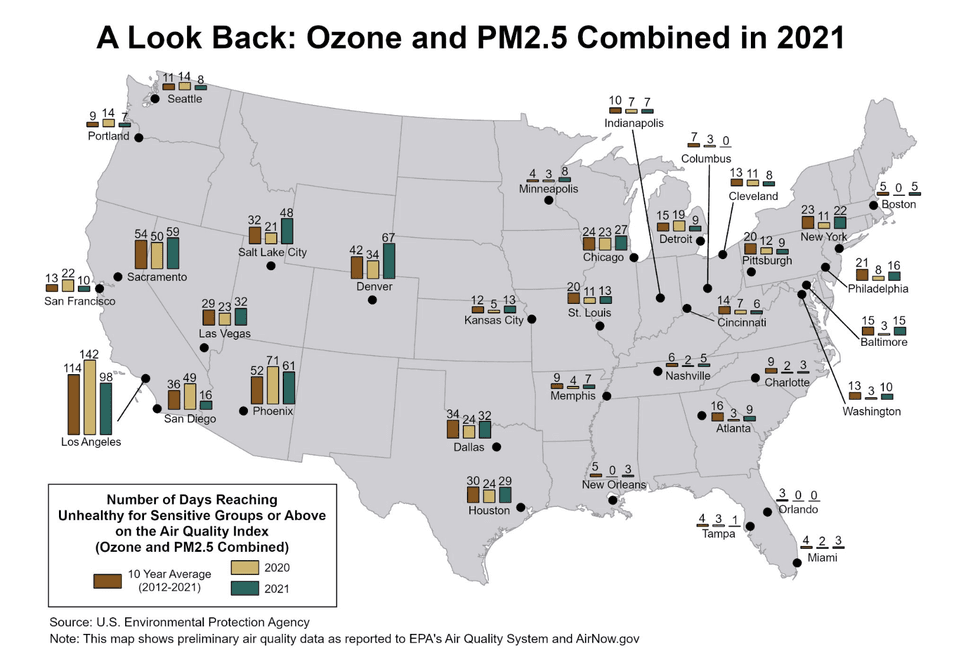

Fine-particulate air pollution is strongly associated with lung cancer-causing DNA mutations that are more traditionally linked to smoking tobacco, a new study by researchers at the University of California, San Diego, and the National Cancer Institute has found. The researchers looked at the genetic code of 871 non-smokers’ lung tumors in 28 regions across Europe, Africa, and Asia and found that higher levels of local air pollution correlated with more cancer-driving mutations in the respective tumors.

Surprisingly, the researchers did not find a similar genetic correlation among non-smokers exposed to secondhand smoke. George Thurston, a professor of medicine and population health at New York University, told Inside Climate News that a potential reason for this result is that fine-particulate air pollution — which is emitted by cars, industrial activities, and wildfires — is more widespread than exposure to secondhand smoke. “We are engulfed in fossil-fuel-burning pollution every single day of our lives, all day long, night and day,” he said, adding, “I feel like I’m in the Matrix, and I’m the only one that took the red pill. I know what’s going on, and everybody else is walking around thinking, ‘This stuff isn’t bad for your health.’” Today, non-smokers account for up to 25% of lung cancer cases globally, with the worst air pollution in the United States primarily concentrated in the Southwest.

National TV news networks aired a combined 4 hours and 20 minutes of coverage about the record-breaking late-June temperatures in the Midwest and East Coast — but only 4% of those segments mentioned the heat dome’s connection to climate change, a new report by Media Matters found.

“We had enough assurance that the president was going to deal with them.”

A member of the House Freedom Caucus said Wednesday that he voted to advance President Trump’s “big, beautiful bill” after receiving assurances that Trump would “deal” with the Inflation Reduction Act’s clean energy tax credits, raising the specter that Trump could try to go further than the megabill to stop usage of the credits.

Representative Ralph Norman, a Republican of South Carolina, said that while IRA tax credits were once a sticking point for him, after meeting with Trump “we had enough assurance that the president was going to deal with them in his own way,” he told Eric Garcia, the Washington bureau chief of The Independent. Norman specifically cited tax credits for wind and solar energy projects, which the Senate version would phase out more slowly than House Republicans had wanted.

It’s not entirely clear what the president could do to unilaterally “deal with” tax credits already codified into law. Norman declined to answer direct questions from reporters about whether GOP holdouts like himself were seeking an executive order on the matter. But another Republican holdout on the bill, Representative Chip Roy of Texas, told reporters Wednesday that his vote was also conditional on blocking IRA “subsidies.”

“If the subsidies will flow, we’re not gonna be able to get there. If the subsidies are not gonna flow, then there might be a path,” he said, according to Jake Sherman of Punchbowl News.

As of publication, Roy has still not voted on the rule that would allow the bill to proceed to the floor — one of only eight Republicans yet to formally weigh in. House Speaker Mike Johnson says he’ll “keep the vote open for as long as it takes,” as President Trump aims to sign the giant tax package by the July 4th holiday. Norman voted to let the bill proceed to debate, and will reportedly now vote yes on it too.

Earlier Wednesday, Norman said he was “getting a handle on” whether his various misgivings could be handled by Trump via executive orders or through promises of future legislation. According to CNN, the congressman later said, “We got clarification on what’s going to be enforced. We got clarification on how the IRAs were going to be dealt with. We got clarification on the tax cuts — and still we’ll be meeting tomorrow on the specifics of it.”

Neither Norman’s nor Roy’s press office responded to a request for comment.