Same goes for the Midwest, according to Stanford air quality researcher Marshall Burke.

It’s not just you: Summers are getting smokier.

For the third year in a row, cities like Detroit, Minneapolis, Boston, and New York are experiencing dangerously polluted air for days at a time as smoke drifts into the U.S. from wildfires in Canada.

Smoke has traveled to these places in the past, Stanford University researcher Marshall Burke told me. But the data is clear that the haze is becoming more severe.

“The worst days are worse,” said Burke, “and you can see that in the averages, the last couple of years are much, much higher across the Midwest and the East Coast than we’ve observed in the past many decades.”

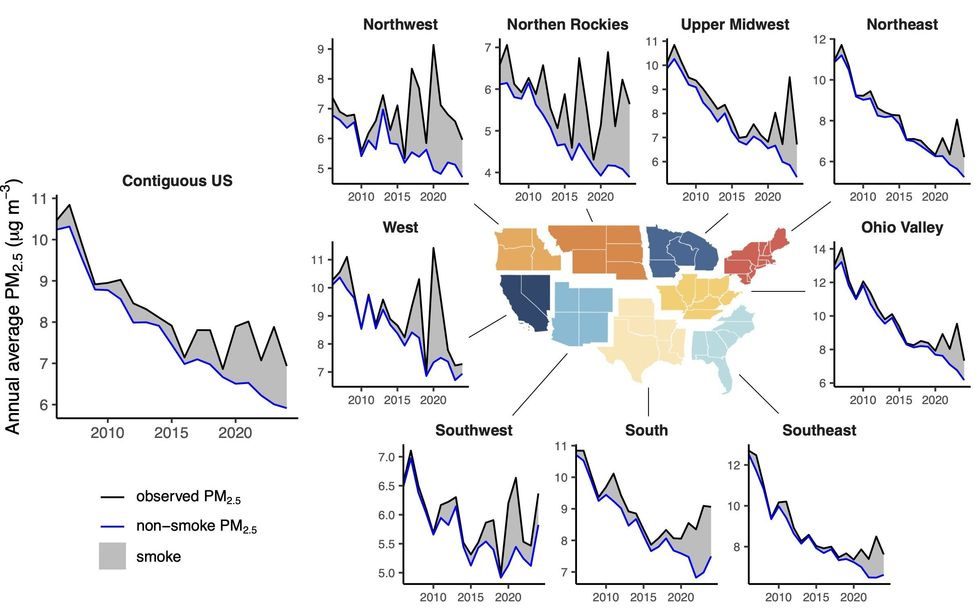

Burke is one of the leading scholars studying wildfire smoke, investigating everything from its effect on air quality, public health, and behavior, to preventative and adaptive public policy responses. In one of his most recent papers, which has not yet been peer reviewed, he and his co-authors analyzed the influence of smoke on air quality over the past two decades, using satellite imagery of smoke plumes to disentangle how much of the fine particulate matter, or PM2.5, measured by air monitoring stations came from fires versus more typical sources like cars and furnaces.

The study shows a sharp increase in the amount of smoke in the air around the U.S. in just the past few years. From 2020 to 2023, the average American breathed in concentrations of smoke-related PM2.5 that were between 2.6 and 6.7 times higher than the 2006 to 2019 average.

The paper also contains a stunning set of charts that show that wildfires are eroding decades of air quality gains — and the efficacy of air quality regulation in general — and that without these smoke events, PM2.5 levels would have been significantly lower.

I caught up with Burke to better understand what we know about this seemingly sudden escalation of smoke events, and what we can do to better protect ourselves from them moving forward. Our conversation has been lightly edited for clarity.

Given the smoke events we’ve seen in the last three years, can we say anything about the next three years?

I don’t think you want to make bets on any specific years. The long run trend, unfortunately, suggests that the last few years are going to be more representative than the sorts of years we got 10 to 15 to 20 years ago. And that is due to the underlying physical climate that’s warming and drying out fuels and making fire spread faster and fires much larger. Larger fires generate more smoke.

Has it all been driven by Canadian wildfires?

No. The East Coast and the Midwest will get exposure from fires as far as California, often in the Northern Rockies. But the recent very bad exposure — 2023 was by far the worst year in the Midwest and East Coast — that was nearly all from Canadian fires. This year, again, it’s nearly all from Canadian fires.

Why is that?

The reason we’ve seen a lot more Canadian fires is the same reason we’ve seen a lot more fires in the U.S. West — increasing fuel aridity. As temperatures warm, forests dry out. And so when you get lightning strikes, which tend to start most of the large fires in Canada, you get faster fire spread and much larger fires.

Interestingly, we’ve seen in Canada fewer total fires over time. Often I see people posting this on Twitter — Climate change is not a problem, we’re getting fewer fires in Canada — and that’s true. I think they’ve reduced other sources of ignitions. But you still get lightning ignitions.

Burned area has gone the other way — you’ve seen an increase in burned area. So, fewer fires, but much larger fires, and these larger fires are the ones that put out a lot more smoke, and the smoke gets pushed into population centers in Canada and into the U.S.

There were really large wildfires in California before 2023. Why weren’t places on the East Coast having smoky days as a result of those?

It’s the way the wind blows and how far it has to go. In the large 2020 and 2021 fire seasons we had in the U.S. West, some of that smoke certainly was making it to the East Coast, but given the prevailing wind patterns and the distance the smoke had to travel, the influence of those fires on air quality was not as big as the recent Canadian fires.

Are there other events that cause comparable air quality degradation to wildfires?

You can get really specific things — if a train crashes and lights on fire and a given town is exposed to really high levels of whatever pollutant for a few days. Sometimes you can get dust events that have broad scale exposure. But basically never do you reach the AQI levels that we see in wildfires. Wildfires are pretty unique in their ability to expose very large numbers of people to a very high level of pollutants for days, or unfortunately now, weeks, at a time. Nothing else compares in the U.S.

If you go to other parts of the world where you have large anthropogenic sources — Indian cities, Chinese cities — it can be quite different. There’s some exceptions. Salt Lake City and places where you get inversions and you get pollution trapped for many days, you can get pretty high levels of exposure, but typically nowhere close to what you get during these acute wildfire events.

When the AQI goes back down to levels that are more common in a city after a smoke event and people feel safer going outside, are you able to measure how much of the PM2.5 remaining in the air is from a wildfire? Does it matter?

We try to measure that directly — on any given day, how much of the PM that you’re experiencing is from wildfires versus from other sources. What you see is these events can turn on really quickly, and they can also turn off really quickly, either because the wind direction changes or because it rains — if it rains, you rain out a lot of these pollutants, and then you’re breathing mostly clean air right away.

We also try to measure, how does human health respond? One thing that science doesn’t give us a crisp answer to yet is, is one day of 100 micrograms better or worse than 10 days of 10 micrograms of exposure? We don’t actually really know. What we do see is people respond very differently to those two scenarios in ways that likely affect health outcomes. On really bad days, people tend to stay inside. In California, total emergency department visits go down instead of up, and that’s because people are not getting in their cars, they’re not getting in car accidents, they’re not spraining their ankle playing football or whatever because they’re staying at home.

On lower smoke days, we see emergency department visits go up. That’s probably because people are not changing their behavior. But, maybe surprisingly, we still don’t have a crisp answer if you’re thinking about asthma or mortality or other cardiovascular outcomes.

What are some of the other questions researchers are trying to answer as this becomes more of a national issue?

All sorts of things. The immediate health impacts that you think about — respiratory outcomes have been the one that’s been measured best in a lot of different settings. Cardiovascular outcomes, I would say the evidence is surprisingly more mixed on that. There’s a long-standing literature that shows cardiovascular mortality impacts of exposure to PM, but for wildfire PM, specifically, that evidence is less clear. Sorting that out and trying to understand whether there are differences is important.

Cognitive outcomes — does it increase your risk of dementia? Does student learning go down? Does it reduce cognitive performance at work? I think there’s emerging evidence that smoke is pretty important. Exposure to air pollution, more broadly, is important, but wildfire smoke, specifically, can impact these outcomes.

Birth outcomes is another one we and others have looked at. You see a pretty clear signature of wildfire smoke in birth outcomes — increases to the risk of pre-term birth, for instance. We used to just think about sensitive populations as elderly populations or people with pre-existing conditions. And basically what the research is showing is, no, actually, everyone is sensitive in some way. The list of people who are likely affected probably includes most, if not all of us.

What are the potential policy responses to this in places that haven’t had to deal with it in the past?

I think there’s three policy buckets. This is more true in the U.S. than Canada, but our fire problem is a combination of a warming climate and a century of fire suppression that has left abundant fuel in our landscapes, so number one is dealing with climate change as best we can, and two is doing something about the accumulated fuel loads. There’s a lot we can do there — prescribed burning is one approach that we and others are studying a lot; mechanical thinning, where you go out and actually remove the fuel. Understanding when and where to do that and what the benefits are is an ongoing scientific challenge, but I think most of the evidence would suggest we’re going to need a lot more of that than we’ve done, historically.

But even if we do a lot of that, we’re going to get more of these smoke events, unfortunately. And so we need to protect ourselves when these events happen. Indoor air filtration works really well, so we need to make sure people have access to filters of various types. The evidence would suggest that we see health impacts even at pretty low levels of exposure, and so if you have a portable filter — I drive my family crazy, I’m turning ours on all the time. You should basically just be running them all the time.

What about in terms of messaging? I’m thinking about city officials or state officials, when a smoke event is coming — and maybe this is still an active area of research — but what’s the current thinking on what message to send to people?

Yeah, I think it is an ongoing area, in terms of exactly how to do this and who to target with the information. The way we typically do this is to set these thresholds, right? So, above some threshold, you get a notice, and below, you don’t. That is understandable.

But what we see in the data is that there’s not some level below which you’re fine and above which you’re screwed. What we see is the more smoke you’re exposed to, the worse off you are, and so our goal should just be to reduce our exposure as best we can. How to message that effectively is not something we have a crisp social scientific answer to yet.

A lot of the advice has historically been that you should stay at home with your windows and doors closed. In California homes that is not very protective because California homes tend to be not very tight. In my view, just telling people to close their windows and doors is not sufficient for protecting health. They need some sort of active filtration — portable air filter, central air — to do that.

The other thing that’s happened in California, and I’ve seen this with my own kids — should we cancel school on really bad days? The assumption is that kids are better protected at home than they would be in the school environment, and that’s just not obviously true. It could be the case that for many kids, schools are better. We don’t know, because we do not have comprehensive measurement of indoor air quality, and this is a huge failing that we need to fix. Just as we measure it pretty comprehensively outside, we’ve got to do the same thing inside, and we just haven’t done this.

On Tesla’s solar factory, Bolivia’s protests, and China’s hydrogen motorcycle

Current conditions: The East Coast heat wave is exposing more than 80 million Americans to temperatures near or above 90 degrees Fahrenheit through at least the end of today, putting grid operators who run PJM Interconnection and the New York electrical systems on high alert • Thunderstorms are drenching the United States’ southernmost capital city, Pago Pago, American Samoa, and driving temperatures up near 90 degrees • Some 3,600 miles north in the Pacific, Guam’s capital city of Hagåtña is in the midst of a week of even worse lightning storms.

American investment in low-carbon energy and transportation has fallen for a second consecutive quarter, ending an unbroken growth trend stretching back to 2019. In the first three months of 2026, total investment in those green sectors reached $61 billion, according to a Rhodium Group analysis published this morning. That’s a 3% drop from the previous quarter — and a 9% decline from the first three months of 2025. Contrary to the Trump administration’s claims to be overseeing a resounding revival of U.S. manufacturing, investments in clean technologies fell for a sixth consecutive quarter to $8 billion, down a whopping 34% from the first quarter of 2025. With federal tax credits for electric vehicles eliminated, investments into battery manufacturing plunged 47% year over year. At the state level, there’s been some progress. Virginia, Colorado, New Mexico, Oklahoma, Michigan, and New York all recorded their largest year-over-year increases over the past four quarters as clean electricity investments at least doubled in each state. “Wind was the primary driver in Virginia, New Mexico, New York, and Colorado; and solar in Michigan and Oklahoma,” the report noted. Sales of electric vehicles, at least on a worldwide level, are also gaining momentum: the International Energy Agency released a report this morning that forecast 30% of global new car sales will be battery electric this year.

The Tuesday night primary elections in six U.S. states, meanwhile, offered mixed results for clean energy supporters. Representative Thomas Massie, the dissident Republican from northern Kentucky who repeatedly broke with his party to criticize President Donald Trump and boasted of his off-grid home’s solar and battery system, lost by double digits to his White House-backed rival. Pennsylvania’s state Representative Chris Rabb, a progressive would-be “Squad” member whose platform mirrors the Green New Deal movement’s key policy demands, won the Democratic primary for the 3rd Congressional District spanning parts of Philadelphia.

During an appearance on Fox News last week, investor and “Shark Tank” star Kevin O’Leary vowed to release documents showing that opponents of the data center complex he proposed building in the Utah desert received funding from China, suggesting the protesters seeking to thwart his $100 billion megaproject were useful idiots in Beijing’s bid to hamper America’s technological progress. Now Secretary of the Interior Doug Burgum is echoing those claims. “It’s not organic and local,” he said Thursday on stage at the Alaska Sustainable Energy Conference in Anchorage, where he was the keynote speaker. “Some of this is foreign-sourced dark money coming in.” The link between rising electricity prices and data centers, he said, was “specious.” He went on to cite a specific example of a small town in North Dakota, from when he served as the state’s governor, where a billion-dollar data center project ended up reducing costs for ratepayers by paying a premium to “buy down” the price households paid. It wasn’t immediately obvious which project he was referring to. But my best guess from some cursory research is that he may have meant the Applied Digital data center in Ellendale, along the southeastern border with South Dakota. In 2023, Prairie Public reported that the facility helped bring down transmission costs, reducing ratepayers’ bills by as much as $61 per year.

Burgum also suggested that Democrats were inflaming the data center issue for political gain. But opposition spans the political spectrum. Tom Steyer, the billionaire progressive running for governor of California, on Monday walked back a response to a candidate questionnaire published by Greenpeace, in which he said he supported a pause on data center development. In a statement to Politico, campaign spokesperson Kevin Liao said that while Steyer wants to ensure protections for electricity prices and water resources, he does not support a temporary ban.

It appears Elon Musk is more likely to follow through on his promise to build enough manufacturing capacity to churn out 100 gigawatts of solar panels in the U.S. than to sell 500,000 Cybertrucks a year. Tesla has selected a site just outside Houston for a new factory that will expand the company’s capacity to churn out panels in its home market. That’s according to Electrek, which said it had independently confirmed a tip from a source pointing the publication to the Brookshire, Texas, site. The plant will be co-located with a battery factory that is already under construction at the same site.

“Any level of commitment to onshore the entire supply chain is a positive sign for American solar manufacturing and supply chain security,” Yogin Kothari, the chief strategy officer at the SEMA Coalition trade group that advocates for U.S. solar manufacturers against cheap Chinese imports, told me in a text message Tuesday night. “We can make solar panels here — we just have to have the commitment to do it.”

New Yorkers could receive $200 rebates from the state as part of Albany’s effort to soothe the pinch of rising electricity prices. On Tuesday, Newsday reported that the program would be part of the state budget agreement, which Democratic Governor Kathy Hochul and the Democrat-led legislature are still working to finalize. It wouldn’t be the first check the Hochul administration is sending out to voters as the former lieutenant governor, who initially came to power when former Governor Andrew Cuomo resigned over alleged sexual misconduct, runs for reelection in November. Last year, in a bid to combat the sting of inflation, the state issued rebates ranging from $150 to $400 depending on filing status and adjusted gross income in 2023.

Though it’s home to the world’s largest known reserves of lithium, landlocked Bolivia’s vast resources have largely remained undeveloped after two decades of rule by a left-wing government leery of foreign investment. The right-wing government that finally broke the Movimiento al Socialismo party’s grip on power in La Paz last year has sought to tap the so-called white gold in its salt flats, particularly as Washington looks for new sources of metals outside of supply chains China largely controls. New documents published Tuesday by the left-wing journalist Ollie Vargas appear to show warrants from Bolivia’s Public Prosecutors Office to arrest protesters and labor leaders connected to recent nationwide strikes on charges that include terrorism. “Bolivia’s government has ordered the arrest of all the main leaders of the indigenous movements and mineworkers unions,” Vargas wrote in a post on X. “They’re being charged for Terrorism for having organised the general strike against hunger. Strike continues regardless, now in day 7.” Clashes between law enforcement and protesters started last week.

China’s hydrogen industry is booming. Its sales of electrolyzers are beating out domestic manufacturers in Europe. Fuel cell vehicles are hitting the roads. Hydrogen refueling stations are opening. But the Chinese hydrogen sector with the highest volume of overseas orders makes something simpler: two-wheeled, hydrogen-powered motorcycles. That’s according to the latest China Hydrogen Bulletin, in which analyst Jian Wu reported from the 6th China International Consumer Products Expo on the island province of Hainan that a maker of the motorcycles had secured $300 million in overseas orders.

The maker of smart panels is tapping into unused grid capacity to help power the AI boom.

The race for artificial intelligence is a race for electricity. Data centers are scrambling to find enough power to run their servers, and when they do, they often face long waits while utilities upgrade the grid to accommodate the added demand.

In the eyes of Arch Rao, the CEO and founder of the smart electrical panel company Span, however, there is a glut of electricity waiting to be exploited. That’s because the electric grid is already oversized, designed to satisfy spikes in demand that occur for just a few hours each year. By shifting when and where different users consume power, it’s possible to squeeze far more juice out of the existing system, faster, and for a lot less money, than it takes to make it bigger.

This is what Span’s smart panel does — it manages the energy drawn by household appliances to help homeowners integrate electric vehicle chargers and heat pumps without triggering the need for electrical upgrades.

Now the age of AI has opened up new opportunities for the company. Last month, Span announced the launch of XFRA, a device that works with Span’s smart panel to power AI applications by tapping into the unused electrical capacity available to homes and businesses.

The company refers to XFRA as a “distributed data center.” It’s sort of like if you chopped up a full-scale data center into washing machine-sized boxes and plugged them into people’s homes; Span’s smart panel then acts as a conductor, orchestrating XFRA’s energy consumption to take advantage of unused power capacity without stepping on the home’s other energy needs. In exchange for hosting one of these XFRA “nodes,” Span will offer homeowners and tenants deeply discounted, if not free, electricity and internet service.

The idea sounded audacious, verging on fantastical, until I watched the economics play out in real time at one of Span’s labs in a warehouse south of San Francisco. Ryan Harris, the company’s chief revenue officer, showed me an XFRA prototype — a metal box about the size of a freezer chest stuffed with Dell servers and Nvidia liquid-cooled GPUs. Span was renting out the processing power from this node and six others to AI users through an online marketplace. On a computer screen next to the unit, a dashboard showed the revenue flowing in from the fleet — $500 over the past 24 hours, and more than $21,000 in the previous three weeks. The numbers continued to tick up as I stood there.

When I first planned to write about Span, XFRA was still a secret. I reached out because its smart panel business, which debuted in 2019, seemed to suddenly take off.

In February, Span announced that PG&E, the largest utility in California, would be installing its devices in thousands of homes beginning this summer. Then in March, the company revealed a partnership with Eaton, one of the biggest legacy electrical equipment companies in the world. Eaton is investing $75 million in Span and will begin selling co-branded electrical panels to its extensive network of distributors, installers, and homebuilders later this year. With the launch of XFRA, Span is becoming something like a utility itself. To date, the company has raised more than $400 million, and will soon close a nearly $200 million Series C.

Of course it will take more than smart electrical panels to serve data centers’ soaring power needs. In this era of unprecedented energy demand growth, building a bigger electrical system is unavoidable — but the size of the investment, and the cost impacts on everyday electricity customers, are malleable. Several recent studies have shown just how big the opportunity is to get more energy out of our existing infrastructure if the entire system can become a bit more flexible.

Last year, Duke University researchers found that on average, the U.S. is utilizing only about half of our electricity generation capacity. Nationwide, they estimated, the grid could accommodate at least 76 gigawatts of new load — close to the total generation capacity installed in California — without having to upgrade the electrical system or build new power plants, so long as those new end-users were somewhat flexible with when and how much electricity they used.

More recently, in a report commissioned by a coalition called Utilize, of which Span is a member, the Brattle Group found that milking just 10% more from our existing grid infrastructure on an annual basis could reduce electricity rates for all end users by 3.4%. Utilities can sell more energy, faster, and spread the fixed costs of running the system across more customers.

What all this meant in practice did not fully click for me until I saw a demonstration of Span’s panel at the lab a few weeks ago. Harris, the CRO, led me to a free-standing wall lined with household appliances, a stripped-down version of an all-electric home. A minisplit heat pump whirred while a high-speed electric vehicle charger was juicing up a Rivian parked on the warehouse floor. A TV screen displayed the amount of power going to each device, as measured by Span’s electric panel.

Together, the heat pump and charger were using about two-thirds of the electric capacity of this demonstration home, which was running on a 100-amp utility service connection. The charger alone was using 48 amps.

The owner of this theoretical home would typically not have been allowed to install such an energy-intensive EV charger without upgrading to 200-amp service. For safety reasons, electrical codes require that residential electrical systems have room for the rare scenario in which a home’s major appliances all run at once. Otherwise, the occupants might accidentally try to draw more power than their utility connection can deliver, overheat their wires, and start a fire. Connections of 100 amps are exceedingly common in homes designed to use gas or propane for cooking and heating, but once you replace those appliances with electric versions, or add an EV charger, you start to push the limit.

A service upgrade to 200 amps can take many months and cost several thousand dollars. The utility typically has to run new wiring to the house, and might even have to augment the grid infrastructure serving the neighborhood.

Span’s smart panel offers an alternative.

“Shall we turn on some load?” Harris said. An engineer on Span’s product team turned on the demo home’s electric water heater, and I watched as the chart on the screen adjusted. The water heater jumped from zero to 22 amps, while the EV charger’s amperage decreased from 48 to 33. When the engineer switched on the clothes dryer, drawing 24 amps, the EV charger’s amperage dropped further.

The electrical panel was tracking how much power was flowing to each of its circuits and throttling the EV charger in response. When the team dialed up the electric stove to heat a pot of water, the EV charger shut off altogether.

Next, Harris requested a boost to the “garage” sub-panel, simulating a hot tub or some power tools kicking on. Soon, the water heater shut off, too. “You have 50 gallons of hot water, so it’s not going to have any negative impact on the customer in that moment,” Harris told me. He showed me an alert that appeared on the Span phone app notifying the homeowner that the system was temporarily limiting power to the EV charger and water heater in order to power other devices.

Users can choose which appliances the system bumps first. While some devices, such as EV chargers, water heaters, and heat pumps, have the ability to be ramped up and down, others will simply shut off.
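To make the logic of the demo concrete, here is a minimal Python sketch of priority-based load shedding. It is not Span’s software. The `Circuit` fields, the `allocate` function, the 80-amp limit, the priority values, the 6-amp minimum charging rate, and the 18-amp heat pump draw are all assumptions chosen for illustration; only the 48-amp charger and 22-amp water heater figures come from the demonstration described above.

```python
from dataclasses import dataclass


@dataclass
class Circuit:
    name: str
    demand_amps: float          # what the appliance would draw if unrestricted
    priority: int               # hypothetical user setting: higher = bumped first
    throttleable: bool = False  # can the device be ramped down instead of cut?
    min_amps: float = 0.0       # lowest level a throttleable device can run at


def allocate(circuits, limit_amps):
    """Return {name: amps} so that the total draw stays at or below limit_amps."""
    alloc = {c.name: c.demand_amps for c in circuits}
    excess = sum(alloc.values()) - limit_amps

    # Visit the most expendable circuits first.
    for c in sorted(circuits, key=lambda c: c.priority, reverse=True):
        if excess <= 0:
            break
        if c.throttleable:
            # Ramp down only as far as needed, but not below the device's floor.
            cut = max(0, min(excess, alloc[c.name] - c.min_amps))
            alloc[c.name] -= cut
            excess -= cut
            if excess <= 0:
                break
        # Still over the limit (or the device cannot be ramped): shut it off.
        excess -= alloc[c.name]
        alloc[c.name] = 0
    return alloc


# Illustrative home, loosely modeled on the demo wall.
home = [
    Circuit("heat_pump", 18, priority=1),
    Circuit("ev_charger", 48, priority=3, throttleable=True, min_amps=6),
    Circuit("water_heater", 22, priority=2, throttleable=True),
]
print(allocate(home, limit_amps=80))
```

With these made-up settings, switching on the water heater trims the charger rather than cutting it, and adding more large loads eventually shuts the charger off and starts ramping down the water heater, which is the same sequence the demo showed. A real panel also has to react to measured current in real time, which this sketch ignores.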

At $2,550 excluding labor for the smallest, most basic smart panel, and just over $4,000 for the biggest one, Span is more expensive than the average dumb panel, which can come in under $1,000. Depending on the home and the complexity of a service upgrade, however, it’s often cheaper to install Span than to move to 200 amps. It’s also almost certainly faster.

Span’s first-generation product couldn’t do any of this. Initially, the company’s value proposition was just to give people more control over their energy usage. The original Span panel gave homeowners with batteries the ability to select which devices they wanted to power during an outage and ensure they didn’t accidentally lose charge on non-essentials. The company had to build an initial customer base and validate the technology in the real world, Rao told me, before it could earn the credibility (and the capital) to deploy the fully realized version of the product.

In 2023, Span debuted “PowerUp,” the software that makes what I witnessed at the lab possible. With PowerUp, Span’s smart panel went from being a cool gadget to a money-saver, helping homeowners skip utility service upgrades. The success of PowerUp opened the door for Span to engage with larger partners, starting with homebuilders.

“We had to demonstrate that we were safe and scalable in the home retrofit category to then get homebuilders — who are typically very, very cost sensitive, are not often at the tip of the spear in terms of technology adoption — to say, this is a proven technology, and it saves you money,” said Rao.

Residential developers face problems similar to those of homeowners, but on a bigger scale. While 200-amp connections have become more standard over the past few decades, new electrical codes that require either fully electric or electric-ready construction are pushing the limits.

“Now the load calculations will put them at 300 or 400 amps of service per home,” Rao told me. “Multiply that by a community of 500 homes, and suddenly you’ve doubled the amount of interconnection you need to bring from the utility.”

This raises the cost of development, and it can also increase the wait time — potentially by years — to get hooked up to the grid. Again, Span offers an alternative. To date, nearly half of the top 20 homebuilders across the U.S. have used the company’s technology, Rao told me. More broadly, its electrical panels have been installed in tens of thousands of homes in all 50 states.

I should note that Span is not the only solution on the market for homeowners or homebuilders to avoid service upgrades — the main alternative is just choosing appliances that don’t use so much power. There are water heaters, clothes dryers, and EV chargers on the market that run on lower amperage, and startups like Copper and Impulse Labs are making stoves with integrated batteries that enable them to do the same. There are also Span-adjacent technologies such as smart circuit splitters that let you plug two power-hungry devices, like an EV charger and a clothes dryer, into the same circuit, and the device will safely modulate power between the two.

“You can hack your way around both problems — one, of a panel upgrade, and two, a Span upgrade, which is also expensive — with cheaper solutions,” Brian Stewart, the co-founder of Electrify Now, a group that provides education and advocacy on home electrification, told me. “But it’s less elegant, let’s just say, than the Span solution.”

Though he started at the home level, Rao has always had his sights set on a much bigger customer: utilities. Several Span executives I spoke to referenced an “infamous” PowerPoint slide from the early days of the company with a bar chart that showed how the company would scale in three phases. First came “back-up,” referring to Span’s initial home battery management product. Next was “power-up,” the software that enabled electrification by avoiding service upgrades. The third was “fleet.”

The same safety principles that trigger service upgrades at individual homes also apply upstream at the neighborhood level. For example, the size of a neighborhood’s transformer, the equipment that changes the voltage of the electricity as it moves along the grid, depends on the combined amperage of the homes it serves. If all those homes are installing EV chargers or heat pumps or whatever else and starting to use more electricity, the utility will have to upgrade the transformer — a cost that gets spread across all of its customers. If a critical mass of the homes have Span panels, however, they can avoid this.

Partnering with major homebuilders earned Span “the right to sit at the table with utilities,” Rao told me, “and say, look, we’ve done this at the home level, at the community level. Imagine if you could do this at the grid level, where the benefit doesn’t just accrue to individual customers or homebuilders, it can accrue to all ratepayers?”

I got a taste of what this looks like back at the lab, where Harris showed me Span’s “fleet capability.” There were actually three demonstration homes set up on the warehouse floor, and Harris showed me how a utility could coordinate a response across multiple Span panels to keep a neighborhood within its safe energy limits.

Imagine it’s a really hot day, and the utility is on the verge of having to institute rolling blackouts. Instead, it can implement what’s called a dynamic service rating event, sending a signal out to the Span panels served by a given transformer to reduce their electrical limit from 100 amps to 60, for example. Rather than the entire neighborhood losing power, a few homes would see their EV charging cut back or their thermostats go up by a few degrees. Of course, not everybody will want to give this kind of control to the utility; customers often cite concerns about comfort and convenience as reasons they are skeptical of these kinds of programs. When I asked Harris whether participating would require that Span customers opt in, he said it was more likely to be opt-out.
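To make the mechanics concrete, here is a rough Python sketch of how a panel might respond when its limit drops from 100 amps to 60. It is not Span's actual algorithm. The `Load` class, the `apply_limit` function, the example loads, and the rule of throttling flexible loads in list order are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Load:
    name: str
    amps: float
    flexible: bool  # True if the panel is allowed to throttle this load


def apply_limit(loads: list[Load], limit_amps: float) -> dict[str, float]:
    """Throttle flexible loads, in list order, until the total fits the limit.

    Hypothetical policy: inflexible loads are never touched, so the total
    can still exceed the limit if flexible loads alone cannot cover the excess.
    """
    excess = max(0.0, sum(load.amps for load in loads) - limit_amps)
    result: dict[str, float] = {}
    for load in loads:
        cut = min(load.amps, excess) if load.flexible else 0.0
        result[load.name] = load.amps - cut
        excess -= cut
    return result


# Made-up household drawing 85 amps in total.
home = [
    Load("ev_charger", 40.0, flexible=True),
    Load("air_conditioner", 20.0, flexible=True),
    Load("oven", 25.0, flexible=False),
]

normal = apply_limit(home, 100.0)     # under the limit, nothing changes
throttled = apply_limit(home, 60.0)   # limit lowered during the event
print(normal)
print(throttled)
```

In this example only the EV charger is cut back, from 40 amps to 15, while the air conditioner and oven keep running. A real system would presumably weigh comfort and customer preferences rather than a fixed order.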

Span has done several pilot projects testing this capability. Installing electrical panels is too complex for utilities to do en masse, though. So the company developed Span Edge, a smaller version of its panel that can be installed at a building’s electricity meter. It does all the same things the larger electrical panel does, without needing to serve as the home’s central nervous system. It still enables homeowners to avoid service upgrades by throttling EV chargers or whatever other devices are hooked up to it, but it’s much simpler to install.

This is the device that the California utility PG&E will begin deploying in homes later this summer. The company will offer Span Edge to homeowners who are installing appliances that might trigger an electrical upgrade, or are considering doing so in the future, through a program called PanelBoost. It’s entirely voluntary, and while participants will have to pay for installation, the panel itself comes gratis.

“This is the first time that there’s a large-scale direct purchase of Span equipment by a utility,” Alex Pratt, Span’s vice president of business development, told me. “This has long been the North Star for the company.”

Paul Doherty, the manager for clean energy and innovation communications at PG&E, told me the company saw Span Edge as a “win, win, win for PG&E, for our customers, and for the environment.” It enables customers to electrify their homes more quickly and affordably, and it lets PG&E sell more electrons without raising rates.

“We’re very bullish about the opportunity for this technology and the benefit that it will bring for the grid and for our customers here in California,” Doherty told me.

Rao sees XFRA as a natural evolution of Span’s basic premise. The company has found that 98% of its customers that have 200-amp service connections have about 80 amps available at any given time, Harris told me. Hosting an XFRA node enables homeowners to monetize that unused capacity.

To start, Span is prioritizing getting XFRA into newly built homes, where the developer handles customer acquisition and installing at scale is straightforward since every home is roughly the same. The company has partnered with the developer PulteGroup to roll out a 100-home pilot program for a total of over 1.2 megawatts of compute capacity. The partners have not specified where it will be yet or whether there will be a single offtaker for the compute.
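A back-of-the-envelope check shows how the pilot's numbers line up with the spare capacity Harris described. The Python sketch below assumes standard 240-volt U.S. residential service and treats the 1.2 megawatts as spread evenly across the 100 homes. Neither assumption comes from Span or PulteGroup.

```python
PILOT_HOMES = 100
PILOT_CAPACITY_KW = 1200.0   # "over 1.2 megawatts", taken as a floor
ASSUMED_VOLTS = 240.0        # assumption: typical U.S. residential service voltage
SPARE_AMPS = 80.0            # spare capacity Harris cited for 200-amp homes

kw_per_home = PILOT_CAPACITY_KW / PILOT_HOMES
amps_per_home = kw_per_home * 1000 / ASSUMED_VOLTS
fits_in_spare_capacity = amps_per_home <= SPARE_AMPS

print(kw_per_home, amps_per_home, fits_in_spare_capacity)
```

Under those assumptions each home would host roughly 12 kilowatts of compute, or about 50 amps, which fits within the 80 amps that Span says is typically available.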

In the longer term, Rao told me, XFRA could be the “unlock” that makes electrification more affordable for people. “There is a utopian end state in my mind where XFRA allows more of our customers to get free energy, free backup, and free internet,” he said.

First, the company will have to find out if anyone is actually willing to let XFRA into their home. During my final conversation with the CEO, after my lab visit, he showed me the infamous slide forecasting the company’s growth from “back-up to power-up to fleet.” The y-axis on the chart showed the number of homes per year the company could address at each stage. The bar for back-up systems landed at 5,000 per year; the bar for power-up came to nearly 100,000. Suffice it to say, Span hasn’t hit these numbers.

“Are you where you want to be today?” I asked him.

Of course, he wasn’t going to say no. “We have contracts in place for hundreds of thousands of homes already with utilities,” he said. “Right now our focus is on execution — delivering on that scale, as opposed to finding that scale. It’s a deployed product, it’s not a downloadable app, so it takes time to physically deploy hundreds of thousands of endpoints. So I think that scale is coming.”

After years of dithering, the world’s biggest automaker is finally in the game.

The hottest contest in the electric car industry right now may be the race for third place.

Thanks to Tesla’s longtime supremacy (at least in this country), its two mainstays — the Model Y and Model 3 — sit comfortably atop the monthly list of best-selling EVs. Movement in the No. 3 spot, then, has become a signal for success from the automakers attempting to go electric. The original Chevy Bolt once occupied this position thanks to its band of diehard fans. Last year, the brand’s affordable Equinox EV grabbed third. And then, earlier this year, an unexpected car took over that spot on the leaderboard: the Toyota bZ.

The surprise is not so much the car itself, but rather its maker. Over the years, we’ve called out Toyota numerous times for dragging its feet about electric cars. The world’s largest automaker took the hybrid mainstream and still produces the hydrogen-powered Mirai. Nevertheless, Toyota publicly cast doubt on the viability of fully electric cars on several occasions and let other legacy car companies take the lead. Its first true EV, the bZ4X, was a disappointment, with driving range and power figures that lagged behind the rest of the industry.

Suddenly, though, the Toyota narrative looks different. Working at its trademark deliberate pace, the auto giant is revealing a batch of new EVs this year, just as competitors Ford, GM, Honda, and Hyundai-Kia are pulling back on their electric lines (and writing off billions of dollars to tilt their companies back toward fossil fuels). There is the Toyota bZ, which Car and Driver called “quicker, nicer inside, and better at being an EV” than the bZ4X, its predecessor. There is the C-HR, a small crossover that had been gas-powered before it became fully electric this year. And there is the large Highlander SUV, a popular nameplate that’s about to become EV-only.

To see what’s changed with the cars themselves, I test-drove the C-HR last week. A decade ago, I’d taken its gas-powered predecessor on a road trip down Long Island and found it to be a fun but frustrating vehicle. Toyota went way over the top with the exterior styling back then to make the little car scream “youthful,” but under the hood was a woefully underpowered engine that took about 11 seconds to push the C-HR from 0 to 60 miles per hour. Now, thanks to the instant torque of electric motors, the new version finally has the zip to go with its looks: It’ll get to 60 in under five seconds, and feels plenty zoomy just driving around town.

Inside, the C-HR feels like an evolved Toyota that isn’t trying too hard to be a Tesla. The brand took the two-touchscreen approach, with a large one in the center console to handle main functions such as navigation, entertainment, and climate control, and a smaller one in front of the driver’s eyes where the traditional dashboard would be. There are still physical buttons on the wheel to manipulate music volume and cruise control, but climate controls are entirely digital.

The big touchscreen is a work in progress. It’s too crowded with information compared to a clean overlay like Tesla’s or Rivian’s, and the design of the navigation software had some profound flaws. (Whether you’re using the voice assistant or keyboard input to search for a destination, the system lags a troubling amount for a brand-new car. Maybe Toyota just expects you to use Apple CarPlay and ignore its built-in system.) Still, the interface is more iPhone-like and intuitive than what Hyundai and Kia are using in their EVs.

Here’s the real problem with the C-HR: Although it accomplishes the mission of feeling like a fun-to-drive Toyota that happens to be electric, it’s not terribly good at being an electric car. The Toyota lacks one-pedal driving, the delightful feature where the car slows itself as soon as you let off the accelerator, negating the need to move your foot between two pedals all the time. Nor does it have a front trunk, a.k.a. frunk, the fun bonus on EVs made possible by the absence of an engine. According to Toyota, the C-HR is so small that engineers simply didn’t have room for a frunk (or a glovebox, for that matter).

The C-HR’s NACS charging port makes it possible to use Tesla Superchargers, and its charging port location on the passenger’s side front should make it simple to reach them. But instead of sitting on the corner of the car, easily reachable by a plug right in front of the parked vehicle, the port is several feet back, just behind the front wheel. And its door opens toward the charger, so the cord has to reach over or under the door that’s in the way. I made it work at a Supercharger in greater San Diego, but only after several frustrating tries and with less than an inch of cord to spare.

Those are the complaints of a longtime EV driver, and they might not matter to some C-HR buyers. The deepest oversight is the C-HR’s nav, which, at least right now, doesn’t have compatible charging stations built into its route planning — a warning message will notify you if the chosen route requires recharging to reach the final destination, but the car won’t tell you where to go. This is a glaring omission for potential buyers who’ll be taking their first EV road trip. (Get PlugShare, folks.) Planned charging is effectively an industry standard — even Toyota’s legacy competitors like Chevy and Hyundai will choose appropriate fast-chargers and route you to them, even if their interface isn’t as seamless and satisfying as what’s in a Tesla or Rivian. At least that’s a problem that could be solved later via software update, though.

Because of these faults, it’s difficult to imagine someone choosing this as their second or third EV. But maybe that’s not the game at all. There is a legion of Toyota drivers out there, many of whom might think about buying their first electric car if their brand built one. Despite its flaws, the C-HR is that. It’s got enough range for city living and occasional road trips, enough power to be fun to drive, and a Toyota badge on the hood.

Whatever their quirks, the very existence of the C-HR and its electric stablemates is a testament to Toyota’s plan to play the long game with EVs rather than ebb and flow with every whipsaw turn in the American car market. And they’re here just in time. Amidst volatile oil prices because of the Iran war, drivers worldwide are more interested in going electric.

In the U.S., that interest has buoyed used EV sales, not new ones, because so few affordable options are on the market. Although the C-HR starts near $38,000, Toyota has begun to offer discounts that would bring it in line with gas-powered crossovers that are $5,000 cheaper. Maybe that’ll be enough for the subcompact to join its bigger sibling, the bZ, on that list of best-sellers.