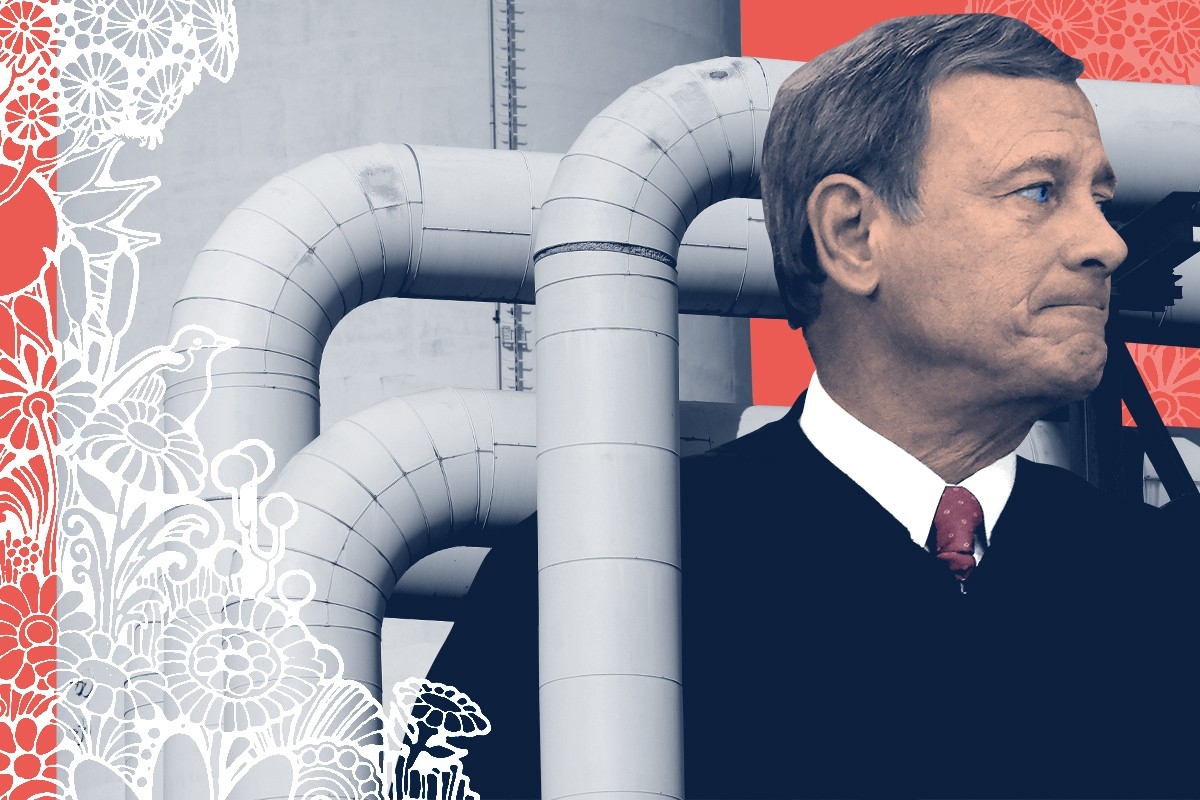

A desire to please the Court may have rendered the EPA’s new power plant rule a little too ineffectual.

If nothing else, give the Environmental Protection Agency credit for this: It seems to understand the assignment.

Last year, the Supreme Court struck down the Clean Power Plan, President Barack Obama’s ambitious attempt to restrict carbon pollution from power plants. That proposal never carried the force of law, and it had been held in suspended animation by the Court — and later the Trump administration — since 2016. But after President Joe Biden took office, Chief Justice John Roberts and the Court’s conservative majority revived it seemingly entirely for the sake of deeming it illegal.

The proposal went far beyond what was allowed by Congress, Roberts ruled. Normally, an EPA standard would require that power plants or factories install some kind of equipment on their smokestacks to meet a pollution cap. “By contrast, and by design,” the Obama proposal could only be satisfied by burning less coal, the chief justice wrote. It required “generation shifting,” forcing states to get more of their power from renewable, nuclear, or natural-gas plants.

That overreached the EPA’s authority under the Clean Air Act, Roberts declared. If the EPA wanted to regulate greenhouse gases, then it needed to treat them like a normal air pollutant — and it needed to act like a normal technocratic agency. Above all, it had to keep its regulations to those that could be accomplished “inside the fenceline” of each power plant.

So last week, when the Biden administration finally unveiled its own draft attempt at regulating carbon pollution from power plants, it knew it was playing on the Court’s, well, court. And it behaved accordingly. The best thing you can say about the EPA’s new power-plant proposal — which will be one of the Biden era’s most important climate regulations — is that it was meticulously, painstakingly tailored to the Court’s demands. If Chief Justice Roberts asked for a normal rule, then the EPA has delivered one so awkwardly, self-consciously normal that it seems a little like a narc. The worst thing about the new rule is that this desire to please the Court may have rendered the rule a little too ineffectual.

If America wants to fight climate change, it must clean up its power plants. Generating abundant, cheap, zero-carbon electricity is the key to the country’s decarbonization strategy.

“If you clean up the power sector, it enables you to clean up other sectors of the economy too, through electrification,” Leah Stokes, an environmental-science professor at the University of California, Santa Barbara, told me. “Electric cars, heat pumps, induction stoves — all these machines can be fueled with clean power.”

Biden’s climate law, the Inflation Reduction Act, will slash emissions from the sector over the next decade, according to federal and independent modeling efforts. But it won’t get the sector all the way there. That’s where the new proposal is supposed to step in.

As per the Supreme Court’s request, the proposal details how every kind of power plant — even those that burn coal or natural gas — can meet their climate requirements for decades to come. It mandates a buildout of carbon capture and storage infrastructure, or CCS, for most coal and some natural-gas plants that plan to stay open long-term.

“The EPA rule makes sure everyone is on the same level playing field. If the Inflation Reduction Act is enough to incentivize CCS in some places, the EPA is gonna make sure everyone is gonna do it,” Nick Bryner, a law professor at Louisiana State University, told me. “I think it’s designed very, very well to work in tandem with the IRA tax credits.”

If the IRA is the regulatory-friendly angel on its shoulder, then the Supreme Court’s decision last year — called West Virginia v. EPA — is the devil. The EPA’s desire to stay on the Court’s good side is even visible in the proposal’s name. Previous administrations have tried to give their power-plant rules a memorable name — Obama had the Clean Power Plan, of course, and the Trump administration christened its effort the “Affordable Clean Energy Rule,” or ACE. The Biden administration, by comparison, named the new proposal:

New Source Performance Standards for Greenhouse Gas Emissions from New, Modified, and Reconstructed Fossil Fuel-Fired Electric Generating Units; Emission Guidelines for Greenhouse Gas Emissions from Existing Fossil Fuel-Fired Electric Generating Units; and Repeal of the Affordable Clean Energy Rule

That’s the NSPSGHGNMRFFFEGU; EGGGEEFFFGU; RACE Rule for short.

I would say that the agency couldn’t have given it a more technocratic name if it tried, except that it obviously tried very hard. “Traditional approach, traditional name,” the EPA’s press office chirped when the Politico reporter Alex Guillén first noted the name. Just what the Supreme Court asked for!, they all but added. The agency is so desperate to look obedient and demure that even its social-media team has been briefed on current federal doctrine.

At the same time, the EPA does “a tremendous amount to make the rule as flexible as possible given the constraints they’re working with in West Virginia v. EPA,” Bryner said. Under the proposal, some natural-gas plants can choose between installing carbon-capture equipment or burning low-carbon hydrogen.

But the rules may have erred on the side of too much flexibility, says Charles Harper, a policy analyst at Evergreen, a climate advocacy group and think tank. Evergreen and other environmental groups are worried that the rules might be too generous to fossil fuel companies. They’re focusing their criticism on two elements of the draft: its handling of natural-gas plants and coal retirements.

First, the EPA rule as proposed would not apply to an overwhelming majority of the country’s natural-gas plants.

A large share of carbon emissions from natural-gas plants comes from so-called “baseload” plants that generate many hundreds of megawatts of electricity at all hours of the day. The rule focuses on these facilities, and it requires them either to install CCS equipment or to burn hydrogen fuel.

But the rule is not nearly so strict about small or medium-sized natural-gas plants. Natural-gas plants that generate less than 300 megawatts of electricity — or that run less than half the time — are essentially exempt from the rule. This excludes 77% of the country’s natural-gas plants from the new EPA proposal, requiring them to make no changes through 2040.

It is unclear what share of carbon emissions these natural-gas plants represent. The EPA did not provide an estimate of their carbon emissions before the deadline for this story.

As a whole, natural-gas power plants emit 43% of the U.S. electricity sector’s carbon pollution, despite producing nearly twice as much power as coal.

Environmental groups say the proposal’s coal problem is simpler to fix. In the draft, the EPA puts coal-fired power plants in different categories depending on when they’re slated to retire. Plants that have no retirement date — or that will remain open after 2040 — must install equipment to capture 90% of their emissions by the year 2030. Plants shutting down after 2035 must make a cheaper set of changes. And plants due to close by 2032 don’t have to make any changes at all, so long as they don’t increase their emissions over the next decade.

Those deadlines are too far off, and the EPA should move them up when it issues a final version of the rule, Harper said. “2040 is pretty far out and would entail a lot of unabated emissions hitting the climate and human health,” he told me.

The EPA still has time to edit this proposal; it will hear public comment over the next few months and probably issue a final version of the rule next year. With the procedural issues resolved, the Supreme Court’s ability to object to that rule is limited to whether carbon capture is feasible and affordable enough to be used under the Clean Air Act.

If there is a bright spot for climate advocates in the new rule, it’s that the Biden administration — and last year’s Democratic majority in Congress — seem to have anticipated that move.

As the House was voting on the IRA last year, Representative Frank Pallone, the chair of the House Energy and Commerce Committee, put a statement in the congressional record saying that the EPA should take the IRA’s generous tax credits into account when proposing power-plant rules. The subsidies should be considered when the agency is deciding whether CCS is feasible and affordable, he said. The EPA cites Pallone’s statement in its new draft.

But ultimately it is Chief Justice John Roberts who will get to decide. Almost a decade ago, a set of conservative states sued the EPA to block it from requiring CCS. That issue has since been held in its own state of suspended animation. It may soon breathe again.

Just look at Heatmap’s latest poll results.

A few times a year, Heatmap News surveys a few thousand Americans on the biggest questions driving the world of energy, environment, and climate change. We’ve spent the past few days writing up the results of our latest poll, which was in the field in late May and which I thought was particularly striking.

It’s worth taking a step back to look at the biggest results together, because the American view of data centers is essentially in free fall:

The upshot of these findings: The public’s turn against artificial intelligence and AI infrastructure is real, widespread, and cross-partisan. It doesn’t matter whether Americans started out tolerating data centers or having no opinion about them; they now seem to resent them en masse.

These results also suggest Americans see little distinction between data centers as energy users and data centers as the physical embodiment of AI and Big Tech. At Heatmap, we can be a wonky and energy-focused bunch, and so we tend to think about data centers primarily as large-scale electricity users. I think most attempts to devise “data center policy” do the same. We know data centers are distinctive in some ways, of course (an AI data center might require more on-site batteries or power generation than, say, an EV factory), but fundamentally we treat each one as just another air polluter, large-scale power user, and light-industrial land user.

But the public does not see things this way. Americans understand data centers in the context of the much broader AI policy conversation about jobs, growth, alignment, and even human extinction. And so, I should add, do politicians: Senator Bernie Sanders has framed his data center moratorium proposal as a response to rapid AI development as much as anything having to do with energy affordability. For that reason, I wonder how long the distinction between these two policy conversations — data centers here, and AI policy over there — can persist.

One last thought on this topic: Is the public’s resentment starting to affect the AI boom overall? I think it might be. It was hard for me not to think of our polling results — or our analysis of canceled data center projects — as I read about a recent JPMorgan analysis that found America’s data center boom is “falling way behind schedule,” in the words of The Wall Street Journal. More than 60% of the data center capacity that is supposed to come online next year has yet to break ground, according to the bank; another 7% is “delayed.”

That’s partially due to equipment and labor shortages, but it also might be what a siting-and-permitting bottleneck would look like. Much like renewable developers or venture capitalists, data center developers work by picking a number of sites and trying to develop on all of them. If only a few sites work out, they’re still in the money. But if a falling share of projects are working out — if building anything, anywhere, is getting harder, everywhere — then it might materialize as delays.

Plus more of the week’s big money moves in critical minerals and electric vehicle charging.

Two of climate tech’s hottest sectors — fusion and critical minerals — dominated this week’s funding headlines. Helion led the pack with its $465 million Series G, helping to push the startup with the sector’s most aggressive commercialization timeline one step closer to putting power on the grid. The round follows last week’s news that German fusion startup Focused Energy secured a $240 million Series A, making it Europe’s most valuable fusion company.

Then there’s the critical minerals. Shortly after venture firm Gigascale Capital announced the close of its $250 million fund targeting the physical clean energy economy, it announced one of its first investments: Red Metals, a startup working to bring copper refining back to the U.S. Terra AI, which is using artificial intelligence to identify promising sites for mineral extraction, also landed fresh funding. Rounding out the week’s deals, EV charging and energy services company InCharge also raised a new round as it looks to expand into a broader suite of energy services.

Leading fusion startup Helion has nearly tripled its valuation with its latest $465 million Series G round, which aims to help the company deliver commercial fusion power this decade — the most ambitious timeline in the industry. Per the terms of the power purchase agreement Helion signed with Microsoft in 2023, the startup plans to turn on its first commercial reactor just two years from now. That’s far sooner than even its most precocious competitors, who aim to put fusion power on the grid by the 2030s at the earliest.

Joshua Kushner’s venture firm Thrive Capital led the round, with participation from new investors including Lux Capital and Alta Park Capital. Thrive now values the company at $15.5 billion.

“The investors that have joined this round, it’s institutional capital, some very marquee investors,” Helion’s CEO David Kirtley told me, explaining they were willing to back an unproven technology thanks to a series of recent milestones that Helion’s latest prototype reactor, Polaris, achieved. “Polaris earlier this year set records for temperature and fuel. We’ve also reduced a lot of the business risk on the regulatory front, the commercial front, and the actual supply chain, too.” In February, Polaris became the first reactor developed by a private fusion company to operate on deuterium-tritium fuel — the most common fuel in the industry — and to achieve a plasma temperature of 150 million degrees Celsius.

Helion differs from many of its peers pursuing more established reactor concepts such as tokamaks, stellarators, or laser-driven inertial confinement. Instead, Helion’s tech uses powerful magnets to collide and compress two fusion plasmas together, generating temperatures over 100 million degrees Celsius and triggering a fusion reaction. It then seeks to capture the electricity this reaction generates via electromagnetic induction — no steam turbine required — similar to the way regenerative braking works in an electric vehicle. If successful, the approach could enable smaller, more modular fusion reactors than conventional designs would.

While the company had originally aimed for Polaris to demonstrate electricity production from fusion in 2024, that date came and went with no new goal set. Kirtley told me that Helion remains on track to meet the terms of its agreement with Microsoft, however. The startup broke ground on its commercial reactor site last year in Malaga, Washington, where it already has access to a substation and grid interconnection from a dormant aluminum smelter. In addition to building out this facility, Helion also plans to use its new funding to boost production at its electrical component manufacturing plant in nearby Everett, which Kirtley said opened earlier this year.

As investors pour billions into artificial intelligence and the infrastructure supporting it, former Meta CTO Mike Schroepfer has raised an inaugural $250 million fund for his venture firm, Gigascale Capital, which is focused on the physical clean energy economy. This represents Gigascale’s first institutional fundraise since its founding in 2023; until now, the firm’s investments have come entirely out of Schroepfer’s own pocket.

The fund will target early-stage companies working in clean energy, grid infrastructure, critical minerals, and AI-enabled design and manufacturing, while reserving capital to continue backing its portfolio companies as they scale. Gigascale has already backed a number of big names in the space, including Commonwealth Fusion Systems, iron-air battery developer Form Energy, solid-state transformer company Heron Power, and clean baseload power startup Arbor Energy.

It’s also already begun investing out of this new fund, announcing this week that it led a $10 million seed round for critical minerals company Red Metals, which also included participation from JB Straubel, founder and CEO of the battery recycling company Redwood Materials. The company aims to help reshore copper refining in the U.S., and will use this fresh capital to support the development of a $70 million refining facility in Charleston, South Carolina. Red Metals says its process can convert copper scrap directly into a finished copper product, bypassing several of the costly and emissions-intensive intermediate steps typical of conventional refining.

The investment offers a window into the kinds of companies Schroepfer is most interested in — businesses that might lack the glamor of an AI startup but represent bipartisan opportunities to address core industrial bottlenecks. Copper, for example, is essential to all sorts of clean energy infrastructure, including transformers, power lines, and anode battery materials, but also critical for defense technologies such as radar systems and ammunition. Yet American copper production has been on the decline, with analysts projecting that the U.S. will face a refined copper shortage of over 2.5 million metric tons annually by 2035.

Sustainability-focused firm S2G Investments has been on a roll recently, announcing a $1 billion fund last month that aims to fill climate tech’s “missing middle” and backing Goshe Energy Storage with up to $40 million in strategic financing last week. Its latest move is leading a $46 million strategic investment round for InCharge Energy, an EV charging and distributed energy management company.

InCharge got its start installing and managing electric vehicle charging stations, and is now operating more than 30,000 assets across North America. Through its software platform and network of technicians, the company handles all monitoring, diagnostics, and on-the-ground repairs, taking on a charger’s full lifecycle to minimize downtime. With this new capital, InCharge plans to expand beyond EV charging and leverage its software and field service network in adjacent industries, including electrical infrastructure work such as panel upgrades and wiring repairs, as well as distributed energy resources like rooftop solar and battery storage systems.

“EV charging was the entry point, but our customers increasingly need help operating more complex energy infrastructure,” Rich Mohr, InCharge’s CEO, said in a press release. “This investment from S2G accelerates our evolution into a full energy solutions provider and allows us to advance smarter technology and strengthen our service capabilities nationwide.”

It’s a hot week, nay a hot year, for critical minerals and subsurface exploration startups, especially for those pairing geology with artificial intelligence. AI-powered mineral exploration company KoBold Metals has raised about $1.2 billion to date, while geothermal exploration startup Zanskar has brought in about $220 million.

Now, another entrant is attracting investor attention. Terra AI has raised a $20 million Series A led by Khosla Ventures to help do it all — use AI to identify prospective sites for critical minerals mining, next-generation geothermal development, and permanent carbon sequestration.

Terra’s platform integrates vast geological and geophysical datasets to generate 3D subsurface models, as well as risk assessments that allow teams to evaluate a range of potential geologic scenarios. From there, the team can identify the best sites for exploratory drilling and thus reduce risk and uncertainty much sooner in the project’s lifecycle. The company even uses what it calls “geology reasoning agents” to help operators create their exploration plans, all with the goal of drastically reducing the notoriously long timeline between discovery and production, which can stretch to nearly two decades for many subsurface projects.

“Minerals sit at the center of every major technology and infrastructure transition, but today’s exploration results are not keeping pace with demand,” Terra’s CEO John Mern posted on LinkedIn. “Our mission is to advance the frontier of AI into the geosciences and help supply the metals and resources the next generation needs.”

One of the biggest fusion funding rounds of the year landed last week, and somehow much of the media, myself included, missed it. German fusion startup Focused Energy raised a whopping $240 million Series A led by RWE, one of Germany’s largest energy companies. Yet unlike most deals of this magnitude, it arrived with little fanfare: no press release in my inbox, no flood of headlines. So in the interest of making up for lost time, here are the details.

With this latest round, which also includes participation from the German Federal Agency for Breakthrough Innovation, the European Innovation Council Fund and Prime Movers Lab, Focused Energy has become Europe’s most valuable fusion company. Like several other leading players, including Inertia Enterprises and Pacific Fusion, Focused Energy relies on an approach known as inertial confinement fusion. This involves using powerful lasers to compress a tiny fuel target, creating the extreme pressures and temperatures required for a fusion reaction. To date, inertial confinement remains the only approach to have demonstrated net energy gain, with Lawrence Livermore National Lab achieving this milestone in 2022.

The startup plans to use this latest funding to build out a demonstration plant in the German state of Hesse, at a site where RWE formerly operated a nuclear fission plant. The company ultimately aims to build a commercial reactor by the mid-2030s.

Catching up with the American Council on Renewable Energy’s Ray Long.

Today’s chat is with Ray Long, CEO of the American Council on Renewable Energy. We first discussed the odds of permitting reform a year and a half ago, for one of the first Q&As in The Fight. Flash forward and we’re still in the same situation, but now also wrestling with added demand for electricity to power data centers. I wanted to talk again about whether he thought the rise of artificial intelligence would increase the odds of some federal deal happening any time soon. The result: a wide-reaching conversation about the future of the electric grid, the struggles to win community buy-in and the sclerotic nature of the U.S. Congress.

The following conversation was lightly edited for clarity.

Do you think the buildout of our energy grid is entwined with the nation’s data center buildout?

When you look at what we need over the next four years — 166 gigawatts, 15 times the peak load of New York City — that’s a lot of power to build. Roughly half of that is for data center and AI growth.

There are five things we can build in the next four years at scale to address that collective amount. First, it’s transmission — the transmission buildout will help to get a modern grid to enable power flow to where it’s needed in a much more effective way. That’s the first step because if we just build all that power, the current grid can’t handle it.

Second, there are four supply technologies that can be built: solar, batteries, wind, and natural gas. All four of those technologies, we know there’s enough equipment here in the U.S. available for purchase that we can build at volume. And I’ll say this — natural gas is only about 10% of all those gigawatts because of the availability of turbines from suppliers. You can’t get enough over the next four years. So when I talk about decarbonization, most of what is built to address this issue is zero-carbon resources, renewable energy resources.

If you were to compare the current conversation around data center development to the debate over developing renewable energy in the U.S. — or energy in general — do you see any similarities or differences?

There are always issues with permitting projects. Communities are always going to have concerns about what’s built in their backyards.

What’s new — and your polling shows this — is the level of concern communities have. But here’s the thing: Most of this can be overcome by developers going in, listening to what the needs of the communities are, then responding and through the permitting process addressing those concerns. You can’t do that 100% of the time. But my experience is, when you take that sort of approach, you can overcome a lot of it.

Most of the large data centers are actually doing the things I’m discussing: going in and saying, Look, we want to be grid interconnected because grid connection at the end of the day means the resources we’re bringing to bear are also going to make a stronger grid. Number two, it’s investing in power generation sources like the ones I said, and those power sources will be on the grid, so they’ll solve for the increased power demands of a community.

Third, water. They should bring the water solutions. You’re seeing data centers coming in and saying it head on now, that they have closed-loop systems or whatever the solution is. At the end of the day, the communities they’re proposing these in have a real negotiating opportunity to make sure they’re holding the data center developers accountable to the needs of the community.

For a community to say we don’t want it here misses a real opportunity for those communities to get the power they need, the grid they need, and the ability to bring down energy costs.

How is the data center debate affecting permitting reform conversations in Washington, from your perspective?

Permitting reform in the U.S. at the state and federal level has been broken for years. The SunZia transmission project? It took 17 years to permit. Ribbon-cutting is in a week or two and there’s still litigation around it. From a business perspective, it’s just untenable, and it’s a miracle that the project is getting built. Developers need a chance to come in and have their project evaluated. Both the community and the developer should be able to get to a go or no-go in a couple of years on one of these projects.

How is data center growth affecting the permitting reform discussion? It’s a very hot issue right now. Right now I think in part because the data center issue is so huge — because we’ve only got four years to solve for the first really big tranche of power we need and prices across the board for electricity are escalating — this is coming to a head. The data center load is a part of the catalyst to get people talking about it [permitting reform].

Do you expect Congress to legislate on permitting reform this year? Anything beyond more conversation?

My hope is that we get a bill. A few weeks ago someone from the administration was quoted as saying they wanted a framework for a bill by the end of May, and it’s June now. We haven’t seen both sides or the administration coalesce around a final project yet.

We’re in a midterm election cycle. Typically it’s very difficult during these cycles to move bills like this. At the same time, with electricity prices increasing and the need to build more, to fix this, I’m very hopeful something will come together. And look at the Senate — you’ve got Republicans and the Democratic ranking members talking about this. It’s all good signs.

If everyone’s talking about energy and affordability during this election, isn’t that a good thing for action in the next Congress?

I’ll say this: You’re seeing the catalyst for it right now with prices rising, and almost every grid operator around the country has raised concerns about shortages at some point this year or next year. It’ll hopefully be enough to have policymakers do something about it this year.