“I am increasingly becoming irrelevant in the public conversation,” says Kate Marvel, a climate scientist who until recently worked at NASA’s Goddard Institute for Space Studies. “And I love it.”

For years, such an exalted state was denied to Marvel. Every week, it seemed, someone — a high-profile politician, maybe, or a CEO — would say something idiotic about climate science. Journalists would dutifully call her to get a rebuttal: Yes, climate change is real, she would say, yes, we’re really certain. The media would print the story. Rinse, repeat.

A few years ago, she told a panel, half as a joke, that her highest professional ambition was not fame or a Nobel Prize but total irrelevance — a moment when climate scientists would no longer have anything useful to tell the public.

That 2020 dream is now her 2023 reality. “It’s incredible,” she told me last week. “Science is no longer even a dominant part of the climate story anymore, and I think that’s great. I think that represents just shattering progress.”

We were talking about a question, a private heresy, I’ve been musing about for some time. Because it’s not just the scientists who have faded into the background — over the past few years, the role of climate science itself has shifted. Gradually, then suddenly, a field once defined by urgent questions and dire warnings has become practical and specialized. So for the past few weeks, I’ve started to ask researchers my big question: Have we reached the end of climate science?

“Science is never done,” Michael Oppenheimer, a professor of geosciences and international affairs at Princeton, told me. “There’s always things that we thought we knew that we didn’t.”

“Your title is provocative, but not without basis,” Katharine Hayhoe, a climate scientist at Texas Tech University and one of the lead authors of the National Climate Assessment, said.

Not necessarily no, then. My question, I always clarified, had a few layers.

Since it first took shape, climate science has sought to answer a handful of big questions: Why does Earth’s temperature change so much across millennia? What role do specific gases play in regulating that temperature? If we keep burning fossil fuels, how bad could it be — and how hot could it get?

The field has now answered those questions to any useful degree. But what’s more, scientists have advocated and won widespread acceptance of the idea that inevitably follows from those answers, which is that humanity must decarbonize its economy as fast as it reasonably can. Climate science, in other words, didn’t just end. It reached its end — its ultimate state, its Really Big Important Point.

In the past few years, the world has begun to accept that Really Big Important Point. Since 2020, the world’s three largest climate polluters — China, the United States, and the European Union — have adopted more aggressive climate policies. Last year, the global clean-energy market cracked $1 trillion in annual investment for the first time; one of every seven new cars sold worldwide is now an electric vehicle. In other words, serious decarbonization — the end of climate science — has begun.

At the same time, climate science has resolved some of its niggling mysteries. When I became a climate reporter in 2015, questions still lingered about just how bad climate change would be. Researchers struggled to understand how clouds or melting permafrost fed back into the climate system; in 2016, a major paper argued that some Antarctic glaciers could collapse by the end of the century, leading to hyper-accelerated sea-level rise within my lifetime.

Today, not all of those questions have been completely put aside. But scientists now have a better grasp of how clouds work, and some of the most catastrophic Antarctic scenarios have been pushed into the next century. In 2020, researchers even made progress on one of the oldest mysteries in climate science — a variable called “climate sensitivity” — for the first time in 41 years.

Does the field have any mysteries left? “I wouldn’t go quite so far as angels dancing on the head of a pin” to describe them, Hayhoe told me. “But in order to act, we already know what we need.”

“I think at the macro level, what we discover [next] is not necessarily going to change policymakers’ decisions, but you could argue that’s been true since the late 90s,” Zeke Hausfather, a climate scientist at Berkeley Earth, agreed.

“Physics didn’t end when we figured out how to do engineering, and now they are both incredibly important,” Marvel said.

Yet across the discipline, you can see researchers switching their focus from learning to building — from physics, as it were, to engineering. Marvel herself left NASA last year to join Project Drawdown, a nonprofit that focuses on emissions reduction. Hausfather now works at Frontier, a tech-industry consortium that studies carbon-removal technology. Even Hayhoe — who trained as a climate scientist — joined a political-science department a decade ago. “I concluded that the biggest barriers to action were not more science,” she said this week.

To fully understand whether climate science has ended, it might help to go back to the very beginning of the field.

By the late 19th century, scientists knew that Earth was incredibly ancient. They also knew that over long enough timescales, the weather in one place changed dramatically. (Even the ancient Greeks and Chinese had noticed misplaced seashores or fossilized bamboo and figured out what they meant.) But only slowly did questions from chemistry, physics, and meteorology congeal into a new field of study.

The first climate scientist, we now know, was Eunice Newton Foote, an amateur inventor and feminist. In 1856, she observed that glass jars filled with carbon dioxide or water vapor trapped more of the sun’s heat than a jar containing dry air. “An atmosphere of that gas,” she wrote of CO₂, “would give to our earth a high temperature.”

But due to her gender and nationality, her work was lost. So the field began instead with the contributions of two Europeans: John Tyndall, an Irish physicist who in 1859 first identified which gases cause the greenhouse effect; and Svante Arrhenius, a Swedish chemist who in 1896 first described Earth’s climate sensitivity, perhaps the discipline’s most important number.

Arrhenius asked: If the amount of CO₂ in the atmosphere were to double, how much would the planet warm? Somewhere from five to six degrees Celsius, he concluded. Although he knew that humanity’s coal consumption was causing carbon pollution, his calculation was a purely academic exercise: We would not double atmospheric CO₂ for another 3,000 years.

In fact, it might take only two centuries. Atmospheric carbon-dioxide levels are now 50 percent higher than they were when the Industrial Revolution began — we are halfway to doubling.

Not until after World War II did climate science become an urgent field, as nuclear war, the space race, and the birth of environmentalism forced scientists to think about the whole Earth system for the first time — and computers made such a daring thing possible. In the late 1950s and 1960s, the physicists Syukuro Manabe and Richard Wetherald produced the first computer models of the atmosphere, confirming that climate sensitivity was real. (In 2021, Manabe won the Nobel Prize in Physics for that work.) Half a hemisphere away, the oceanographer Charles Keeling used data collected from Hawaii’s Mauna Loa Observatory to show that fossil-fuel use was rapidly increasing the atmosphere’s carbon concentration.

Suddenly, the greenhouse effect — and climate sensitivity — were no longer theoretical. “If the human race survives into the 21st century,” Keeling warned, “the people living then … may also face the threat of climatic change brought about by an uncontrolled increase in atmospheric CO₂ from fossil fuels.”

Faced with a near-term threat, climate science took shape. An ever-growing group of scientists sketched what human-caused climate change might mean for droughts, storms, floods, glaciers, and sea levels. Even oil companies opened climate-research divisions — although they would later hide this fact and fund efforts to discredit the science. In 1979, the MIT meteorologist Jule Charney led a national report concluding that global warming was essentially inevitable. He also estimated climate sensitivity at 1.5 to 4.5 degrees Celsius, a range that would stand for the next four decades.

“In one sense, we’ve already known enough for over 50 years to do what we have to do,” Hayhoe, the Texas Tech professor, told me. “Some parts of climate science have been simply crossing the T’s and dotting the I’s since then.”

Crossing the T’s and dotting the I’s—such an idea would have made sense to the historian Thomas Kuhn. In his book, The Structure of Scientific Revolutions, he argued that science doesn’t progress in a dependable and linear way, but through spasmodic “paradigm shifts,” when a new theory supplants an older one and casts everything that scientists once knew in doubt. These revolutions are followed by happy doldrums that he called “normal science,” where researchers work to fit their observations of the world into the moment’s dominant paradigm.

By 1988, climate science had advanced to the degree that James Hansen, the head of NASA’s Goddard Institute, could confidently warn the Senate that global warming had begun. A few months later, the United Nations convened the first Intergovernmental Panel on Climate Change, an expert body of scientists asked to report on current scientific consensus.

Yet core scientific questions remained. In the 1990s, the federal scientist Ben Santer and his colleagues provided the first evidence of climate change’s “fingerprint” in the atmosphere — key observations that showed the lower atmosphere was warming in such a way as to implicate carbon dioxide.

By this point, any major scientific questions about climate change were effectively resolved. Paul N. Edwards, a Stanford historian and IPCC author, remembers musing in the early 2000s about whether the IPCC’s physical-science team should pack it up: They had done the job and shown that climate change was real.

Yet climate science had not yet won politically. Santer was harassed over his research; fossil-fuel companies continued to seed lies and doubt about the science for years. Across the West, only some politicians acted as if climate change was real; even the new U.S. president, Barack Obama, could not get a climate law through a liberal Congress in 2010.

It took one final slog for climate science to win. Through the 2010s, scientists ironed out remaining questions around clouds, glaciers, and other runaway feedbacks. “It’s become harder in the last decade to make a publicly skeptical case against mainstream climate science,” Hausfather said. “Part of that is climate science advancing one funeral at a time. But it’s also become so clear and self-evident — and so much of the scientific community supports it — that it’s harder to argue against with any credibility.”

Three years ago, a team of more than two dozen researchers — including Hausfather and Marvel — finally made progress on solving climate science’s biggest outstanding mystery, cutting our uncertainty around climate sensitivity in half. Since 1979, Charney’s estimate had remained essentially unchanged; it was quoted nearly verbatim in the 2013 IPCC report. Now, scientists know that if atmospheric CO₂ were to double, Earth’s temperature would rise 2.6 to 3.9 degrees Celsius.

That’s about as much specificity as we’ll ever need, Hayhoe told me. Now, “we know that climate sensitivity is either bad, really bad, or catastrophic.”

So isn’t climate science over, then? It’s resolved the big uncertainties; it’s even cleared up climate sensitivity. Not quite, Marvel said. She and other researchers described a few areas where science is still vital.

The first — and perhaps most important — is the object that covers two-thirds of Earth’s surface area: the ocean, Edwards told me. Since the 1990s, it has absorbed more than 90 percent of the excess heat caused by greenhouse gases, but we still don’t understand how it formed, much less how it will change over the next century.

Researchers also know some theories need to be revisited. “Antarctica is melting way faster than in the models,” Marvel said, which could change the climate much more quickly than previously imagined. And though the runaway collapse of Antarctica now seems less likely, we could be wrong, Oppenheimer reminded me. “The money that we put into understanding Antarctica is a pittance compared to what you would need to truly understand such a big object,” he said.

And these, mind you, are the known unknowns. There’s still the chance that we discover some huge new climatic process out there — at the bottom of the Mariana Trench, perhaps, or at the base of an Antarctic glacier — that has so far eluded us.

Yet in the wildfires of the old climate science, a new field is being born. The scientists I spoke with see three big projects.

First, in the past decade, researchers have gotten much better at attributing individual weather events to climate change. They now know that the Lower 48 states are three times more likely to see a warm February than they would without human-caused climate change, for instance, or that Oregon and Washington’s record-breaking 2021 heat wave was “virtually impossible” without warming. This work will keep improving, Marvel said, and it will help us understand where climate models fail to predict the actual experience of climate change.

Second, scientists want to make the tools of climate science more useful to people at the scales where they live, work, and play. “We just don’t yet have the ability to understand in a detailed way and at a small-enough scale” what climate impacts will look like, Oppenheimer told me. Cities should be able to predict how drought or sea-level rise will affect their bridges or infrastructure. Members of Congress should know what a once-in-a-decade heat wave will look like in their district five, 10, or 20 years hence.

“It’s not so much that we don’t need science anymore; it’s that we need science focused on the questions that are going to save lives,” Oppenheimer said. The task before climate science is to steward humanity through the “treacherous next decades where we are likely to warm through the danger zone of 1.5 degrees.”

That brings us to the third project: Climatologists must create a “smoother interface between physical science and social science,” he said. The Yale economist William Nordhaus recently won a Nobel Prize for linking climate science with economics, “but other aspects of the human system are still totally undone.” Edwards wanted to get beyond economics altogether: “We need an anthropology and sociology of climate adaptation,” he said. Marvel, meanwhile, wanted to zoom the lens beyond just people. “We don’t really understand ... what the hell plants do,” she told me. Plants and plankton have absorbed half of all carbon pollution, but it’s unclear if they’ll keep doing so or how all that extra carbon has changed how they might respond to warming.

Economics, sociology, botany, politics — you can begin to see a new field taking shape here, a kind of climate post-science. Rooted in climatology’s theories and ideas, it stretches to embrace the breadth of the Earth system. The climate is everything, after all, and in order to survive an era when human desire has altered the planet’s geology, this new field of study must encompass humanity itself — and all the rest of the Earthly mess.

Nearly a century ago, the philosopher Alexandre Kojève concluded, first, that it was possible for political philosophy to gain a level of absolute knowledge about the world and, second, that it had done so. In the wake of the French Revolution, some fusion of socialism and capitalism would win the day, he concluded, meaning that much of the remaining “work to do” in society lay not in large-scale philosophizing about human nature, but in essentially bureaucratic questions of economic and social governance. So he became a technocrat, and helped design the market entity that later became the European Union.

Is this climate science’s Kojève era? It just may be — but it won’t last forever, Oppenheimer reminded me.

“Generations in the future will still be dealing with this problem,” he said. “Even if we get off fossil fuels, some future idiot genius will invent some other climate altering substance. We can never put climate aside — it’s part of the responsibility we inherited when we started being clever enough to invent problems like this in future.”

The move by University of Pennsylvania researcher Danny Cullenward intensifies a debate over integrity at the carbon accounting organization.

A well-known scientist has resigned from the independent oversight board of the Greenhouse Gas Protocol, renewing questions about the integrity of one of the world’s most important arbiters of carbon emissions standards.

Danny Cullenward, who is also an economist and lawyer, notified the organization’s leadership on Monday that he no longer has “any confidence in the Protocol’s governance structure,” according to his resignation letter, which he posted publicly. He had previously tried to sound alarms about the organization and its lack of transparency in a paper he published in April.

Cullenward’s resignation letter goes a step further, accusing the Protocol of covering up an internal complaint he and a fellow board member filed, and of handing the reins of at least one of the organization’s standards to “a secret, industry-dominated drafting process.”

The Greenhouse Gas Protocol declined to comment on Cullenward’s resignation or answer questions about his account of events leading up to it.

The Protocol launched in the late 1990s as a joint project of the World Resources Institute, an environmental group, and the World Business Council for Sustainable Development, an industry association. Today it is the world’s leading standard-setter for corporate carbon accounting. More than 22,000 businesses rely on its methodologies to calculate and report their emissions. While adhering to the Protocol’s standards is still mostly voluntary, it will soon become a requirement under European Union and California disclosure rules.

Cullenward’s accusations arrive in the middle of a major revamp at the organization that began in 2022, designed specifically to improve the integrity of its corporate accounting standards. As part of the overhaul, it also put in place a new governance structure to improve transparency and accountability. Technical working groups made up of external experts would develop proposals to revise the standards to more accurately capture companies’ full carbon footprints, and then an Independent Standards Board would review and ultimately approve them. The Protocol appointed Cullenward to the independent board as one of its inaugural members in September 2024.

Cullenward’s reasons for leaving, as described in his letter, center around the development of a forest accounting standard to be used by companies that manage forests or have wood in their supply chains. The technical working group assigned to develop the standard could not reach a consensus, and ultimately submitted two competing proposals to the Board. Members associated with landowner groups and the forest products industry authored one of them, while the group’s research scientists primarily wrote the other.

According to Cullenward’s letter, as well as memos written by the academic scientists in the working group reviewed by Heatmap, the industry proposal, known as the “managed land proxy” method, would enable companies to claim they were removing carbon from the atmosphere when they cut down trees or used virgin wood. “This is the opposite of what physically happens when a forest is cut down,” Cullenward writes.

The method produces this counterintuitive result by allowing companies to take credit for all the carbon sucked up by the forests they manage, or in some cases by all the forests in a region, even if the company had no part in boosting that sequestration. If companies were to apply this accounting method to their products, Cullenward adds, not only would making virgin paper appear to involve zero carbon emissions, it would also apparently help to restore the climate. It would also look much more advantageous to the climate than producing recycled paper.

His concern is not just with this proposal, but also with how the Protocol handled a complaint filed by a proponent of the managed land proxy approach that challenged the scientists’ expertise. In response, the organization quietly solicited opinions from additional outside scientists on the two proposals.

Cullenward’s letter asserts that this was a decision made solely by the board’s chair, Alexander Bassen, alongside Protocol staff and without the rest of the board’s input. He writes that when these external comments were later shared with him and his fellow board members, the authors were “presented as neutral arbiters of a contested scientific debate,” even though they had been specifically referenced in the complaint as supporters of the managed land proxy approach.

Cullenward says he tried to “pursue internal accountability” but faced retaliation. In February he and another board member, an Australian forest ecologist named Heather Keith, filed an official complaint. The Protocol enlisted an outside mediator to resolve their dispute, but Cullenward says the hired adjudicator failed even to read the full complaint before meeting with him. The mediator also did not review any of the recordings of key board meetings referenced in the complaint, and was barred from speaking to technical working group scientists.

Cullenward and Keith eventually received a response to their complaint from the mediator but were told they could not share it, and the matter was deemed closed. According to a spokesperson for the Greenhouse Gas Protocol, who reached out to me with an update on the matter in late May, an independent review found “some process shortcomings” but “no material breach” of the organization’s rules or of due process. They added that “recommendations to address process shortcomings and strengthen conflict resolution are being reviewed and implemented.”

I reached out to Keith, who told me in an email that she was “deeply concerned about Danny’s resignation.” She praised his “wide-ranging expertise” in carbon accounting, law, and governance, and his “extensive contributions” to the board’s discussions. “One of the most valuable assets in a Board member is his demonstrated independence in making judgements that is based on a sound knowledge of climate science,” she wrote. The board “should be encouraging more people with Danny’s expertise and motivation for climate action to benefit the global community, not losing such valuable people.”

Cullenward’s primary concern moving forward is a new partnership between the Greenhouse Gas Protocol and the International Organization for Standardization, which establishes technical specifications for a range of industries and purposes, to unify their emissions accounting rules. The two groups’ first joint undertaking is to develop a standard for assigning emissions to specific products, which will include forest carbon accounting.

While the Greenhouse Gas Protocol has publicly listed the members it assigned to the joint working group, the ISO is under no obligation to do so. Cullenward asserts in his letter that the new joint groups “operate with confidential membership that is heavily tilted in favor of industry interests.” He says a representative from the World Business Council for Sustainable Development told him that the group may draw on an existing ISO standard based on the managed land proxy approach.

Meanwhile, over a year after the corporate forest accounting technical working group submitted its proposals, the Independent Standards Board is now contemplating kicking off a seven-month public comment period on the recommendations, Cullenward writes. He concludes that this elongated comment period is just for show, and that the issues “have already been delegated” to the joint working group with the ISO.

I asked the Greenhouse Gas Protocol how it planned to ensure “transparency and accountability for its stakeholders,” as it has previously promised, when the membership and meeting minutes of the joint ISO working groups are not disclosed to the public. I also asked, for the second time, whether the organization plans to publish meeting minutes from Independent Standards Board meetings — a requirement under the board’s governing rules that it has not followed. The Protocol declined to answer.

The U.S. electric vehicle maker’s make-or-break model, the R2, is finally here — and it’s pretty fun to drive.

The attainable Rivian is here, and not a moment too soon.

It’s been nearly a decade since the U.S.-based startup revealed its prestige R1T pickup truck and R1S SUV, earning plenty of “the next Tesla” hype and becoming lots of people’s favorite electric car brand. But with those R1 vehicles starting around $70,000 — and with nicer versions hitting six figures — lots of would-be drivers have been waiting for R2, the scaled-down vehicle first announced in 2024 and meant to take Rivian to the masses.

Now the moment has arrived: On Tuesday, Rivian began shipping the first version of the R2. I had the privilege of test-driving the vehicle that will make or break the brand last week on the highways and mountain roads outside Park City, Utah. If my experience is any indication, R2 is up to the job of making Rivian mainstream.

“A word we used really heavily throughout the development of R1 was … inviting,” CEO and founder RJ Scaringe said to the journalists at last week’s event. “We use that in the sense of inviting people to use it, inviting people to get it dirty, inviting people to have new experiences and new adventures in it. But by virtue of it being a flagship product, its price wasn't as inviting as we wanted. And so R2 really in many ways is the culmination of the full brand promise.”

First, the facts: R2 looks at first glance like a smooshed version of Rivian’s big SUV, with the same signature headlights and basic shape. It’s a little shorter, a little narrower, and 2,000 pounds lighter than its big cousin, seating five people as opposed to the seven that can cram into R1S. Range from the 88-kilowatt-hour battery is in the high 200s of miles and tops 330 miles for some editions.

The stat that matters most is price. The first R2s out of the gate will cost around $58,000, and gradually less expensive tiers will arrive later this year and into next, culminating in the $45,000 base version at a yet-to-be-determined date. No, an EV around 50 grand doesn’t sound like a car for the common man. But as Scaringe noted, that is now the average price for a new car in America, which certainly makes R2 attainable for millions more drivers.

It’s also a lot of car for that money. Thanks to its boxiness, R2 feels like it has loads of room on the inside. Because of an improved battery shape, there’s actually more legroom for the rear passengers compared to R1. Double gloveboxes and a pretty big frunk add to the available storage space. (Rivian even fixed a pet peeve of R1 owners who couldn’t fit their monstrous water bottles in a convenient spot.)

Yet R2 doesn’t drive big. It rides high and offers the driver a wide view, but it’s not a tank like R1, which I found difficult to park in compact spaces like the one at my home. Its 5,000-pound weight is still a lot of heft (a Tesla Model Y is more like 4,000 to 4,400 pounds), but the car still feels zippy. The mass is simply overwhelmed by electric power, especially in the higher-end versions Rivian let us drive in Utah.

As the engineers on site noted, developing the R2 was mostly an exercise in subtraction — not just shrinking the physical size from the R1, but also making R2 cheaper to build by removing miles of wiring (something the brand visualized at the event by showing off bundles of copper in the style of a rubber band ball, representing all that had been cleaved). But R2 needed its own bells and whistles so it would feel desirable on its own and not appear to be merely a discount Rivian.

Those additions include rear windows that go all the way down, unlike the halfway that’s common in most passenger cars; the rear windshield descends, too. A fun button up front marked with a “5” will lower all four passenger windows plus the back windshield at once. In response to complaints about every function running through the center touchscreen, Rivian put in some buttons — or, rather, some wheels. On each side of the steering wheel, reachable by a person’s thumbs, are haptic “halo” buttons that can be pushed side to side or spun. These are not at all the subtle, slight wheels you’d find in a Tesla, but rather beefy spinners meant to feel rugged and easy to manipulate.

During testing, I struggled with how hard to push them and in what direction to enter the desired mode that could then be adjusted by spinning the wheel, be it climate, music, drive mode, or the positioning of the side mirrors. But something tells me Rivian will refine the haptic feedback as R2 owners put miles on their vehicles. And even complicated or layered menus become second nature once it’s your own car.

Many of these vehicles will never go off-road, but Rivian still had to prove the R2’s backcountry bona fides. This is the adventure EV brand, after all, and part of the pitch for R2 is how much more it can do than a Tesla Model Y or Chevy Equinox EV. Keen to prove the point, Rivian swapped us halfway through the test drive into R2s with their tire pressure halved to make them mountain-ready, then directed us onto the rutty, boulder-pitted roads of Wasatch Mountain State Park to wade through water crossings and up to the top of a plateau. Here the touchscreen becomes an adventurer’s dream, displaying the vehicle’s moment-by-moment elevation, pitch, compass direction, and much more. Tap into the camera system and it can bring up the close-up view of what’s right in front of the vehicle and show both front wheels to help navigate around pointy rocks and cavernous ruts.

R2 never wavered or felt as if it had taken on too much. It has all the capability you’d need as a trail warrior, and more than enough for the affluent professional who yearns to become outdoorsy. After so many decades when the world’s truly rugged vehicles were also low-mile-per-gallon polluters, it feels like a breakthrough just getting this much can-do spirit out of an electric car.

More salient for the urban dweller is Rivian’s big push into autonomous driving. As we noted in December after the brand’s AI and Autonomy Day, R2 is the company’s big play in that race: It vastly ups the amount of road on which Rivian’s hands-free autonomous driving feature works, raising it to about 3.5 million miles in the U.S. The company also told us that by the end of the year it would introduce point-to-point service, where the vehicle really can drive itself for the duration of a trip, with more autonomous features potentially on the way. During the test drive, the hands-free tech felt steady and assured on twisty local roads.

Rivian has a long way to go here, given Tesla’s major head start in developing vehicle autonomy. One big asset it does have is the thousands of drivers who’ve bought R1s and who opted to share their driving data with the company, helping it build a dataset that maps and models the world. The less expensive R2 should get many more people into a Rivian vehicle and accelerate that learning curve. That, plus the eventual addition of a LIDAR sensor to some models, will allow the kind of full autonomy that R2 will use when it goes into service as an Uber robotaxi following the ride-sharing company’s $1.2 billion investment earlier this year.

It’s difficult to overstate the importance of this vehicle for Rivian, or for the electrification of the American car. For the brand, this must be its Model 3 moment, where it leaps from a niche brand selling luxe status symbols to one that builds a huge number of EVs — and in the process hopefully becomes financially stable after years in the startup “valley of death,” between promise and profitability. Billion-dollar investments from the likes of Volkswagen and Amazon buoyed Rivian during those years; now R2 has to deliver on them.

As for the U.S. EV market as a whole? It also needs the R2. New EV sales are sagging in America, even amid gasoline price shocks caused by the Iran War. A $50,000 Rivian isn’t exactly the solution to the auto industry’s affordability crisis, but Scaringe argued that U.S. buyers also lack great choices. The industry leaders — Tesla’s Model 3 and Model Y — have been on the market since 2018 and 2020, respectively, with subtle tweaks and updates since then. New offerings from legacy carmakers like Chevy and Toyota are a welcome change. Still, they feel like a Chevy or Toyota that’s been electrified, not like a vehicle built from the ground up to deliver on the promise of what a great EV can be.

Yet even now, the learnings from the EV startup world that led to R2 — dramatically simplified manufacturing to bring down costs, advanced touchscreen infotainment with elegant interfaces, EVs built fully integrated from the ground up rather than adapted from existing gas cars — are already influencing the rest of the industry. Just look at what Ford’s skunkworks operation is up to as the Detroit giant tries to catch up in the EV race starting next year. A successful R2 would push the car industry further in this direction.

R2 succeeds in bringing the feeling of a lusted-after EV to the five-seat, fully capable SUV, which has become the de facto family car of this country. And for all of Rivian’s focus on catching up in the AI and autonomy race, R2 still feels like a car you’re supposed to love to drive yourself, whether that’s to work, to grandma’s, or to the top of a mountain. It is, indeed, inviting. With Tesla having publicly abdicated its role of building great EVs for humans to drive, Rivian is now primed to seize the position.

Current conditions: China has triggered emergency warnings across six provinces as heavy rainfall floods the countryside • A magnitude 7.8 earthquake struck the Philippines, leaving at least 32 dead and more than 100 injured in building collapses • Temperatures in Albuquerque, New Mexico, are rising near 100 degrees Fahrenheit.

On Tuesday, Tennessee is set to become the first state in the nation with its own regulatory framework for nuclear fusion plants. You may be wondering, why Tennessee? The two-word answer: Oak Ridge. The Volunteer State has operated as a hub for nuclear energy research and development for more than 60 years, feeding off both the Oak Ridge National Laboratory and the Tennessee Valley Authority’s capacity to help commercialize new technologies. Now state regulators are establishing the first dedicated rulebook for building future fusion plants. “Tennessee has been named the top state in the nation for nuclear energy industry growth, and for good reason,” David Salyers, the commissioner of the Tennessee Department of Environment and Conservation, said in a statement. “This latest step supercharges our reputation as the global hub for nuclear innovation and positions us as the most responsive state to new advanced nuclear companies clamoring to call Tennessee home.”

It’s not the only government betting that the various attempts to commercialize fusion as an energy source will pan out in the near future. On Monday, NucNet reported that the British government had drafted legislation to “create conditions” for deploying fusion technology.

Typically, the rule of thumb in journalism is that the answer to a question headline is almost always “no,” otherwise the headline would simply state the fact. But this one is a genuine open question that climate-tech investor Shanu Mathew raised Monday in a post on X: Could PJM Interconnection, the nation’s largest grid operator, break apart? The speculation traces back to a Bloomberg article from last week in which unnamed federal officials suggested that the operator, which runs the grid from the Illinois prairie to the Jersey Shore, could split up as data centers put strain on the 13-state system’s electricity supplies.

The talks are happening as two of the largest utilities in PJM, NextEra and Dominion, discuss a potential $420 billion megamerger that would create, among other things, a storage giant, as Heatmap’s Matthew Zeitlin reported. The discussions are also occurring against the backdrop of major artificial intelligence companies going public, with ChatGPT-maker OpenAI following Claude-developer Anthropic in filing a confidential S-1 with the Securities and Exchange Commission this week.

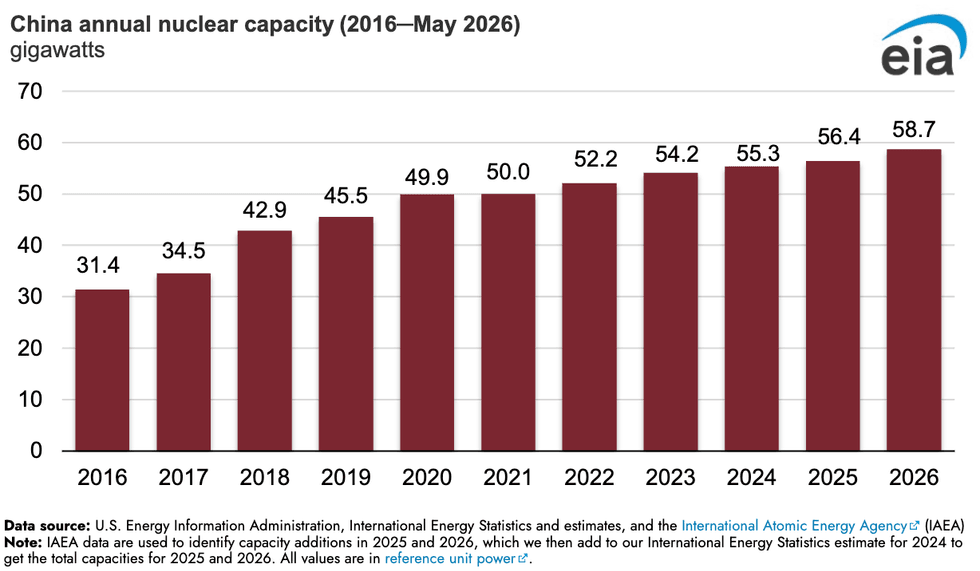

In the United States, you can’t build a single commercial nuclear reactor in a decade. In China, you can apparently double the size of your entire fleet in that time. Between 2016 and 2024, China’s nuclear generation capacity soared by 76%, according to a new Energy Information Administration analysis. That’s equal to 24 gigawatts. In 2025, China added another 1.1 gigawatts, followed by 2.2 gigawatts more this year just through May. The country has at least 36 other reactors under construction, accounting for nearly half of the world’s ongoing nuclear projects.

Just five years ago, the global aviation industry made a landmark pledge to achieve net zero emissions by 2050. Now the head of the industry’s global body says that goal is likely already out of reach. Willie Walsh, the director of the International Air Transport Association, told The Guardian that “hope was fading fast” and a new “realistic timeline” needed to be established. More than half of the planned decarbonization of air travel relied on the development of sustainable aviation fuels that remain nascent at best. Money is pouring into the technology, as Heatmap’s Katie Brigham reported. But uptake so far “is about 0.2% of fuel,” Nicole Cerulli, a research associate for transportation and logistics at the market research firm Cleantech Group, told her.

One cold autumn morning three years ago, I made my way across downtown Ulaanbaatar to an American-style diner called Millie’s Espresso to meet with a Mongolian mining executive who was thrilled about Western countries’ recent investments in his industry. Landlocked between Russia and China, the geographically huge but sparsely populated democracy hoped to shore up its sovereignty by forging deals with the U.S., Europe, South Korea, and Japan to satisfy soaring demand for minerals. Already Oyu Tolgoi, one of the world’s largest copper mines, was underway in the country’s Gobi desert south, and that year the French government inked a deal to start producing lithium and uranium in Mongolia. Now the uranium part of that agreement is moving forward. On Monday, World Nuclear News reported that the French state-backed nuclear fuel producer Orano had broken ground on its first mine in the Central Asian nation. The project raised some eyebrows among Mongolians who complained that Soviet-era Russian uranium mining left behind nasty pollution, and the terms of Ulaanbaatar’s deal with Rio Tinto over the new copper mine have been politically contentious. But the sprawling, smog-choked capital city — the only major urban development in the rural nation — is in need of more power.

Russia had promised to help meet that need by building Mongolia’s first nuclear power plant. A politically well-connected businessman from Ulaanbaatar, whom I caught up with last night over text to ask about the mood in the country, said Moscow’s bid had drawn more positive attention than France’s plans to mine fuel for its own reactors. “In Ulaanbaatar, we experienced electricity shortages last winter that caused apartment heating to stop during the winter. It was crazy,” the executive told me. While he’s typically a critic of the ruling Mongolian People’s Party, which formed out of the old Communist Party apparatus following the fall of the Soviet Union, the executive told me the government’s actions were “good and brave” steps to “diversify investment in Mongolia.”

I hate to close out on a bad note, but this one felt important to include: America’s screwworm problem is getting worse. On Monday, the U.S. Department of Agriculture confirmed the first case of the flesh-eating parasite in a dog in New Mexico, in addition to four cases in total in Texas. “This situation is evolving, and we expect new information to emerge as our investigation continues,” Dudley Hoskins, USDA’s under secretary for marketing and regulatory programs, said in a statement.