


If it turns out to be a bubble, billions of dollars of energy assets will be on the line.

The data center investment boom has already transformed the American economy. It is now poised to transform the American energy system.

Hyperscalers — including tech giants such as Microsoft and Meta, as well as leaders in artificial intelligence like OpenAI and CoreWeave — are investing eye-watering amounts of capital into developing new energy resources to feed their power-hungry data infrastructure. Those data centers are already straining the existing energy grid, prompting widespread political anxiety over an energy supply crisis and a ratepayer affordability shock. Nothing in recent memory has thrown policymakers’ decades-long underinvestment in the health of our energy grid into such stark relief. The commercial potential of next-generation energy technologies such as advanced nuclear, batteries, and grid-enhancing applications now hinges on the speed and scale of the AI buildout.

But what happens if the AI boom falters and data center investment collapses? It is not idle speculation to say that the AI boom rests on unstable financial foundations. Worse, however, is the fact that as of this year, the tech sector’s breakneck investment into data centers is the only tailwind to U.S. economic growth. If there is a market correction, there is no other growth sector that could pick up the slack.

Not only would a sudden reversal in investor sentiment make stranded assets of the data centers themselves, which would lose value as their lease revenue disappeared, but it would also threaten to strand all the energy projects and efficiency innovations that data center demand might have called forth.

If the AI boom does not deliver, we need a backup plan for energy policy.

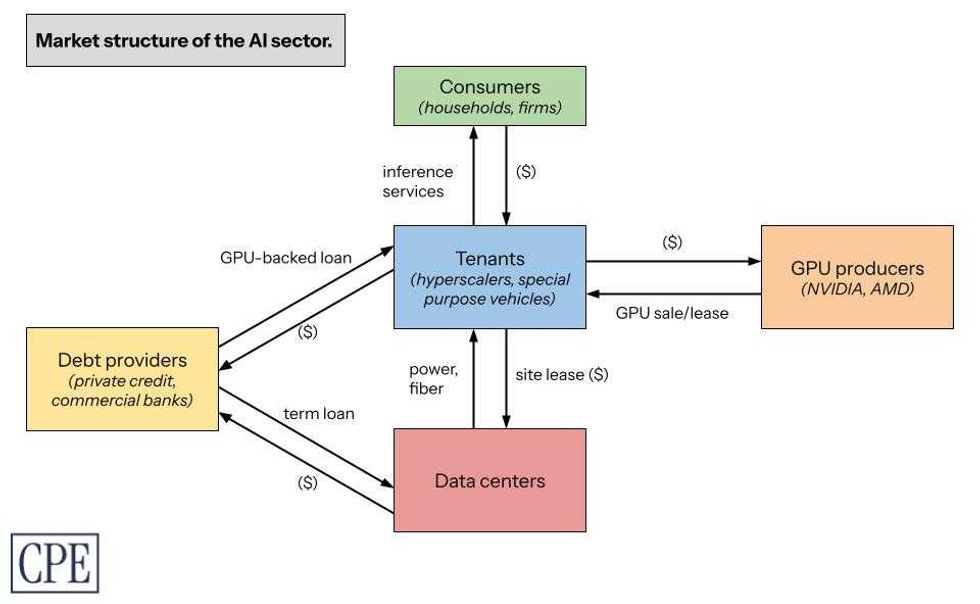

An analysis of the capital structure of the AI boom suggests that policymakers should be more concerned about the financial fundamentals of data centers and their tenants, the tech companies that are buoying the economy. My recent report for the Center for Public Enterprise, Bubble or Nothing, maps out how the various market actors in the AI sector interact, connecting the market structure of the AI inference sector to the economics of Nvidia’s graphics processing units (GPUs, the chips that power AI software) and, in turn, to the data center real estate debt market. Spelling out the core financial relationships illuminates where the vulnerabilities lie.

First and foremost: The business model remains unprofitable. The leading AI companies (mostly the leading tech companies, as well as some AI-specific firms such as OpenAI and Anthropic) are all competing with each other to dominate the market for AI inference services such as large language models. None of them is returning a profit on its investments. Back-of-the-envelope math suggests that Meta, Google, Microsoft, and Amazon invested over $560 billion in AI technology and data centers through 2024 and 2025, yet have reported AI revenues of just $35 billion.
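Put as a ratio, those back-of-the-envelope figures come to roughly six cents of reported revenue for every dollar invested:

$$
\frac{\$35\ \text{billion}}{\$560\ \text{billion}} = 0.0625 \approx 6\%
$$

Because the investment figure is a floor ("over $560 billion"), the true ratio is, if anything, a little lower.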

To be sure, many new technology companies remain unprofitable for years, including now-ubiquitous firms like Uber and Amazon. Profits are not the AI sector’s immediate goal; the sector’s high valuations reflect investors’ assumptions about future earnings potential. But while the losses pile up, the market leaders are all vying to maximize the market share of their virtually identical services: a prisoner’s dilemma of sorts that forces down prices even as the cost of providing inference services continues to rise. Together, rising costs, suppressed revenues, and fuzzy measurements of real user demand make for a toxic cocktail, and a reflection of the sector’s inherent uncertainty.

Second: AI companies have a capital investment problem. These are not pure software companies; to provide their inference services, AI companies must all invest in or find ways to access GPUs. In mature industries, capital assets have predictable valuations that their owners can borrow against and use as collateral to invest further in their businesses. Not here: The market value of a GPU is incredibly uncertain and, at least currently, remains suppressed due to the sector’s competitive market structure, the physical deterioration of GPUs at high utilization rates, the unclear trajectory of demand, and the value destruction that comes from Nvidia’s now-yearly release of new high-end GPU models.

The tech industry’s rush to invest in new GPUs means existing GPUs lose market value much faster. Some companies, particularly the vulnerable and debt-saddled “neocloud” companies that buy GPUs to rent their compute capacity to retail and hyperscaler customers, are taking out tens of billions of dollars in loans, backed by the value of their existing GPU stock, to buy new GPUs; the danger of this strategy is obvious. Others, including OpenAI and xAI, having realized that GPUs are not safe to hold on one’s balance sheet, are instead renting them from Oracle and Nvidia, respectively.

To paper over the valuation uncertainty of the GPUs they do own, all the hyperscalers have changed their accounting assumptions for GPU valuations over the past few years, extending the assumed useful lives of their hardware to minimize their annual reported depreciation expenses. Some financial analysts don’t buy it: Last year, Barclays analysts judged GPU depreciation risky enough to merit marking down their earnings estimates for Alphabet (Google’s parent company), Microsoft, and Meta by as much as 10%, arguing that consensus modeling was severely underestimating the write-offs required.
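A minimal sketch of why a longer assumed useful life shrinks reported depreciation, using simple straight-line depreciation. The $30 billion fleet cost, zero salvage value, and three- and six-year lives are hypothetical numbers chosen for illustration, not figures from any company's filings, and the function names are made up for this example.

```python
def annual_depreciation(cost: float, salvage: float, useful_life_years: int) -> float:
    """Straight-line depreciation: (cost - salvage) spread evenly over the useful life."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return (cost - salvage) / useful_life_years


def book_value(cost: float, salvage: float, useful_life_years: int, years_elapsed: int) -> float:
    """Carrying value after `years_elapsed` years; bottoms out at the salvage value."""
    years = min(max(years_elapsed, 0), useful_life_years)
    return cost - annual_depreciation(cost, salvage, useful_life_years) * years


# Hypothetical inputs, for illustration only.
FLEET_COST = 30e9  # $30 billion of GPUs (assumed)
SALVAGE = 0.0      # assume no resale value at end of life

for life in (3, 6):
    expense = annual_depreciation(FLEET_COST, SALVAGE, life)
    print(f"{life}-year life: ${expense / 1e9:.1f}B depreciation per year")

# If the chips are economically obsolete after 3 years but are booked over 6,
# this is the value still carried on the balance sheet at that point.
carried = book_value(FLEET_COST, SALVAGE, 6, 3)
print(f"Carried after 3 years on a 6-year schedule: ${carried / 1e9:.1f}B")
```

Doubling the assumed life halves the annual expense ($10.0B versus $5.0B here), but if the hardware is effectively worthless after three years, half the original cost is still on the books. A gap like that is the kind of deferred write-off the analysts were warning about.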

Under these market dynamics, the booming demand for high-end chips looks less like a reflection of healthy growth for the tech sector and more like a scramble for high-value collateral to maintain market position among a set of firms with limited product differentiation. If high demand projections for AI technologies come true, collateral ostensibly depreciates at a manageable pace as older GPUs retain their marketable value over their useful life — but otherwise, this combination of structurally compressed profits and rapidly depreciating collateral is evidence of a snake eating its own tail.

All of these hyperscalers are tenants within data centers. Their lack of cash flow or good collateral should have their landlords worried about “tenant churn,” given the risk that many data center tenants will have to undertake multiple cycles of expensive capital expenditure on GPUs and network infrastructure within a single lease term. Data center developers take out construction (or “mini-perm”) loans of four to six years and refinance them into longer-term permanent loans, which can then be packaged into asset-backed and commercial mortgage-backed securities and sold to a wider pool of institutional investors and banks. Broken leases and tenant vacancies threaten the long-term solvency of the leading data center developers (companies like Equinix and Digital Realty), as well as the livelihoods of the construction contractors and electricians they hire to build their facilities and manage their energy resources.
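To make the tenant-churn risk concrete, here is a minimal sketch that counts how many GPU fleets a tenant would have to buy over a single lease if it replaced its hardware at the end of each useful life. The 15-year term matches the Oracle lease mentioned in this article; the GPU lifetimes are hypothetical, and replacing hardware strictly on schedule is a simplifying assumption.

```python
import math


def gpu_fleet_purchases(lease_term_years: float, gpu_life_years: float) -> int:
    """Number of GPU fleets bought over one lease, assuming (hypothetically)
    that the tenant replaces all hardware at the end of every useful life."""
    if gpu_life_years <= 0:
        raise ValueError("gpu_life_years must be positive")
    # The initial purchase plus each replacement, rounded up for a partial final cycle.
    return math.ceil(lease_term_years / gpu_life_years)


LEASE_TERM = 15  # years, matching the 15-year Oracle lease cited in the text
for life in (3, 5, 6):  # hypothetical GPU useful lives, in years
    print(f"{life}-year GPUs: {gpu_fleet_purchases(LEASE_TERM, life)} fleet purchases per lease")
```

Under these assumptions, a three-year hardware life means five separate fleet purchases inside one lease, each a large capital expenditure the tenant must fund before the landlord's rent is secure.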

Much ink has already been spilled on how the hyperscalers are “roundabouting” each other, or engaging in circular financing: They are making billions of dollars of long-term purchase commitments, equity investments, and project co-development agreements with one another. OpenAI, Oracle, CoreWeave, and Nvidia are at the center of this web. Nvidia has invested $100 billion in OpenAI, to be repaid over time through OpenAI’s lease of Nvidia GPUs. Oracle is spending $40 billion on Nvidia GPUs to power a data center it has leased for 15 years to support OpenAI, for which OpenAI is paying Oracle $300 billion over the next five years. OpenAI is paying CoreWeave over the next five years to rent its Nvidia GPUs; the contract is valued at $11.9 billion, and OpenAI has committed to spending at least $4 billion through April 2029. OpenAI already has a $350 million equity stake in CoreWeave. Nvidia has committed to buying CoreWeave’s unsold cloud computing capacity through 2032 for $6.3 billion, having already taken a 7% stake in CoreWeave when the latter went public. If you’re feeling dizzy, count yourself lucky: These deals represent only a fraction of the available examples of circular financing.

These companies are all betting on each other’s growth; their growth projections and purchase commitments are all dependent on their peers’ growth projections and purchase commitments. Optimistically, this roundabouting represents a kind of “risk mutualism,” which, at least for now, ends up supporting greater capital expenditures. Pessimistically, roundabouting is a way for these companies to pay each other for goods and services in any way except cash: shares, warrants, purchase commitments, token reservations, backstop commitments, and accounts receivable, but not U.S. dollars. The second any one of these companies decides it wants cash rather than a commitment is when the music stops. Chances are, that company needs cash to pay a commitment of its own, likely involving a lender.

Lenders are the final piece of the puzzle. Contrary to the notion that cash-rich hyperscalers can finance their own data center buildout, there has been a record volume of debt issuance this year from companies such as Oracle and CoreWeave, as well as private credit giants like Blue Owl and Apollo, which are lending into the boom. The debt may not go directly onto hyperscalers’ balance sheets, but their purchase commitments are the collateral against which data center developers, neocloud companies like CoreWeave, and private credit firms raise capital. While debt is not inherently something to shy away from (it’s how infrastructure gets built), it’s worth raising eyebrows at the role private credit firms are playing at the center of this revenue-free investment boom. They are exposed to GPU financing and to data center financing, though not to the GPU producers themselves. They have capped upside and unlimited downside. If they stop lending, the rest of the sector’s risks become far more acute.

A market correction starts when any one of the AI companies can’t scrounge up the cash to meet its liabilities and can no longer keep borrowing money to delay paying for its leases and its debts. A sudden stop in lending to any of these companies would be a big deal ― it would force AI companies to sell their assets, particularly GPUs, into a potentially adverse market in order to meet refinancing deadlines. A fire sale of GPUs hurts not just the long-term earnings potential of the AI companies themselves, but also producers such as Nvidia and AMD, since even they would be selling their GPUs into a soft market.

For the tech industry, the likely outcome of a market correction is consolidation. Widespread defaults among AI-related businesses and special purpose vehicles would leave capital assets like GPUs and energy technologies like supercapacitors stranded, losing their market value in the absence of demand and becoming perfect targets for a rollup. Indeed, it stands to reason that the tech giants’ dominance over the cloud and web services sectors, not to mention advertising, will allow them to continue leading the market. They could regain monopolistic control over the remaining consumer demand in the AI services sector; their access to more certain cash flows would ease their leverage constraints over the longer term as the economy recovers.

A market correction, then, is hardly the end of the tech industry ― but it still leaves a lot of data center investments stranded. What does that mean for the energy buildout that data centers are directly and indirectly financing?

A market correction would likely compel vertically integrated utilities to cancel plans to develop new combined-cycle gas turbines and expensive clean firm resources such as nuclear energy. Developers on wholesale markets have it worse: It’s not clear how new and expensive firm resources would compete if demand shrinks. Grid managers would call on more expensive units less frequently, constraining the revenue-generating potential of those generators relative to the resources that can meet marginal load more cheaply, namely solar, storage, peaker gas, and demand-response systems. Combined-cycle gas turbines co-located with data centers might be stranded; at the very least, they wouldn’t be used very often. (Peaker gas plants, used to manage load fluctuation, might still get built over the medium term.) And the flight to quality and flexibility would consign coal power back to its own ash heaps. Ultimately, a market correction does not change the broader trend toward electrification.

A market correction that stabilizes the data center investment trajectory would make it easier for utilities to conduct integrated resource planning. But it would not necessarily make it easier for grid planners to manage their interconnection queues: When phantom projects drop out of the queue, grid planners have to redo all their studies. Regardless of the health of the investment boom, we still need to reform our grid interconnection processes.

The biggest risk is that ratepayers will be on the hook for assets that sit underutilized in the absence of tech companies’ large load requirements, especially ratepayers served by utilities that might be building generation ahead of committed contracts with large load customers like data center developers. The energy assets they build might remain useful for grid stability and could still participate in capacity markets. But generation assets built close to data center sites to serve those sites cheaply might not be able to serve the broader energy grid cost-efficiently, because of the higher transmission costs incurred in delivering power to more distant loads.

These energy projects need not be albatrosses.

Many of the data centers being planned are in the process of securing permits and grid interconnection rights. Those interconnection rights are scarce and valuable; if a data center gets stranded, policymakers should consider purchasing those rights and incentivizing new businesses or manufacturing industries to build on that land and take advantage of those rights. Doing so would provide offtake for nearby energy assets and avoid displacing their costs onto other ratepayers. That being said, new users of that land may not be able to pay anywhere near as much as hyperscalers could for interconnection or for power. Policymakers seeking to capture value from stranded interconnection points must ensure that new projects pencil out at a lower price point.

Policymakers should also consider backstopping the development of critical and innovative energy projects and the firms contracted to build them. I mean this in the most expansive way possible: Policymakers should not just backstop the completion of the solar and storage assets built to serve new load, but also provide exigent purchase guarantees to the firms that are prototyping the flow batteries, supercapacitors, cooling systems, and uninterruptible power systems that data center developers are increasingly interested in. Without these interventions, a market correction would destroy the value of many of those projects and the earnings potential of their developers, to say nothing of arresting progress on incredibly promising and commercializable technologies.

Policymakers can capture long-term value for the taxpayer by investing in these distressed projects and developers. The New York Power Authority has already done this, taking ownership of and backstopping the development of over 7 gigawatts of energy projects, most of which were at risk of being abandoned by their private sponsors.

The market might not immediately welcome risky bets like these. It is unclear, for instance, what industries could use the interconnection or energy provided to a stranded gigawatt-scale data center. Some of the more promising options, such as aluminum or green steel, do not have a viable domestic market. Policy uncertainty, tariffs, and tax credit changes in the One Big Beautiful Bill Act have all suppressed the growth of clean manufacturing and metals refining industries like these. The rest of the economy is also deteriorating. That the data center boom is threatened, at its core, by a lack of consumer demand and the unstable investment pathways that result is itself an ironic miniature of the U.S. economy as a whole.

As analysts at Employ America put it, “The losses in a [tech sector] bust will simply be too large and swift to be neatly offset by an imminent and symmetric boom elsewhere. Even as housing and consumer durables ultimately did well following the bust of the 90s tech boom, there was a one- to two-year lag, as it took time for long-term rates to fall and investors to shift their focus.” This is the issue with having only one growth sector in the economy. And without a more holistic industrial policy, we cannot spur any others.

Questions like these ― questions about what comes next ― suggest that the messy details of data center project finance should not be the sole purview of investors. After all, our exposure to the sector only grows more concentrated by the day. More precisely mapping out how capital flows through the sector should help financial policymakers and industrial policy thinkers understand the risks of a market correction. Political leaders should be prepared to tackle the downside distributional challenges raised by the instability of this data center boom ― challenges to consumer wealth, public budgets, and our energy system.

This sparkling sector is no replacement for industrial policy and macroeconomic investment conditions that create broad-based sources of demand growth and prosperity. But in their absence, policymakers can still treat the challenge of a market correction as an opportunity to think ahead about the nation’s industrial future.


Catching up with the American Council on Renewable Energy’s Ray Long.

Today’s chat is with Ray Long, CEO of the American Council on Renewable Energy. We first discussed the odds of permitting reform a year and a half ago, for one of the first Q&As in The Fight. Flash forward and we’re still in the same situation, but now also wrestling with added demand for electricity to power data centers. I wanted to talk again about whether he thought the rise of artificial intelligence would increase the odds of some federal deal happening any time soon. The result: a wide-reaching conversation about the future of the electric grid, the struggles to win community buy-in, and the sclerotic nature of the U.S. Congress.

The following conversation was lightly edited for clarity.

Do you think the buildout of our energy grid is entwined with the nation’s data center boom?

When you look at what we need over the next four years — 166 gigawatts, 15 times the peak load of New York City — that’s a lot of power to build. Roughly half of that is for data center and AI growth.

There are five things we can build in the next four years at scale to address that collective amount. First, it’s transmission — the transmission buildout will help to get a modern grid to enable power flow to where it’s needed in a much more effective way. That’s the first step because if we just build all that power, the current grid can’t handle it.

Second, there are four supply technologies that can be built: solar, batteries, wind, and natural gas. For all four of those technologies, we know there’s enough equipment available for purchase here in the U.S. to build at volume. And I’ll say this: Natural gas is only about 10% of all those gigawatts because of limited turbine availability from suppliers. You can’t get enough over the next four years. So when I talk about decarbonization, most of what is built to address this issue is zero-carbon resources, renewable energy resources.

If you were to compare the current conversation around data center development to the debate over developing renewable energy in the U.S. — or energy in general — do you see any similarities or differences?

There are always issues with permitting projects. Communities are always going to have concerns about what’s built in their backyards.

What’s new, and your polling shows this, is the level of concern communities have. But here’s the thing: Most of this can be overcome by developers going in, listening to what the needs of the communities are, then responding and addressing those concerns through the permitting process. You can’t do that 100% of the time. But my experience is, when you take that sort of approach, you can overcome a lot of it.

Most of the large data centers are actually doing the things I’m discussing. First, they’re going in and saying, Look, we want to be grid interconnected, because at the end of the day grid connection means the resources we’re bringing to bear are also going to make a stronger grid. Second, they’re investing in power generation sources like the ones I mentioned, and those power sources will be on the grid, so they’ll solve for a community’s increased power demands.

Third, water. They should bring the water solutions. You’re seeing data centers coming in and saying it head on now, that they have closed-loop systems or whatever the solution is. At the end of the day, the communities they’re proposing these in have a real negotiating opportunity to make sure they’re holding the data center developers accountable to the needs of the community.

For a community to say we don’t want it here misses a real opportunity for those communities to get the power they need, the grid they need, and the ability to bring down energy costs.

How is the data center debate affecting permitting reform conversations in Washington, from your perspective?

Permitting reform in the U.S. at the state and federal level has been broken for years. The SunZia transmission project? It took 17 years to permit. Ribbon-cutting is in a week or two and there’s still litigation around it. From a business perspective, it’s just untenable, and it’s a miracle that the project is getting built. Developers need a chance to come in and have their project evaluated. Both the community and the developer should be able to get to a go or no-go in a couple of years on one of these projects.

How is data center growth affecting the permitting reform discussion? It’s a very hot issue right now. I think this is coming to a head in part because the data center issue is so huge: We’ve only got four years to solve for the first really big tranche of power we need, and prices across the board for electricity are escalating. The data center load is a part of the catalyst to get people talking about it [permitting reform].

Do you expect legislating in Congress on permitting reform this year? Anything beyond more conversation?

My hope is that we get a bill. A few weeks ago someone from the administration was quoted as saying they wanted a framework for a bill by the end of May, and it’s June now. We haven’t seen both sides or the administration coalesce around a final product yet.

We’re in a midterm election cycle. Typically it’s very difficult during these cycles to move bills like this. At the same time, with electricity prices increasing and the need to build more, to fix this, I’m very hopeful something will come together. And look at the Senate: You’ve got Republicans and the Democratic ranking members talking about this. These are all good signs.

If everyone’s talking about energy and affordability during this election, isn’t that a good thing for action in the next Congress?

I’ll say this: You’re seeing the catalyst for it right now with prices rising, and almost every grid operator around the country has raised concerns about shortages at some point this year or next year. It’ll hopefully be enough to have policymakers do something about it this year.

Plus more of the week’s biggest development fights.

1. Ohio — This state might just be the most important flashpoint in the national fight over advanced energy and tech infrastructure.

2. Laramie County, Wyoming — The Cowboy State’s capital city is one of the few to reject a data center moratorium. But tech companies, don’t get your hopes up too high.

3. Los Angeles County, California — Elsewhere, we saw the first city in California vote to ban data centers … once and for all.

4. Charles County, Maryland — This populous county south of D.C. is now out of reach for data center development.

5. Baldwin County, Alabama — There will be a vote at the end of this month on whether to ban solar in the county whose opposition nearly prompted a statewide moratorium on development.

6. Hopkins County, Texas — I have one last update related to a large data center legal fight we’ve been covering closely.

The national AI data center moratorium has momentum.

As I’ve been documenting for months here at The Fight, data center opposition is surging across the country. Our latest Heatmap Pro poll puts some very hard numbers behind that picture. More than 7 in 10 Americans oppose new data center construction near where they live, up from just over 4 in 10 last fall. Part of what’s driving that opposition: More than half of respondents hold data centers largely responsible for rising electricity prices, and nearly half are pessimistic about the effect artificial intelligence will have on their lives.

Here’s yet another data point from our poll that underscores the intensity of the opposition: A majority of Americans now say they support a nationwide halt to new data center construction.

Broken down by demographics, support for a national AI data center moratorium splits predictably along lines of age and gender: Younger people are more likely to back the idea, as are women. Americans are just as likely to back moratoria in their own states as they are a national stop to development, suggesting the industry’s public relations problem runs deep.

The notion of an AI data center moratorium comes from the political left, specifically Senator Bernie Sanders and Representative Alexandria Ocasio-Cortez, who introduced the first bill to enact such a pause earlier this year. Yet its appeal straddles political lines. Among Democrats, 66% said they’d back a national moratorium, compared to just 19% opposed; in the Republican camp, 55% said they backed the idea, compared to 28% opposed. Independents echoed those views as well, with answers falling neatly in between the two sides (58% support, 21% oppose).

The surge in support for a country-wide stop to new data centers stands in contrast to the more hesitant attitude politicians of all stripes have shown toward the opposition movement. That includes the White House, which had embraced a deregulatory approach to fostering AI until abruptly changing course this week to seek early access to new models.

A good example of this political distance can be found in Missouri, where Republican Governor Mike Kehoe last month proudly declared that Google was investing $15 billion in a hyperscale data center project in the rural town of New Florence in Montgomery County. After Kehoe’s announcement, the White House’s rapid response media account joined in on celebrating this economic investment, touting the potential for “thousands of construction jobs and hundreds of permanent jobs” from the Google project.

Among the hoi polloi, however, discontent was rife. This was actually the second large data center project in New Florence, and locals in and around this town of fewer than 1,000 residents have been busy suing the county to halt a separate Amazon data center proposed directly across from Google’s project.

Montgomery County is incredibly conservative politically and “has voted red since I can’t even remember,” Sabrina Cope, an organizer with opposition group Preserve Montgomery County, told me over the phone. “They’re turning up their nose at the White House’s support for these kinds of projects. This isn’t an issue solely Democrats or Republicans are upset about.” (The White House did not respond to a request for comment.)

The political mismatch here is also bipartisan.

In New York, state legislators on Thursday passed legislation to enact a one-year pause on new data center permitting. The bill now goes to the desk of New York’s governor, Democrat Kathy Hochul, who has signaled she’s against a broad moratorium. “This is a local decision for municipalities,” Hochul told reporters last month, according to a Politico report. “It’s not a statewide approach, necessarily, but it’s something I’m looking at intensely.”

The scene in the Empire State feels eerily similar to what happened in the Pine Tree State when Maine Democrats sought to enact a moratorium, only to be stymied by a veto from Governor Janet Mills, also a Democrat. Should Hochul spurn the state legislature, she would defy what our polling shows is overwhelming public opinion.

Our poll also found rural voters are almost 10 points more likely than suburban and urban denizens to support a moratorium on new data centers. Given how often land use conflicts occur in upstate New York, where voters skew Republican, the yeoman’s calculus in both parties might lead more politicians to support temporarily stopping or stalling data center industry growth.

In Illinois, we’re starting to see policy align at least a little more closely with what Democratic voters want. On Friday, Governor J.B. Pritzker announced he would pause data center tax breaks and ask the state legislature to enact a new statute governing the industry’s water and energy use as well as deployment of non-disclosure agreements. If Illinois is a harbinger of things to come in blue states, we’ll see more action like this.