
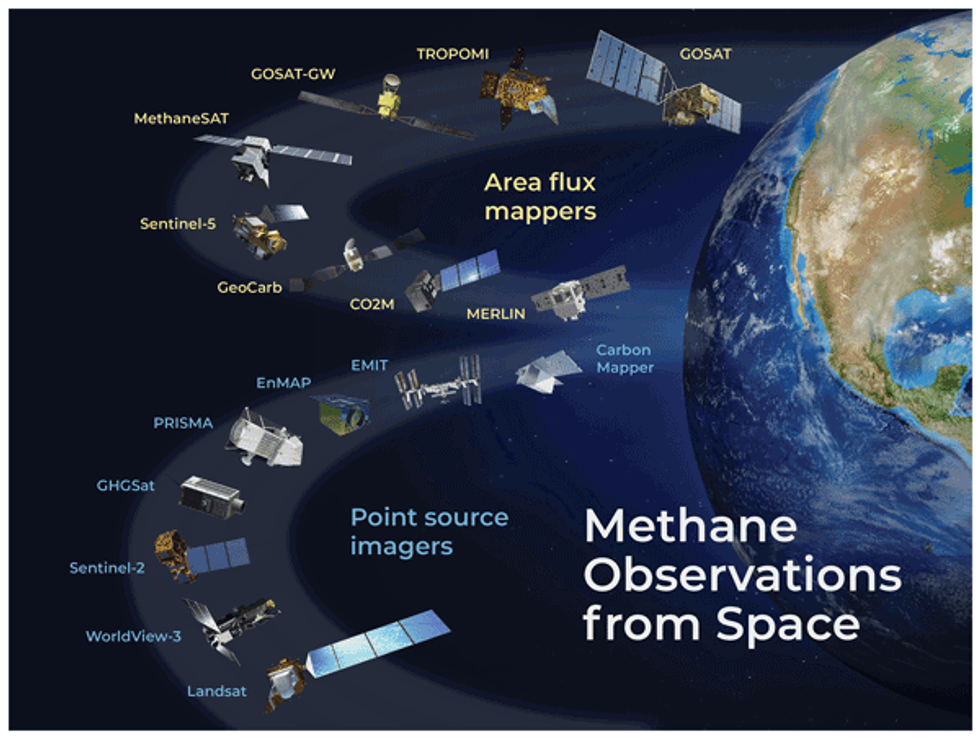

Over a dozen methane satellites are now circling the Earth — and more are on the way.

On Monday afternoon, a satellite the size of a washing machine hitched a ride on a SpaceX rocket and was launched into orbit. MethaneSAT, as the new satellite is called, is the latest to join more than a dozen other instruments currently circling the Earth monitoring emissions of the ultra-powerful greenhouse gas methane. But it won’t be the last. Over the next several months, at least two additional methane-detecting satellites from the U.S. and Japan are scheduled to join the fleet.

There’s a joke among scientists that there are so many methane-detecting satellites in space that they are reducing global warming — not just by providing essential data about emissions, but by blocking radiation from the sun.

So why do we keep launching more?

Despite the small army of probes in orbit, and an increasingly large fleet of methane-detecting planes and drones closer to the ground, our ability to identify where methane is leaking into the atmosphere is still far too limited. As with carbon dioxide, the sources of methane around the world are numerous and diffuse. They can be natural, like wetlands and oceans, or man-made, like decomposing manure on farms, rotting waste in landfills, and leaks from oil and gas operations.

Scientists say MethaneSAT will help answer some big open questions about methane, including which sources are driving the most emissions and, consequently, how best to tackle climate change. But even then, some say we’ll need to launch even more instruments into space to really get to the bottom of it all.

Satellite monitoring dedicated to greenhouse gases began in 2009 with the launch of the Greenhouse Gases Observing Satellite, or GOSAT, by the Japan Aerospace Exploration Agency. Previously, most of the world’s methane detectors were on the ground in North America. GOSAT enabled scientists to develop a more geographically diverse understanding of the major sources of methane to the atmosphere.

Soon after, the Environmental Defense Fund, which led the development of MethaneSAT, began campaigning for better data on methane emissions. Through its own on-the-ground measurements, the group found that the Environmental Protection Agency’s estimates of leaks from U.S. oil and gas operations were far too low. EDF took this as a call to action. Because methane has such a strong warming effect but breaks down after about a decade in the atmosphere, curbing methane emissions can slow warming in the near term.

“Some call it the low-hanging fruit,” Steven Hamburg, the chief scientist at EDF leading the MethaneSAT project, said during a press conference on Friday. “I like to call it the fruit lying on the ground. We can really reduce those emissions and we can do it rapidly and see the benefits.”

But in order to do that, we need a much better picture than what GOSAT or other satellites like it can provide.

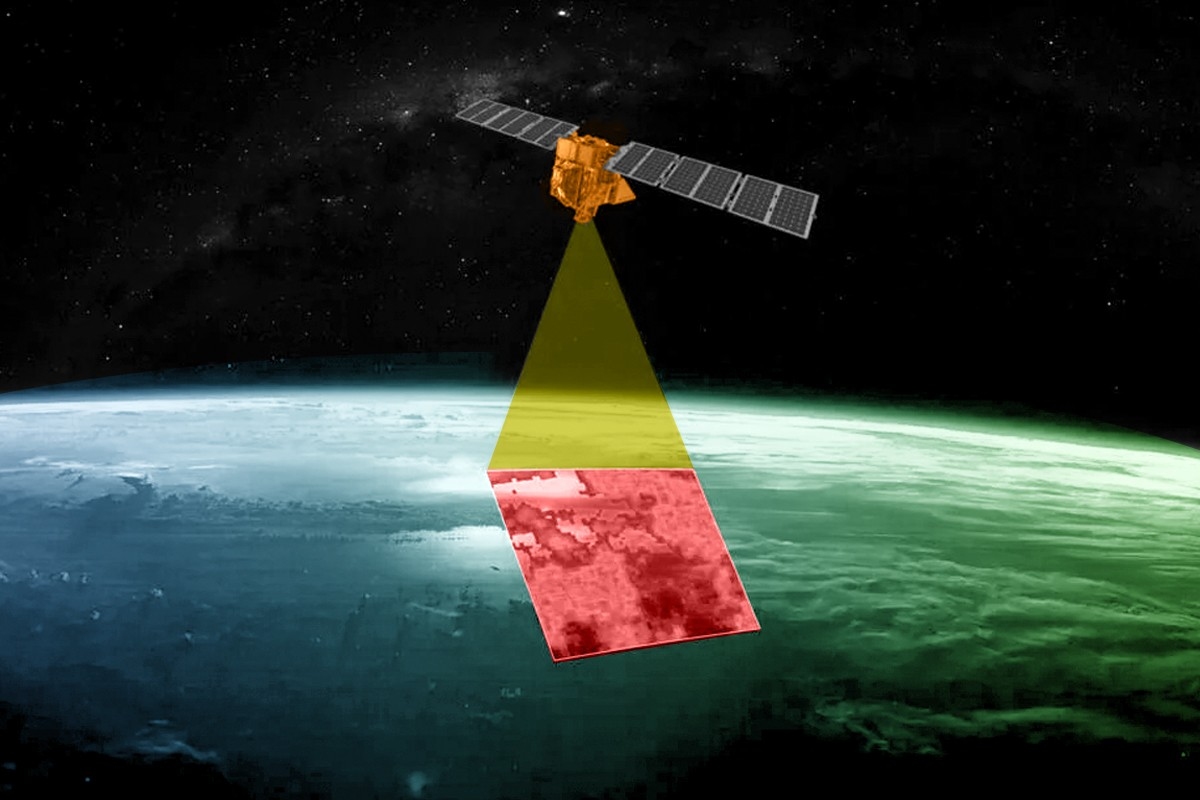

In the years since GOSAT launched, the field of methane monitoring has exploded. Today, there are two broad categories of methane instruments in space. Area flux mappers, like GOSAT, take global snapshots. They can show where methane concentrations are generally higher, and even identify exceptionally large leaks — so-called “ultra-emitters.” But the vast majority of leaks, big and small, are invisible to these instruments. Each pixel in a GOSAT image is 10 kilometers wide. Most of the time, there’s no way to zoom into the picture and see which facilities are responsible.

Point source imagers, on the other hand, take much smaller photos that have much finer resolution, with pixel sizes down to just a few meters wide. That means they provide geographically limited data — they have to be programmed to aim their lenses at very specific targets. But within each image is much more actionable data.

For example, GHGSat, a private company based in Canada, operates a constellation of 12 point-source satellites, each one about the size of a microwave oven. Oil and gas companies and government agencies pay GHGSat to help them identify facilities that are leaking. Jean-Francois Gauthier, the director of business development at GHGSat, told me that each image taken by one of its satellites is 12 kilometers wide, but each pixel is just 25 meters across. A snapshot of the Permian Basin, a major oil and gas producing region in Texas and New Mexico, might contain hundreds of oil and gas wells owned by a multitude of companies, but GHGSat can tell them apart and assign responsibility.

“We’ll see five, 10, 15, 20 different sites emitting at the same time and you can differentiate between them,” said Gauthier. “You can see them very distinctly on the map and be able to say, alright, that’s an unlit flare, and you can tell which company it is, too.” Similarly, GHGSat can look at a sprawling petrochemical complex and identify the exact tank or pipe that has sprung a leak.
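To put those resolution figures in perspective, here is a back-of-the-envelope comparison that uses only the numbers quoted above: a 10-kilometer GOSAT pixel, and a 12-kilometer GHGSat image made of 25-meter pixels. It is a rough sketch that treats pixels and scenes as idealized squares, an assumption that ignores real instrument footprints and viewing geometry; the helper names are illustrative.

```python
# Back-of-the-envelope resolution comparison using figures quoted in the article.
# Assumption: pixels and scenes are idealized squares; real footprints differ.

GOSAT_PIXEL_M = 10_000   # GOSAT pixel width (10 km)
GHGSAT_SCENE_M = 12_000  # GHGSat image width (12 km)
GHGSAT_PIXEL_M = 25      # GHGSat pixel width (25 m)


def pixels_across(scene_m: float, pixel_m: float) -> float:
    """Number of pixels spanning one side of a square scene."""
    return scene_m / pixel_m


def area_ratio(coarse_pixel_m: float, fine_pixel_m: float) -> float:
    """How many fine pixels fit inside one coarse pixel, by area."""
    return (coarse_pixel_m / fine_pixel_m) ** 2


print(pixels_across(GHGSAT_SCENE_M, GHGSAT_PIXEL_M))  # 480.0
print(area_ratio(GOSAT_PIXEL_M, GHGSAT_PIXEL_M))      # 160000.0
```

On these idealized numbers, a single GOSAT pixel covers about as much ground as 160,000 GHGSat pixels, which is why the coarser instrument cannot tell neighboring wells apart.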

But between this extremely wide-angle lens and the many finely tuned instruments pointing at specific targets, there’s a gap. “It might seem like there’s a lot of instruments in space, but we don’t have the kind of coverage that we need yet, believe it or not,” Andrew Thorpe, a research technologist at NASA’s Jet Propulsion Laboratory, told me. He has been working with the nonprofit Carbon Mapper on a new constellation of point source imagers, the first of which is supposed to launch later this year.

The reason why we don’t have enough coverage has to do with the size of the existing images, their resolution, and the amount of time it takes to get them. One of the challenges, Thorpe said, is that it’s very hard to get a continuous picture of any given leak. Oil and gas equipment can spring leaks at random. They can leak continuously or intermittently. If you’re just getting a snapshot every few weeks, you may not be able to tell how long a leak lasted, or you might miss a short but significant plume. Meanwhile, oil and gas fields are also changing on a weekly basis, Joost de Gouw, an atmospheric chemist at the University of Colorado, Boulder, told me. New wells are being drilled in new places — places those point-source imagers may not be looking at.

“There’s a lot of potential to miss emissions because we’re not looking,” he said. “If you combine that with clouds — clouds can obscure a lot of our observations — there are still going to be a lot of times when we’re not actually seeing the methane emissions.”

De Gouw hopes MethaneSAT will help resolve one of the big debates about methane leaks. Between the millions of sites that release small amounts of methane all the time, and the handful of sites that exhale massive plumes infrequently, which is worse? What fraction of the total do those bigger emitters represent?

Paul Palmer, a professor at the University of Edinburgh who studies the Earth’s atmospheric composition, is hopeful that MethaneSAT will help pull together a more comprehensive picture of what’s driving changes in the atmosphere. Around the turn of the century, methane levels pretty much leveled off, he said. But then, around 2007, they started to grow again, and they have since accelerated. Scientists have reached different conclusions about why.

“There’s lots of controversy about what the big drivers are,” Palmer told me. Some think it’s related to oil and gas production increasing. Others — and he’s in this camp — think it’s related to warming wetlands. “Anything that helps us would be great.”

MethaneSAT sits somewhere between the global mappers and point source imagers. It will take larger images than GHGSat, each one 200 kilometers wide, which means it will be able to cover more ground in a single day. Those images will also contain finer detail about leaks than GOSAT’s, but they won’t necessarily be able to pinpoint which facilities the smaller leaks are coming from. And unlike GHGSat’s, MethaneSAT’s data will be freely available to the public.

EDF, which raised $88 million for the project and spent nearly a decade working on it, says that one of MethaneSAT’s main strengths will be to provide much more accurate basin-level emissions estimates. That means it will enable researchers to track the emissions of the entire Permian Basin over time, and compare it with other oil and gas fields in the U.S. and abroad. Many countries and companies are making pledges to reduce their emissions, and MethaneSAT will provide data on a relevant scale that can help track progress, Maryann Sargent, a senior project scientist at Harvard University who has been working with EDF on MethaneSAT, told me.

It could also help the Environmental Protection Agency understand whether its new methane regulations are working. It could help with the development of new standards for natural gas being imported into Europe. At the very least, it will help oil and gas buyers differentiate between products associated with higher or lower methane intensities. It will also enable fossil fuel companies who measure their own methane emissions to compare their performance to regional averages.

MethaneSAT won’t be able to look at every source of methane emissions around the world. The project is limited by how much data it can send back to Earth, so it has to be strategic. Sargent said they are limiting data collection to 30 targets per day, and in the near term, those will mostly be oil and gas producing regions. They aim to map emissions from 80% of global oil and gas production in the first year. The outcome could be revolutionary.

“We can look at the entire sector with high precision and track those emissions, quantify them and track them over time. That’s a first for empirical data for any sector, for any greenhouse gas, full stop,” Hamburg told reporters on Friday.

But this still won’t be enough, said Thorpe of NASA. He wants to see the next generation of instruments start to look more closely at natural sources of emissions, like wetlands. “These types of emissions are really, really important and very poorly understood,” he said. “So I think there’s a heck of a lot of potential to work towards the sectors that have been really hard to do with current technologies.”


Giving up on hourly matching by 2030 doesn’t mean giving up on climate ambition — necessarily.

Microsoft celebrated a “milestone achievement” earlier this year, when it announced that it had successfully matched 100% of its 2025 electricity usage with renewable energy. This past week, however, Bloomberg reported that the company was considering delaying or abandoning its next clean energy target set for 2030.

What comes after achieving 100% renewable energy, you might ask? What Microsoft did in 2025 was tally its annual electricity consumption and purchase an equal amount of solar and wind power. By 2030, the company aspired to match every kilowatt-hour it consumes with carbon-free electricity, hour by hour. That means finding clean power for all the hours when the sun isn’t shining and the wind isn’t blowing.
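The gap between those two approaches can be sketched in a few lines. The example below uses a hypothetical four-hour load and clean-supply profile (made-up numbers, not Microsoft’s data) and compares the share of consumption matched when clean power is tallied over the whole period with the share matched hour by hour, where surplus clean power in one hour cannot cover a shortfall in another. Real accounting rules are considerably more detailed; the function names are illustrative.

```python
# Sketch: volumetric (annual-style) matching vs. hourly matching.
# Hypothetical profiles in MWh per hour; real accounting rules are more detailed.


def volumetric_match(load: list[float], clean: list[float]) -> float:
    """Share of load matched when clean supply is totaled over the whole period."""
    return min(sum(clean) / sum(load), 1.0)


def hourly_match(load: list[float], clean: list[float]) -> float:
    """Share of load matched when each hour must be covered by that hour's supply."""
    matched = sum(min(used, supplied) for used, supplied in zip(load, clean))
    return matched / sum(load)


# Hypothetical four-hour window: flat data center load, solar-heavy supply.
load = [10.0, 10.0, 10.0, 10.0]
clean = [0.0, 20.0, 20.0, 0.0]

print(volumetric_match(load, clean))  # 1.0: "100% renewable" when tallied in bulk
print(hourly_match(load, clean))      # 0.5: only half the load is matched in the hour it is used
```

The same purchases look complete under volumetric matching but cover only half the load once timing matters, which is why hourly matching pushes buyers toward around-the-clock clean resources.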

The news that Microsoft is revisiting this goal could be read as the beginning of the end of corporate climate ambition. Microsoft has long been a pioneer on that front, setting increasingly difficult goals and then doing the groundwork to help others follow in its footsteps. Now it appears to be accepting defeat. The report comes just weeks after my colleague Robinson Meyer revealed that the company is also pausing its industry-leading carbon removal purchasing program.

Delaying the clean energy target and abandoning it, the two options presented in the Bloomberg story, represent quite different scenarios, however.

“There’s going to be a big difference between them saying, We’re going to keep trying as hard as we can to go as far as we can, but acknowledge we may not hit it, versus saying, Well, we can’t hit this extremely ambitious goal we set for ourselves, therefore we’re just giving up on the overall mission,” Wilson Ricks, a manager in Clean Air Task Force’s electricity program, told me.

The goal was always going to be difficult, if not impossible, for Microsoft to hit, Ricks said. Yes, it has gotten tougher as Microsoft’s electricity usage has surged with the rise of artificial intelligence, as Congress has killed subsidies for clean energy, and as the Trump administration has done its best to stall wind and solar development. But some of the technologies likely needed to achieve the goal, such as advanced nuclear and geothermal power plants, have yet to be commercially deployed, let alone reach meaningful scale, and probably won’t be by 2030, especially not across all the regions where Microsoft operates.

Nonetheless, some clean energy advocates (including Ricks) argue that keeping hourly matching as a north star is paramount because it helps put the world on the path to fully decarbonized electric grids.

Google was the first to introduce a 24/7 carbon-free energy strategy in 2020, and for a moment, it seemed that the rest of the corporate world would follow. A handful of companies joined a coalition to support the goal, but to date, I’m aware of just two — Microsoft and the data storage company Iron Mountain — that have followed Google in committing to achieving it.

Most companies approach their clean energy claims with considerably less precision. The norm is to purchase “unbundled” renewable energy certificates, or RECs: tradeable vouchers that say a certain amount of renewable energy has been generated somewhere, at some point, and that the certificate owner can lay claim to it. Many simply buy enough RECs to cover their annual electricity usage and call themselves “powered by 100% renewable energy.”

There’s a spectrum of quality in the RECs available for purchase, but the market is flooded with cheap, relatively meaningless certificates. A company that operates in a coal-heavy region like Indiana can buy RECs from a wind farm in Texas that was built a decade ago, which won’t do anything to change the makeup of the grid in either place.

Today, the gold standard for companies with capital to throw around is instead to sign long-term contracts directly with wind and solar developers, known as power purchase agreements. That doesn’t mean the wind and solar farms send power to the companies directly. But these contracts are more likely to bring new projects onto the grid, because their guaranteed future revenues help developers secure the financing they need to build.

Microsoft started buying unbundled RECs more than a decade ago, and in 2014, it reported it had matched all of its global electricity usage. In 2016, the company began setting goals for direct procurement of renewable energy. In 2020, it pledged to achieve 100% renewable energy this way by 2025, but it wasn’t going to sign just any wind or solar agreements. It aimed to pursue contracts with projects that were in the same regions as the company’s operations and that wouldn’t have been built without the company’s support. “Where and how you buy matters,” it wrote in its 2020 sustainability report. “The closer the new wind or solar farm is to your data center, the more likely it is those zero carbon electrons are powering it.”

In 2021, Microsoft upped the ante again by establishing its 2030 hourly matching target, which it referred to as “100/100/0” — 100% of electrons, 100% of the time, zero-carbon energy.

Microsoft has never publicly reported its progress toward the 2030 goal. The company’s enthusiasm for the target has also appeared to wane. In 2020, before Microsoft even made the 100/100/0 commitment, it touted a solution it developed to track and match renewable energy generation and consumption on an hourly basis. In the years since, it has led its peers in investments in round-the-clock nuclear power, even signing a 20-year power purchase agreement with Constellation Energy to bring the shuttered Three Mile Island nuclear plant in Pennsylvania back online.

But Microsoft has stopped publicizing the goal in blog posts and press releases. It went unmentioned in the recent announcement about the 2025 renewable energy achievement, for instance. And a section in the company’s annual sustainability report listing its climate targets that had previously advertised the 2030 goal as “Replacing with 100/100/0 carbon-free energy” was re-written in 2025 as “Expanding carbon-free electricity,” fuzzier rhetoric that now reads as a harbinger of a softer approach.

Microsoft did not respond to questions about its progress toward the 2030 target. In an emailed statement, a spokesperson emphasized the company’s commitment to maintaining its annual matching goal, the one achieved in 2025. No doubt that will take far more investment in the years to come, now that the company is gobbling up so much more electricity for data centers, some of it directly from natural gas plants.

Microsoft also shared a statement from Melanie Nakagawa, its chief sustainability officer, emphasizing the company’s commitment to become carbon negative. “At times we may make adjustments to our approach toward our sustainability goals,” she said. “Any adjustments we make are part of our disciplined approach—not a change in our long-term ambition.”

Even if Microsoft axes its hourly matching target, the company might have to start reporting its clean electricity usage on an hourly basis anyway. The Greenhouse Gas Protocol, a nonprofit that sets standards for how companies should calculate their emissions, is currently considering adopting an hourly accounting requirement. While the protocol’s standards are voluntary, companies almost uniformly follow them, and they will soon become mandatory in much of the world, as governments in California and Europe plan to integrate them into corporate disclosure rules.

The accounting rule change is highly controversial, with many companies arguing that it will deter them from investing in clean energy altogether, since their purchases won’t look as good on paper. “I don’t think anybody is debating having rules and guidelines around how you do more narrow matching, we should have that,” Michael Leggett, the co-founder and chief product officer for Ever.Green, a company that sells high-impact RECs, told me. “I think the debate has largely been around, is that required?”

Leggett said he could see how Microsoft’s pullback could be twisted to support either side. Proponents of the hourly accounting method will say, “Aha! See? This is why we have to require it.” Opponents will say, “See, even Microsoft can’t do it, so how are you going to require all these other companies to do it?”

I spoke to Alex Piper, the head of U.S. policy and markets at EnergyTag, a nonprofit that advocates for reforms to enable 24/7 clean energy, who saw the news as vindicating.

“What we’re seeing right now is many of the hyperscale technology companies look to the fastest path to power, and whether it is or not, some of them are turning to gas as that solution,” he told me. Piper argued that companies are choosing natural gas in part because they can get away with clean energy claims under the protocol’s existing rules. “The proposed rules for the greenhouse gas protocol would require those companies to at least be transparent.”

But Microsoft walking back its hourly matching goal does not have to mean that it’s walking back its climate ambition. It’s possible for companies to achieve significant emissions reductions by focusing their clean energy purchases on the places where wind and solar will do the most to displace fossil fuels, rather than worrying about matching every hour. For a company that operates in California, for example, supporting the addition of solar power to a coal-heavy grid — even if it’s in a different part of the country or the world — will do more, faster, than helping to build solar locally or waiting for around-the-clock resources such as geothermal power to come online.

Critics of hourly accounting argue that it doesn’t give companies credit for this kind of approach. “What I would love to have happen is anything to incentivize, recognize, and reward companies signing 20-year contracts that enable new projects coming online,” Leggett said of the Greenhouse Gas Protocol’s forthcoming rule change.

Ricks, of Clean Air Task Force, rejects the idea that an hourly accounting requirement would deter these kinds of deals. “That doesn’t mean that they can’t report any other set of numbers they want to,” he said. “Many companies do report things that aren’t currently recognized in the Greenhouse Gas Protocol.”

Microsoft is a prime example. The company includes two measures of its renewable energy usage in its annual reports: “percentage of renewable electricity,” which includes the unbundled RECs Microsoft has continued to buy over the years, and “percentage of direct renewable electricity,” which tracks power purchase agreements and the renewable portion of the grid mix where its facilities are located. The former uses the Greenhouse Gas Protocol’s current accounting method, under which Microsoft says it has hit 100% every year since 2014. But the latter is the company’s own bespoke calculation.

The company’s 2025 feat was based on this in-house methodology, and it marks the first time Microsoft has announced to the world that it used 100% renewable energy. It never previously made such claims about its REC purchases, as far as I can tell. In other words, Microsoft’s standards for what it publicizes are far more rigorous than what the Greenhouse Gas Protocol requires.

Regardless of what the protocol decides, it will determine only what companies must report. It won’t prevent them from offering up their own, additional metrics of success.

PJM Interconnection has some ideas, as does the state of New Jersey.

We’ve already talked this week about Pennsylvania asking whether the modern “regulatory compact,” which grants utilities monopoly geographical franchises and regulated returns from their capital investments, is still suitable in this era of rising prices and data-center-driven load growth.

Now America’s biggest electricity market and one of that market’s biggest states are considering far-reaching, fundamental reforms that could change how electricity infrastructure is planned and paid for across a region of more than 65 million Americans.

New Jersey Governor Mikie Sherrill anchored her 2025 campaign on electricity prices, and for good reason — in the past four years, electricity prices in the state have gone up 48%, according to Heatmap and MIT’s Electricity Price Hub, while average bills have risen from $83 per month to $130. On her first day in office, Sherrill issued two executive orders acting on that promise, directing the state to make funds available to freeze rates and declaring a state of emergency to ease the way to building more generation.

Included in that first order was a review of utility business models to be carried out by state regulators. What that review will entail is now coming into focus.

On Wednesday, the New Jersey Board of Public Utilities issued a statement announcing that it will look specifically at “whether New Jersey’s century-old utility business model — one that rewards electric distribution companies (EDCs) for capital spending even when cheaper alternatives exist — should be replaced with a framework tied to performance, affordability, and long-term cost stability.” In case anyone was still uncertain about what the outcome of that study might be, the board added that it is “expected to drive the most significant restructuring of utility regulation in New Jersey in decades.”

The current system, the board’s president Christine Guhl-Savoy said at a hearing Thursday, “creates a structural incentive to favor capital intensive solutions, even when lower-cost, non-wires, or demand-side alternatives may be available.”

This structure, she said, could help explain why “over the past decade, electric delivery charges in New Jersey have risen steadily.” Within the service territory of PSEG, one of the four major New Jersey utilities, distribution charges alone have risen from $19.24 per month in January 2020 (as far back as the Heatmap-MIT data goes) to $21.84 as of April, while transmission charges have risen from around $20 to just over $29 per month. Many critics of the utility business model point to high levels of local grid spending on distribution as a way that utilities pad their earnings with returns harvested from ratepayers.
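For readers who want the percentages behind those dollar figures, the short calculation below computes the change in each monthly charge quoted in this story. It is a simple sketch: the transmission figures are approximate (“around $20” to “just over $29”), so treat that result as a rough estimate, and the helper name is illustrative.

```python
# Percentage changes computed from the monthly figures quoted in the article.
# The transmission figures are approximate ("around $20" to "just over $29").


def pct_change(before: float, after: float) -> float:
    """Percentage change from before to after, rounded to one decimal place."""
    return round((after - before) / before * 100, 1)


charges = {
    "average New Jersey bill": (83.00, 130.00),
    "PSEG distribution charge": (19.24, 21.84),
    "PSEG transmission charge (approx.)": (20.00, 29.00),
}

for name, (before, after) in charges.items():
    print(f"{name}: {pct_change(before, after)}%")
```

On these numbers, the average bill rose by roughly 57%, PSEG’s distribution charge by about 13.5%, and its transmission charge by about 45%.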

In the system regulators explored at the hearing, new projects would get a more skeptical look, and ratepayer payouts would be determined in part by whether utilities hit predefined service goals. NJBPU executive director Bob Brabston also indicated that the review process would take a close look at utilities’ regulated returns on equity, echoing his neighbor across the Delaware River, Pennsylvania Governor Josh Shapiro, who wrote in a letter to his state’s utilities earlier this week that these returns must be “transparent” and “justifiable,” and no longer be based on “educated guesses.”

“We want to make sure that the actual cost of equity and the returns on equity are close,” Brabston said Thursday. “We don’t want there to be a significant gap between the cost of equity that you all experience and the returns that the agency awards.”

Meanwhile, in Valley Forge, Pennsylvania, the framework within which New Jersey’s utilities exist is coming in for its own examination.

PJM Interconnection — the nation’s largest electricity market, which covers not just Pennsylvania and New Jersey but also part or all of 11 other states — released an almost 70-page paper Wednesday, in which the organization’s president David Mills wrote that “the current situation is not tenable.”

PJM has been the poster child for a host of issues plaguing the electricity markets across the country, including fast-rising prices, a failure to quickly bring on new generation, and an inability to assure the market’s preferred level of reserve reliability. This set of challenges, Mills said in the paper’s introduction, “reflects something more fundamental than a design that needs recalibration.” Instead, PJM must consider “whether the foundational assumptions of the market remain valid – and if not, what a valid set of assumptions would require.”

The problem with the electricity market, he argued, can be solved by more markets. Right now, when prices shoot up, governments intervene with price caps, suppressing the market signal necessary to bring on sufficient generation that would bring down prices.

To replace that system, the paper proposes three possible models. The first, which it calls “Stabilized Markets,” would allow capacity to be procured for several years at a time outside of the current auction system, so that utilities could make sure their basic needs were covered before going into the annual auctions. This would provide long-term security for new investment.

The second path would be a more fundamental reform. This “Differential Reliability” approach would do away with the “shared reliability compact,” under which all loads must be served by the system at all times. Instead, PJM would “develop the operational and commercial framework to explicitly differentiate reliability,” incentivizing approaches like bring-your-own generation or curtailing power for large new sources of demand.

The third path is an “Energy Market Transition,” which might also be called the “Texas option.” Following this path, the capacity market would shrink as a portion of revenues earned by generators, and more revenue would come from real-time or near-real-time electricity sales.

While this path isn’t “full Texas” (ERCOT doesn’t have a capacity market at all), it would mean allowing higher energy prices in real time, a.k.a. “scarcity pricing,” which is arguably the defining feature of the ERCOT system (though even that was scaled back when prices got too high).

“The choices embedded in these paths involve genuine trade-offs, and those trade-offs affect different stakeholders uniquely,” the paper says. If PJM has learned anything in the past few years, it’s that it doesn’t get to make decisions on its own. Those stakeholders will get their say, one way or another.

Big fundraises for Nyobolt and Skeleton Technologies, plus more of the week’s biggest money moves.

Following a quiet week for new deals, the industry is back at it, with capital flowing into some of its most active areas. My colleague Alexander C. Kaufman already told you about one of the more buzzworthy announcements from data center-land in Wednesday’s AM newsletter: Wave energy startup Panthalassa raised $140 million in a round led by Peter Thiel to “perform AI inference computing at sea” using nodes powered by the ocean’s waves.

This week also saw fresh funding for more conventional data center infrastructure, as Nyobolt and Skeleton Technologies both announced later-stage rounds for data center backup power solutions. Meanwhile, it turns out Redwood Materials is not the only company bringing in significant capital for second-life EV battery systems — Moment Energy just raised $40 million to pursue a similar approach. Elsewhere, investors backed an effort to rebuild domestic magnesium production, and, in a glimmer of hope for a sector on the outs, gave a boost to green cement startup Terra CO2.

Cambridge-based startup Nyobolt has become the latest battery company to reach a $1 billion valuation, with its expansion into the data center market helping fuel excitement around its tech. Spun out of University of Cambridge research in 2019, the company develops ultra-fast-charging batteries based on a modified lithium-ion chemistry. Its core innovation is an anode made from niobium tungsten oxide, which Nyobolt says enables its batteries to charge to 80% in less than five minutes, with a cycle life that’s 10 times longer than conventional lithium-ion, all without the risk of fire.

The company has now raised a $60 million Series C, following what it describes as a period of “rapid commercial momentum,” with revenue increasing fivefold year-over-year as customers in the robotics and data center industries piled in. Symbotic, an autonomous robotics company and existing customer, led the latest round. While Symbotic previously relied on supercapacitors to power its robots, Nyobolt says its batteries provide six times more energy capacity in a lighter package, allowing Symbotic’s warehouse robots to work around the clock for retailers like Walgreens, Target, and Kroger.

Now the startup is targeting data center customers too, positioning its tech as a fast-acting fix for the sudden power surges common to large-scale artificial intelligence workloads, as well as a temporary backup power solution for outages. While it has no confirmed domestic data center customers to date, it does have a nonbinding agreement with the Indian state of Rajasthan to deploy over 100 megawatts of off-grid AI data center and power management infrastructure, part of a broader push to expand its presence across the country.

Notably, the press release made no mention of plans to sell its tech to electric vehicle automakers, though this appears to have been a central focus previously. As recently as last summer, executive vice president Ramesh Narasimhan told the BBC that he hoped Nyobolt’s batteries would “transform the experience of owning an EV.” But while its tech does enable extremely fast charging, its underlying chemistry is not optimized for long-range driving. A sports car built to test the company’s batteries had a range of just 155 miles. So like many of its climate tech peers, the company appears to be betting that data centers now represent a more reliable opportunity.

This week brought additional news from another European player aiming to smooth out data center power surges. Estonia-based supercapacitor startup Skeleton Technologies raised $39 million in what it describes as the first close of a pre-IPO funding round, with a U.S. listing planned for next year. Its core tech is built around a “curved graphene” structure, which the company likens to a crumpled sheet of paper with a high surface area. The graphene’s many exposed surfaces and edges allow it to hold more electric charge, which Skeleton says delivers a 72% improvement in energy density.

Like Nyobolt, Skeleton says its tech offers faster response times and longer cycle life. But supercapacitors are a fundamentally different technology than Nyobolt’s modified lithium-ion solution. Though they offer near-instantaneous response times, they store very little energy — just enough to smooth out microsecond power spikes in GPU workloads. Nyobolt’s batteries, by contrast, aim not only to smooth out data center power spikes, but also to deliver about 90 seconds of backup power in the case of an outage, before a generator or other backup source kicks in.

Skeleton is already mass-producing supercapacitors in Germany and delivering to unnamed “major U.S. hyperscalers for AI infrastructure.” It’s also making moves to expand its U.S. footprint ahead of its pending IPO, opening an engineering facility in Houston and aiming to begin domestic manufacturing of AI data center solutions in the first half of this year.

Last year brought a wave of new climate tech coalitions, one of the most ambitious of which is the All Aboard Coalition. This group of venture firms is targeting the investment gap known as the missing middle, which falls between early-stage venture rounds and infrastructure funding. The model is relatively mechanical: When three or more member firms participate in a later-stage round for a company, the coalition automatically coinvests out of its own fund, matching the members’ combined contribution.

The group made its first investment in January, supporting the AI-powered geothermal exploration and development company Zanskar’s Series C round. This week, it announced its second: a $22 million commitment to low-carbon cement startup Terra CO2, bringing the company’s Series B total to $147 million. Cement production accounts for roughly 8% of global emissions, a figure Terra aims to shrink by making so-called “supplementary cementitious materials” (which can partially displace traditional cement in concrete mixes) from abundant silicate rocks. By grinding and thermally processing these rocks into a glassy powder, Terra’s product mimics the properties of conventional cement. The company says it can replace up to 50% of the cement in typical concrete mixes, lowering associated emissions by as much as 70%.

The new funding will help Terra build its first commercial-scale plant in Texas, exactly the type of first-of-a-kind project that the coalition was designed to support. But the scale of the challenge is hard to miss. As noted in ImpactAlpha’s coverage, the coalition has raised just $100 million toward its $300 million fund target, itself a relatively modest sum considering the capital intensity of novel infrastructure projects. Bloomberg previously reported that the group aimed to raise the full amount by the end of October 2025, a shortfall that raises questions about the willingness of LPs to bet on projects at this crucial but capital-intensive juncture.

When I think about repurposing used electric vehicle batteries for stationary storage, I think of battery recycling giant Redwood Materials, which raised a $425 million Series E in January after moving aggressively into this promising market. But while Redwood’s well-established recycling business certainly provides it with the largest pipeline of used batteries, it’s far from the only company pursuing this business model. A smaller player with a largely similar approach underscored that this week, when it announced a $40 million Series B to scale its gigafactory in Texas and expand its facilities in British Columbia.

That’s Moment Energy, which focuses on using second-life EV batteries to power commercial and industrial sites such as data centers, hospitals, and factories. Like Redwood, it relies on proprietary software to aggregate battery packs with myriad chemistries and design specs into coordinated grid-scale systems. What the company sees as its critical differentiator, however, is its safety standards. Moment has achieved UL certification, a key safety benchmark that it says others in the industry have yet to meet.

In a shot at its competitors, the company described itself in a press release as the “only provider proven capable of deploying second-life battery storage systems in the built environment without special dispensations or regulatory loopholes.” While Moment never names names, Redwood’s first commercial-scale system sits on its own private land in an open-air setting, where certification is arguably unnecessary. “What most other second life [battery] companies are now trying to say is, let’s just lobby to make second life UL certification easier, because it is impossible to get UL certification, as it stands,” the company’s CEO, Edward Chiang, told TechCrunch. “But at Moment, we say that’s not true. We got it.”

As I wrote last September, it’s a good time to be a critical minerals startup, because as you may have heard, “critical minerals are the new oil.” These materials sit at the center of modern energy infrastructure (batteries, magnets, photovoltaic cells, and electrical wiring, to name just a few uses), and their supply is concentrated in geopolitically tense regions and subject to extreme price volatility. It also certainly doesn’t hurt that the Trump administration loves them and wants to mine and refine way more of them in the U.S.

The latest beneficiary of this enthusiasm is Magrathea, which this week raised a $24 million Series A to build a magnesium smelter in Arkansas that it says will be the only new one in the U.S. The company has now raised over $100 million in total, including a $28 million grant from the Department of Defense. Its approach relies on an electrolysis-based process that’s able to extract pure magnesium from seawater and brines, which it positions as a cleaner, cheaper alternative to the high-heat, emission-intensive method that China uses to produce most of the world’s magnesium today.

The U.S. military has taken note of this potential new domestic supply. Magrathea’s 2022 seed round coincided with Russia’s invasion of Ukraine, as the military looked to scale domestic defense tech supply chains. Magnesium alloys are often used to help reduce weight in EV components, a benefit equally applicable to military helicopters, drones, and next-generation fighter jets. So while these defense applications represent something of a pivot from the startup’s initial focus, a greener fighter jet is still better than a dirty fighter jet.