You’re out of free articles.

Log in

To continue reading, log in to your account.

Create a Free Account

To unlock more free articles, please create a free account.

Sign In or Create an Account.

By continuing, you agree to the Terms of Service and acknowledge our Privacy Policy

Welcome to Heatmap

Thank you for registering with Heatmap. Climate change is one of the greatest challenges of our lives, a force reshaping our economy, our politics, and our culture. We hope to be your trusted, friendly, and insightful guide to that transformation. Please enjoy your free articles. You can check your profile here .

subscribe to get Unlimited access

Offer for a Heatmap News Unlimited Access subscription; please note that your subscription will renew automatically unless you cancel prior to renewal. Cancellation takes effect at the end of your current billing period. We will let you know in advance of any price changes. Taxes may apply. Offer terms are subject to change.

Subscribe to get unlimited Access

Hey, you are out of free articles but you are only a few clicks away from full access. Subscribe below and take advantage of our introductory offer.

subscribe to get Unlimited access

Offer for a Heatmap News Unlimited Access subscription; please note that your subscription will renew automatically unless you cancel prior to renewal. Cancellation takes effect at the end of your current billing period. We will let you know in advance of any price changes. Taxes may apply. Offer terms are subject to change.

Create Your Account

Please Enter Your Password

Forgot your password?

Please enter the email address you use for your account so we can send you a link to reset your password:

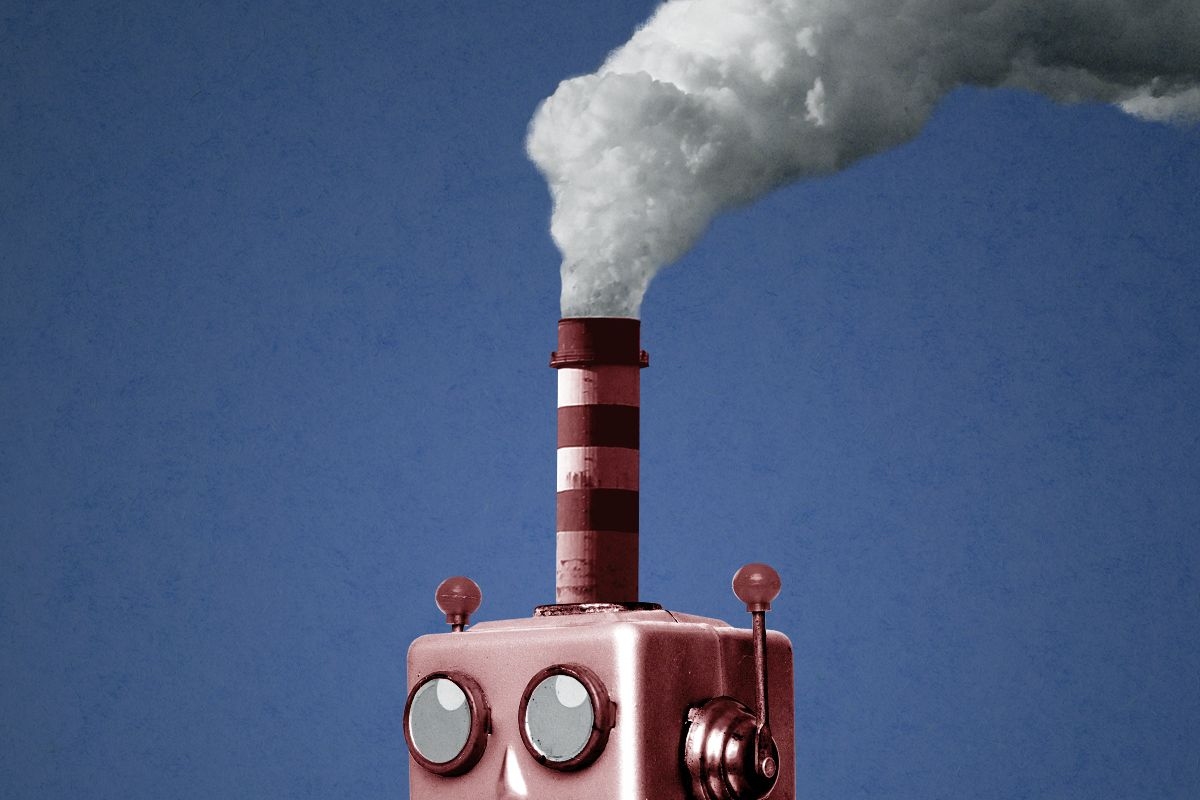

Why the new “reasoning” models might gobble up more electricity — at least in the short term

What happens when artificial intelligence takes some time to think?

OpenAI’s newest models, o1-mini and o1-preview, exhibit more “reasoning” than existing large language models and the interfaces built on them, which spit out answers to prompts almost instantaneously.

Instead, the new models will sometimes “think” for as long as a minute or two. “Through training, they learn to refine their thinking process, try different strategies, and recognize their mistakes,” OpenAI announced in a blog post last week. The company said these models perform better than its existing ones on some tasks, especially those related to math and science. “This is a significant advancement and represents a new level of AI capability,” the company said.

But is it also a significant advancement in energy usage?

In the short run at least, almost certainly, as spending more time “thinking” and generating more text will require more computing power. As Erik Johannes Husom, a researcher at SINTEF Digital, a Norwegian research organization, told me, “It looks like we’re going to get another acceleration of generative AI’s carbon footprint.”

Discussion of energy use and large language models has been dominated by the gargantuan requirements of “training,” essentially running a massive set of equations through a corpus of text from the internet. Training requires hardware on the scale of tens of thousands of graphics processing units and an estimated 50 gigawatt-hours of electricity.

Training GPT-4 cost “more than” $100 million, OpenAI chief executive Sam Altman has said; the next generation of models will likely cost around $1 billion, according to Anthropic chief executive Dario Amodei, a figure that might balloon to $100 billion for later generations, according to Oracle founder Larry Ellison.

While a huge portion of these costs goes to hardware, the energy consumption is considerable as well. (Meta reported that when training its Llama 3 models, power would sometimes fluctuate by “tens of megawatts,” enough to power thousands of homes.) It’s no wonder that Altman has put hundreds of millions of dollars into a fusion company.

But the models are not simply trained; they’re also used out in the world, generating outputs (think of what ChatGPT spits back at you). This process, known as inference, tends to be comparable in energy use to other common activities like streaming Netflix or using a lightbulb. It can be done with different hardware, and the process is more distributed and less energy-intensive.

While large language models are being developed, most computational power, and therefore most electricity, goes to training, Charlie Snell, a PhD student at the University of California at Berkeley who studies artificial intelligence, told me. “For a long time training was the dominant term in computing because people weren’t using models much.” But as these models become more popular, that balance could shift.

“There will be a tipping point depending on the user load, when the total energy consumed by the inference requests is larger than the training,” said Jovan Stojkovic, a graduate student at the University of Illinois who has written about optimizing inference in large language models.

And these new reasoning models could bring that tipping point forward because of how computationally intensive they are.

“The more output a model produces, the more computations it has performed. So, long chain-of-thoughts leads to more energy consumption,” Husom of SINTEF Digital told me.

OpenAI staffers have been downright enthusiastic about the possibilities of having more time to think, seeing it as another breakthrough in artificial intelligence that could lead to subsequent breakthroughs on a range of scientific and mathematical problems. “o1 thinks for seconds, but we aim for future versions to think for hours, days, even weeks. Inference costs will be higher, but what cost would you pay for a new cancer drug? For breakthrough batteries? For a proof of the Riemann Hypothesis? AI can be more than chatbots,” OpenAI researcher Noam Brown tweeted.

But those “hours, days, even weeks” will mean more computation, and with it more carbon emissions. “There is no doubt that the increased performance requires a lot of computation,” Husom said.

But Snell told me that might not be the end of the story. It’s possible that over the long term, the overall computing demands for constructing and operating large language models will remain fixed or possibly even decline.

While “the default is that as capabilities increase, demand will increase and there will be more inference,” Snell told me, “maybe we can squeeze reasoning capability into a small model ... Maybe we spend more on inference but it’s a much smaller model.”

OpenAI hints at this possibility, describing its o1-mini as “a smaller model optimized for STEM reasoning,” in contrast to other, larger models that “are pre-trained on vast datasets” and “have broad world knowledge,” which can make them “expensive and slow for real-world applications.” OpenAI is suggesting that a model can know less but think more and still deliver results comparable to or better than those of larger models, which might mean more efficient, less energy-hungry large language models.

In short, thinking might use less brain power than remembering, even if you think for a very long time.

Catching up with the American Council on Renewable Energy’s Ray Long.

Today’s chat is with Ray Long, CEO of the American Council on Renewable Energy. We first discussed the odds of permitting reform a year and a half ago, for one of the first Q&As in The Fight. Flash forward and we’re still in the same situation, but now also wrestling with added demand for electricity to power data centers. I wanted to talk again about whether he thought the rise of artificial intelligence would increase the odds of some federal deal happening any time soon. The result: a wide-reaching conversation about the future of the electric grid, the struggles to win community buy-in and the sclerotic nature of the U.S. Congress.

The following conversation was lightly edited for clarity.

Do you think the buildout of our energy grid is entwined with the rise of the nation’s data center buildout?

When you look at what we need over the next four years — 166 gigawatts, 15 times the peak load of New York City — that’s a lot of power to build. Roughly half of that is for data center and AI growth.

There are five things we can build in the next four years at scale to address that collective amount. First, it’s transmission — the transmission buildout will help to get a modern grid to enable power flow to where it’s needed in a much more effective way. That’s the first step because if we just build all that power, the current grid can’t handle it.

Second, there are four supply technologies that can be built: solar, batteries, wind, and natural gas. All four of those technologies, we know there’s enough equipment here in the U.S. available for purchase that we can build at volume. And I’ll say this — natural gas is only about 10% of all those gigawatts because of the availability of turbines from suppliers. You can’t get enough over the next four years. So when I talk about decarbonization, most of what is built to address this issue is zero-carbon resources, renewable energy resources.

If you were to compare the current conversation around data center development to the debate over developing renewable energy in the U.S. — or energy in general — do you see any similarities or differences?

There are always issues with permitting projects. Communities are always going to have concerns about what’s built in their backyards.

What’s new — and your polling shows this — is the level of concern communities have. But here’s the thing: Most of this can be overcome by developers going in, listening to what the needs of the communities are, then responding and through the permitting process addressing those concerns. You can’t do that 100% of the time. But my experience is, when you take that sort of approach, you can overcome a lot of it.

Most of the large data centers are actually doing the things I’m discussing — going in and saying, Look, we want to be grid interconnected because grid connection at the end of the day means the resources we’re bringing to bear are also going to make a stronger grid. Number two, it's investing in power generation sources like the ones I said — and those power sources will be on the grid, so they’ll solve for the increased power demands of a community.

Third, water. They should bring the water solutions. You’re seeing data centers coming in and saying it head on now, that they have closed-loop systems or whatever the solution is. At the end of the day, the communities they’re proposing these in have a real negotiating opportunity to make sure they’re holding the data center developers accountable to the needs of the community.

For a community to say we don’t want it here misses a real opportunity for those communities to get the power they need, the grid they need, and the ability to bring down energy costs.

How is the data center debate affecting permitting reform conversations in Washington, from your perspective?

Permitting reform in the U.S. at the state and federal level has been broken for years. The SunZia transmission project? It took 17 years to permit. Ribbon-cutting is in a week or two and there’s still litigation around it. From a business perspective, it’s just untenable, and it’s a miracle that the project is getting built. Developers need a chance to come in and have their project evaluated. Both the community and the developer should be able to get to a go or no-go in a couple of years on one of these projects.

How is data center growth affecting the permitting reform discussion? It’s a very hot issue right now. Right now I think in part because the data center issue is so huge — because we’ve only got four years to solve for the first really big tranche of power we need and prices across the board for electricity are escalating — this is coming to a head. The data center load is a part of the catalyst to get people talking about it [permitting reform].

Do you expect legislating in Congress on permitting reform this year? Anything beyond more conversation?

My hope is that we get a bill. A few weeks ago someone from the administration was quoted as saying they wanted a framework for a bill by the end of May, and it’s June now. We haven’t seen both sides or the administration coalesce around a final product yet.

We’re in a midterm election cycle. Typically it’s very difficult during these cycles to move bills like this. At the same time, with electricity prices increasing and the need to build more, to fix this, I’m very hopeful something will come together. And look at the Senate — you’ve got Republicans and the Democratic ranking members talking about this. It’s all good signs.

If everyone’s talking about energy and affordability during this election, isn’t that a good thing for action in the next Congress?

I’ll say this: You’re seeing the catalyst for it right now with prices rising, and almost every grid operator around the country has raised concerns about shortages at some point this year or next year. It’ll hopefully be enough to have policymakers do something about it this year.

Plus more of the week’s biggest development fights.

1. Ohio — This state might just be the most important flashpoint in the national fight over advanced energy and tech infrastructure.

2. Laramie County, Wyoming — The Cowboy State’s capital city is one of the few to reject a data center moratorium. But tech companies, don’t get your hopes up too high.

3. Los Angeles County, California — Elsewhere, we saw the first city in California vote to ban data centers … once and for all.

4. Charles County, Maryland — This populous county south of D.C. is now out of reach for data center development.

5. Baldwin County, Alabama — There will be a vote at the end of this month on whether to ban solar in the county whose opposition nearly prompted a statewide moratorium on development.

6. Hopkins County, Texas — I have one last update related to a large data center legal fight we’ve been covering closely.

The national AI data center moratorium has momentum.

As I’ve been documenting for months here at The Fight, data center opposition is surging across the country. Our latest Heatmap Pro poll puts some very hard numbers behind that picture. More than 7 in 10 Americans oppose new data center construction near where they live, up from just over 4 in 10 last fall. Part of what’s driving that opposition: More than half of respondents hold data centers largely responsible for rising electricity prices, and nearly half are pessimistic about the effect artificial intelligence will have on their lives.

Here’s yet another data point from our poll that underscores the intensity of the opposition: A majority of Americans now say they support a nationwide halt to new data center construction.

Digging into demographics, support for a national AI data center moratorium breaks predictably along lines of age and gender: younger people are more likely to back the idea, as are women. Americans are just as likely to back moratoria in their own states as they are a national stop to development, indicating that the industry’s public relations problem may run deep among its critics.

The notion of an AI data center moratorium comes from the political left, specifically Senator Bernie Sanders and Representative Alexandria Ocasio-Cortez, who introduced the first bill to enact such a pause earlier this year. Yet its appeal straddles political lines. Among Democrats, 66% said they’d back a national moratorium, compared to just 19% opposed; in the Republican camp, 55% said they backed the idea, compared to 28% opposed. Independents echoed those views as well, with answers falling neatly in between the two sides (58% support, 21% oppose).

The surge in support for a country-wide stop to new data centers stands in contrast to the more hesitant attitude politicians of all stripes have shown toward the opposition movement. That includes the White House, which had embraced a deregulatory approach to fostering AI until this week, when it abruptly changed course and sought early access to new models.

A good example of this political distance exists in Missouri, where Republican Governor Mike Kehoe last month proudly declared that Google was investing $15 billion in a hyperscale data center project in the rural town of New Florence in Montgomery County. After Kehoe’s announcement, the White House’s rapid response media account joined in on celebrating this economic investment, touting the potential for “thousands of construction jobs and hundreds of permanent jobs” from the Google project.

Among the hoi polloi, however, discontent was rife. This was actually the second large data center project in New Florence, and locals in and around this town of fewer than 1,000 residents have been busy suing the county to halt a separate Amazon data center proposed directly across from Google’s project.

Montgomery County is incredibly conservative politically and “has voted red since I can’t even remember,” Sabrina Cope, an organizer with opposition group Preserve Montgomery County, told me over the phone. “They’re turning up their nose at the White House’s support for these kinds of projects. This isn’t an issue solely Democrats or Republicans are upset about.” (The White House did not respond to a request for comment.)

The political mismatch here is also bipartisan.

In New York, state legislators on Thursday passed legislation to enact a one-year pause on new data center permitting. The bill now goes to the desk of New York’s governor, Democrat Kathy Hochul, who has signaled she’s against a broad moratorium. “This is a local decision for municipalities,” Hochul told reporters last month, according to a Politico report. “It’s not a statewide approach, necessarily, but it’s something I’m looking at intensely.”

The scene in the Empire State feels eerily similar to what happened in the Pine Tree State when Maine Democrats sought to enact a moratorium, only to be stymied by a veto from Governor Janet Mills, also a Democrat. Should Hochul spurn the state legislature, she would defy what our poll shows is overwhelming public opinion.

Our poll also found rural voters are almost 10 points more likely than suburban and urban denizens to support a moratorium on new data centers. Given how often land use conflicts flare up in upstate New York, where voters skew Republican, the yeoman’s calculus in both parties might lead more politicians to support temporarily stopping or stalling the data center industry’s growth.

In Illinois, we’re starting to see policy align at least a little more closely with what Democratic voters want. On Friday, Governor J.B. Pritzker announced he would pause data center tax breaks and ask the state legislature to enact a new statute governing the industry’s water and energy use as well as its use of non-disclosure agreements. If Illinois is a harbinger of things to come in blue states, we’ll see more action like this.