A new Nature paper outlines the relationship between rising temperatures and the literal rotation of the Earth.

Thinking too hard about time is a little like thinking too hard about blinking; it seems natural and intuitive until suddenly you’re sweating and it makes no sense at all. At least, that’s how I felt when I came across an incredible new study published in Nature this afternoon by Duncan Agnew, a geophysicist at the Scripps Institution of Oceanography, suggesting that climate change might be affecting global timekeeping.

Our internationally agreed-upon clock, Coordinated Universal Time (UTC), consists of two components: the one you’re familiar with, which is the complete rotation of the Earth around its axis, as well as the average taken from 400 atomic clocks around the world. Since the 1970s, UTC has added 27 leap seconds at irregular intervals to keep atomic time in step with the Earth’s gradually slowing rotation. Then that rotation started to speed up in 2016; June 29, 2022, set a record for the planet’s shortest day, with the Earth completing a full rotation 1.59 milliseconds short of 24 hours. Timekeepers anticipated at that point that we’d need our first-ever negative leap second around 2026 to account for the acceleration.

But such a model doesn’t properly account for the transformative changes the planet is undergoing due to climate change — specifically, the billions of tons of ice melting from Greenland and Antarctica every year.

Using mathematical modeling, Agnew found that the melt-off, as measured by gravity-tracking satellites, has slowed the Earth’s rotation again, enough that a negative leap second will not be required until 2029, three years later than earlier estimates.

While a second here or there might not seem like much on a cosmic scale, as Agnew explained to me, these kinds of discrepancies throw into question the entire idea of basing our time system on the physical position of the Earth. Even more mind-bogglingly, Agnew’s modeling makes the astonishing case that so long as our time system stays tied to the Earth’s rotation, climate change will be “inextricably linked” to global timekeeping.

Confused? So was I, until Agnew talked me through his research. Our conversation has been edited and condensed for clarity.

How did you get involved in researching this? I’d never have expected there to be a relationship between climate change and timekeeping.

Pure accident. I’m a geophysicist and I have an avocational interest in timekeeping, so I know all about leap seconds and the history of atomic clocks. I thought about writing a paper figuring out statistically what the next century would bring in terms of leap seconds.

When I started working on the paper, I realized there was a signal that I needed to allow for, which was the change induced by melting ice — which has been studied, there are plenty of papers on this satellite gravity signal. But nobody has, as far as I can tell, related it to rotation. Mostly because, from a geophysical standpoint, that’s not very interesting.

Interesting. Or, well, I guess not interesting.

I mean, there is geophysical literature on this, but it’s largely, Okay, we see this signal, and gravity doesn’t mesh with what we think we know about ice melt. Does it measure what we think we know about sea level change? How does the geophysics all fit together? And the fact that it changes Earth’s rotation is kind of a side issue.

I did not know about this when I got started on this project; it appeared as I was working on it. I thought, “Wait, I need to allow for this.” And when I did, it produced the — I don’t want to use the words “more important” because of the climate change part, but it produced a secondary result, which was that this potential for a negative leap second became clear.

Walk me through how the ice melting at the poles changes the Earth’s rotation.

This is the part that’s easy to explain. Ice melts. A lot of water that used to be at the poles is now distributed all over the ocean. Some of it is close to the equator. The standard picture for what’s called change of angular velocity because of moment of inertia — ignore all the verbiage — but the standard picture is of an ice skater who is spinning. She has her arms over her head. When she puts her arms out, she will slow down — like the water going from the poles to the equator. And that’s it. This is the simple part of the problem.
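The skater picture can be put in numbers with a minimal sketch, assuming the Earth behaves as a single rigid body (so it ignores the core interaction discussed next). Angular momentum is conserved, so if the moment of inertia grows by some fraction, the length of the day grows by the same fraction. The function name and the fractional change used here are hypothetical and purely illustrative; they are not figures from Agnew's paper.

```python
def day_length_after_inertia_change(day_length_s: float,
                                    fractional_inertia_change: float) -> float:
    """Return the new day length after the moment of inertia changes.

    Conservation of angular momentum gives I1 * w1 = I2 * w2. Since the
    day length is T = 2*pi / w, it follows that T2 = T1 * (I2 / I1).
    """
    return day_length_s * (1.0 + fractional_inertia_change)


SECONDS_PER_DAY = 86_400.0
# Hypothetical, illustrative value: mass moving toward the equator raises
# the moment of inertia by one part in 100 million.
HYPOTHETICAL_CHANGE = 1e-8

new_length = day_length_after_inertia_change(SECONDS_PER_DAY, HYPOTHETICAL_CHANGE)
extra_ms = (new_length - SECONDS_PER_DAY) * 1000.0
print(f"Day lengthens by about {extra_ms:.3f} ms")
```

Even a tiny fractional change shows up as a fraction of a millisecond per day, which is the scale at which leap-second bookkeeping operates.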

So what’s the hard part?

The hard part is explaining the part about the Earth’s core. If you have two things that are connected to each other and rotating and one of them slows down, the other one has to speed up. I have not been able to think of an ice skater-like metaphor to go with that, but the simple one is if you were to put a bowl of water on a lazy Susan and you spin the bowl, then the water will start to spin. It won’t spin initially, but then it will start.

If you started stirring the water in the other direction, that would slow the lazy Susan down. And that’s the interaction between the core and the solid part of the Earth.

And is that causing the negative leap second to move back three years?

That’s why the leap second might happen at all. On a very long timescale, what’s happening is that the tides are slowing the Earth down. The Earth being slower than an atomic clock means that you need a positive leap second every so often. That was the case in 1972, when they started using leap seconds. The assumption was that the Earth would just keep slowing down and so there would be more positive leap seconds over time.

Instead, the Earth has sped up, entirely because of the core, and that’s not something that people necessarily anticipated. When you take the effect of melting ice out, it becomes clear there’s this steady deceleration of the core; the core is rotating more and more slowly. If you extrapolate that — which is a somewhat risky thing to do, you can’t really predict what the core is going to do — then you discover that there is a leap second, in 2029. The ice melting is going in the other direction; if the ice melting hadn’t occurred, then the leap second would come even earlier. Is this all making sense?

I think I’m grasping it.

Just so you know, one of the two reviewers of this paper was someone in geophysics who said, “I know all this stuff. I wasn’t familiar with the rotation part. This paper has an awful lot of moving parts.”

So, it’s just a difference of a second. Why does this even matter?

We are all familiar with the problem of not being synchronized — we just went through it. If you forget that we did Daylight Savings Time, then you’re an hour off from everybody else and it’s bewildering and a nuisance.

Same problem with leap seconds, except for us, a second is not a big deal. For a computer network, though, a second is a big deal. And why is that? Well, for example, in the United States, the rules for stock markets say that everything that is done has to be accurately timed to a 20th of a second. In Europe, it’s actually to the nearest 1,000th of a second. If we were all just farmers or something, it wouldn’t be a problem, but there’s this whole infrastructure that’s invisible to us that tells our phones what time it is, and allows GPS to work, and everything else.

The easiest thing to do would be to not have a negative leap second. Indeed, there are plans not to have leap seconds anymore because for computer networks, they’re an enormous problem. They arrive at irregular intervals; some human being has to put the information into the computer; the computer has to have a program that tells it when the leap seconds are; and most computer programs can’t tell whether it’s a plus or minus second because there’s never been a minus before. From the computer network standpoint, it would be simplest to just not do this.

So, you ask, why are we doing this? In 1972, when leap seconds were instituted, there were two communities that cared about precise time. One was the people who cared about the frequency of your radio station and other kinds of telecommunications. They wanted to use atomic clocks with frequencies that didn’t change, but that didn’t mesh with what the Earth was doing.

Who cares about time telling you how the Earth is rotating? Well, the answer then was that there were people who used the stars for celestial navigation. Back then, celestial navigation was used not just for ships, but for airplanes — if you flew across the ocean, there was a guy in the cockpit, an actual navigator, who would use a periscope to look at the stars and locate the plane, if only as sort of a backup system. That community is now gone. Almost nobody uses celestial navigation as a primary, or even a secondary, way of finding out where they are anymore because of GPS.

My own personal view — and I can warn you, there’s a huge amount of dispute about this — is that we’d be fine if we just stopped having leap seconds at all.

Is there a … governing body of time? That forces us to do leap seconds?

There’s a giant tangle of international organizations that deal with this, but the rules were set by the people in charge of keeping radio stations aligned because radio broadcasts were how time signals were distributed back in 1972. So the rule was created. Who makes that decision is something called the International Earth Rotation and Reference Systems Service, which uses astronomy to monitor what the Earth is doing. They can predict a little bit in advance where things are going to be, and if within six months things are going to be more than half a second out, they will announce there will be a leap second.
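The decision rule as Agnew describes it can be sketched in a few lines. This is a simplified illustration of the interview's description, not the service's actual procedure: the half-second threshold comes from his answer, while the function name and the sign convention (Earth-rotation time minus atomic-based UTC) are assumptions made for this example.

```python
def leap_second_call(predicted_ut1_minus_utc_s: float,
                     threshold_s: float = 0.5) -> int:
    """Return +1, -1, or 0 for a positive, negative, or no leap second.

    predicted_ut1_minus_utc_s: forecast gap, in seconds, between time
    derived from the Earth's rotation and UTC (assumed sign convention).
    threshold_s: the "half a second out" figure from the interview.
    """
    if predicted_ut1_minus_utc_s < -threshold_s:
        return 1    # Earth is lagging the clocks: insert a second
    if predicted_ut1_minus_utc_s > threshold_s:
        return -1   # Earth is ahead of the clocks: remove a second
    return 0        # Within tolerance: no announcement


for gap in (-0.6, 0.2, 0.6):
    print(gap, leap_second_call(gap))
```

Every leap second so far has fallen in the first branch; the negative leap second discussed here would be the first time the second branch fired.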

Back to climate change: It seems pretty amazing that something like melting ice can throw things off so much.

All the stuff about negative seconds is important, but it’s only important because of this infrastructure, because we have all these rules. Strip all of that away and the most important result becomes the fact that climate change has caused an amount of ice melt that is enough to change the rotation rate of the entire Earth in a way that’s visible.

How do you talk to people about the gigatons of ice that Greenland loses every year? Do you talk about “water that could cover the entire United States to the depth of X” to get it into people’s minds? Yes, these are small changes in the rotation rate, but just the fact that we can say, “Look, this is slowing down the entire Earth” seems like another way of saying that climate change is unprecedented and important.

How do we proceed, then, if climate change is messing with our system?

There was a lot of resistance to even introducing atomic time. Time was thought of as being about Earth’s rotation and the astronomers didn’t want to give it up. In fact, in the 19th century, observatories would make money by selling time signals to the rest of the community. Then, in the 1950s, the physicists showed up, ran atomic clocks, never looked at the stars, and said, “We can do time better.” The physicists were right. But it took the astronomical community a while to come around to accepting that was how time was going to be defined.

If we get rid of leap seconds then we’d really have cut the connection between the way in which human beings have always thought of time as being, say, from noon to noon, or from sunrise to sunset, and we’d be replacing it with some bunch of guys in a laboratory somewhere running a machine. For some people, it’s very troubling to think of severing the keeping of time from the Earth’s rotation.

You lose a bit of the romance, I think. But clearly, tying our way of describing the linear passage of sequential events to the Earth’s rotation is going to be messy.

Exactly right. There’s a quote from, of all people, St. Augustine, saying, “I know what time is, but if somebody asked me, I can’t tell them.”

On Thacker Pass, the Bonneville Power Administration, and Azerbaijan’s offshore wind

Current conditions: New York City is bracing for triple-digit heat in some parts of the five boroughs this week • The warm-up along the East Coast could worsen the drought parching the country’s southeastern shores • After temperatures in war-ravaged Gaza reached 95 degrees Fahrenheit on Sunday, the Palestinian enclave is cooling back into the 80s and 70s all week.

Assuming world peace is something you find aspirational, here’s the good news: By all accounts, President Donald Trump’s two-day summit in Beijing with Chinese President Xi Jinping went well. Here’s the bad news: The energy crisis triggered by the Iran War is entering a grim new phase. Nearly 80 countries have now instituted emergency measures as the world braces for slow but long-predicted reverberations of the most severe oil shock in modern history. With demand for air conditioning and summer vacations poised to begin in the northern hemisphere’s summer, already-strained global supplies of crude oil, gasoline, diesel, and jet fuel will grow scarcer as the United States and Iran mutually blockade the Strait of Hormuz and halt virtually all tanker shipments from each other’s allies. “We are taking that outcome very seriously,” Paul Diggle, the chief economist at fund manager Aberdeen, told the Financial Times, noting that his team was now considering scenarios where Brent crude shoots up to $180 a barrel from $109 a barrel today. “We are living on borrowed time.”

The weekend brought a grave new energy concern over the conflict’s kinetic warfare. On Sunday, the United Arab Emirates condemned a drone strike it referred to as a “treacherous terrorist attack” that caused a fire near Abu Dhabi’s Barakah nuclear station. The UAE’s top English-language newspaper, The National, noted that the government’s official statement did not blame Iran explicitly. The attack came just a day after the International Atomic Energy Agency raised the alarm over drone strikes near nuclear plants after a swarm of more than 160 drones hovered near key stations in Ukraine last week.

We are apparently now entering the megamerger phase of the new electricity supercycle. On Friday, the Financial Times broke news that NextEra Energy is in talks with rival Dominion Energy for a tie-up that would create a more than $400 billion utility behemoth in one of the biggest deals of all time. The merger talks, which The Wall Street Journal confirmed, could be announced as early as this week. The combined company would reach from Dominion’s home base of Virginia, where the northern half of the state is serving as what the FT called “the heartland of U.S. digital infrastructure serving the AI boom,” down to NextEra’s home state of Florida, where the subsidiary Florida Power & Light serves roughly 6 million customers. While Dominion dominates data centers in Northern Virginia, NextEra last year partnered with Google to build more power plants and even reopen the Duane Arnold nuclear station in Iowa.

Trump digs lithium. In fact, he’s such a fan of Lithium Americas’ plan to build North America’s largest lithium mine on federal land in Nevada that he renegotiated a Biden-era deal to finance construction of the Thacker Pass project to secure a 5% equity stake in the publicly traded developer. Yet the White House’s macroeconomic policies are pinching the nation’s lithium champion. During its first-quarter earnings call with investors last week, Lithium Americas cautioned that the Trump administration’s steel tariffs, coupled with inflation from disrupted shipments through the Strait of Hormuz, could add between $80 million and $120 million to construction costs at Thacker Pass. Most of the impact, Mining.com noted, is expected this year. Once mining begins, the project could spur new discussion of a strategic lithium reserve, the case for which Heatmap’s Matthew Zeitlin articulated here.

The Department of Energy has selected Travis Kavulla, an energy industry veteran, as the 17th chief executive and administrator of the Bonneville Power Administration, NewsData reported. Founded under then-President Franklin D. Roosevelt in 1937, the federal agency is a holdover from the New Deal era before utilities had built out electrical networks in rural parts of the U.S. Unlike the Tennessee Valley Authority — which functions as a standalone utility that owns and sells power, though it’s wholly owned by the federal government and its board of directors is appointed by the White House — the BPA, as it’s known, is a power marketing agency that sells electricity from hydroelectric dams owned by the Army Corps of Engineers and the Department of the Interior’s Bureau of Reclamation. Kavulla currently serves as the head of policy for Base Power, the startup building a network of distributed batteries to back up the grid. He previously worked as the regulatory chief at the utility NRG Energy, and as a state utility commissioner in his home state of Montana. NewsData, a trade publication focused on Western energy markets, cautioned that the Energy Department may hold off on announcing the appointment for “the next few days or weeks” as sources warned that “it might be delayed while the department conducts a background check, or to allow the new undersecretary of energy, Kyle Haustveit, to be confirmed.”

Reached Sunday night via LinkedIn message, Kavulla politely declined to comment on whether he was appointed to lead the BPA.

Offshore wind may be spinning in reverse in the U.S. as the Trump administration attempts to, as Heatmap’s Jael Holzman put it, “murder” an industry through death by a thousand cuts. But elsewhere in the world, offshore wind is booming. Just look at Azerbaijan. Despite its vast reserves of natural gas, the nation on the Caspian Sea is looking into building its first offshore turbines. On Friday, offshoreWIND.biz reported that the Azerbaijan Green Energy Company, owned by the Baku-based industrial giant Nobel Energy, had commissioned a Spanish company to design a floating LiDAR-equipped buoy for the country’s first turbines in the Caspian. The debut project, backed by the Azeri government, would start with 200 megawatts of offshore wind and eventually triple in size.

Before the wealthy software entrepreneur Greg Gianforte ran to be governor of Montana, he donated millions of dollars to a Christian-themed museum that claims humans walked alongside dinosaurs and the Earth is just 6,000 years old. After winning the state’s top job, the Republican set about revoking virtually all policies related to climate change, including banning the projected effects of warming from state agencies’ risk forecasts. With drought withering the state, however, Gianforte has turned to perhaps the most ancient policy approach humanity’s leaders have called upon to fix devastating weather patterns: prayer. On Sunday, Gianforte declared an official day of prayer for rain. “Prayer is the most powerful tool we have,” he wrote in a post on X. “I ask all who are faithful to come to God with thanks and pray.”

With construction deadlines approaching, developers still aren’t sure how to comply with the new rules.

Certainty, certainty, certainty — three things that are of paramount importance for anyone making an investment decision. There’s little of it to be found in the renewable energy business these days.

The main vectors of uncertainty are obvious enough — whipsawing trade policy, protean administrative hostility toward wind, a long-awaited summit with China that appears to have done nothing to resolve the war with Iran. But there’s still one big “known unknown” — rules governing how companies are allowed to interact with “prohibited foreign entities,” which remain unwritten nearly a year after the One Big Beautiful Bill Act slapped them on just about every remaining clean energy tax credit.

The list of countries that qualify as “foreign entities of concern” is short: Russia, Iran, North Korea, and China. Post-OBBBA, a firm may be treated as a “foreign-influenced entity” if at least 15% of its debt is held by entities tied to one of these countries, though in reality China is the only one that matters. The rule also kicks in when such a foreign entity has the authority to appoint executive officers, when a single entity owns 25% or more of the firm, or when such entities together own at least 40%.
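The thresholds above amount to a simple any-of test, sketched here under loud assumptions. The class, field names, and example figures are hypothetical, and the sketch takes each percentage as a given input, which is exactly the part that remains unsettled: how to calculate those shares is what the missing guidance would have to define. It illustrates the article's summary and is not a reading of the statute.

```python
from dataclasses import dataclass


@dataclass
class OwnershipProfile:
    """Hypothetical inputs; how to measure each one is still unresolved."""
    debt_share: float            # share of debt tied to the listed countries
    largest_single_stake: float  # biggest ownership stake by one such entity
    combined_stake: float        # total ownership by all such entities
    can_appoint_officers: bool   # authority to appoint executive officers


def looks_foreign_influenced(p: OwnershipProfile) -> bool:
    """Any one trigger is enough; the thresholds are those quoted above."""
    return (
        p.debt_share >= 0.15
        or p.can_appoint_officers
        or p.largest_single_stake >= 0.25
        or p.combined_stake >= 0.40
    )


# Illustrative firm: small individual stakes that add up past 40%.
example = OwnershipProfile(debt_share=0.05, largest_single_stake=0.20,
                           combined_stake=0.45, can_appoint_officers=False)
print(looks_foreign_influenced(example))
```

Because a single trigger flips the result, a firm can stay under three thresholds and still fail on the fourth, which is part of why the test is described as all or nothing.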

Any company that wants to claim a clean energy tax credit must comply with the FEOC rules. How to calculate those percentages, however, the Trump administration has so far failed to say. This is tricky because clean energy projects seeking tax credits must be placed in service by the end of 2027 or start construction by July 4 of this year, which doesn’t leave them much time to align themselves with the new rules.

While the Treasury Department published preliminary guidance in February, it largely covered “material assistance,” the system for determining how much of the cost of the project comes from inputs that are linked to those four nations (again, this is really about China). That still leaves the issue of foreign influence and “effective control,” i.e. who is allowed to own or invest in a project and what that means.

This has meant a lot of work for tax lawyers, Heather Cooper, a partner at McDermott Will & Schulte, told me on Friday.

“The FEOC ownership rules are an all or nothing proposition,” she said. “You have to satisfy these rules. It’s not optional. It’s not a matter of you lose some of the credits, but you keep others. There’s no remedy or anything. This is all or nothing.”

That uncertainty has had a chilling effect on the market. In February, Bloomberg reported that Morgan Stanley and JPMorgan had frozen some of their renewables financing work because of uncertainty around these rules, though Cooper told me the market has since thawed somewhat.

“More parties are getting comfortable enough that there are reasonable interpretations of these rules that they can move forward,” she said. “The reality is that, for folks in this industry — not just developers, but investors, tax insurers, and others — their business mandate is they need to be doing these projects.”

Some of the most frequent complaints from advisors and trade groups come around just how deep into a project’s investors you have to look to find undue foreign ownership or investment.

This gets complicated when it comes to the structures involved with clean energy projects that claim tax credits. They often combine developers (who have their own investors), outside investment funds, banks, and large companies that buy the tax credits on the transferability market.

These companies — especially the banks, which fund themselves with debt — “don’t know on any particular date how much of their debt is held by Chinese connected lenders, and therefore they’re not sure how the rules apply, and that’s caused a couple of banks to pull out of the tax equity market,” David Burton, a partner at Norton Rose Fulbright, told me. “It seems pretty crazy that a large international bank that has its debt trading is going to be a specified foreign entity because on some date, a Chinese party decided to take a large position in its debt.”

For those still participating in the market, the lack of guidance on debt and equity provisions has meant that lawyers are having to ascend the ladder of entities involved in a project, from private equity firms who aren’t typically used to disclosing their limited partners to developers, banks, and public companies that buy the tax credits.

“We’re having to go to private equity funds and say, hey, how many of your LPs are Chinese?” Burton told me. This is not information these funds are typically eager to share. If a lawyer “had asked a private equity firm please tell us about your LPs, before One Big Beautiful Bill, they probably would have told us to go jump in the lake,” Burton said.

The deals are still happening, but “the legal fees are more expensive. The underwriting and due diligence time is longer, there are more headaches,” he told me.

Typically these deals involve joint ventures formed for a specific deal, which can then transfer the tax credits to another entity with more tax liability to offset. The joint venture might be majority owned by a public company, with a large minority position held by a private equity fund, Burton said.

For the public company, Burton said, his team has to ask “Are any of your shareholders large enough that they have to be disclosed to the SEC? Are any of those Chinese?” For the private equity fund, they have to ask where its investors are residents and what countries they’re citizens of. While private equity funds can be “relatively cooperative,” the process is still a “headache.”

“It took time to figure out how to write these certifications and get me comfortable with the certification, my client comfortable with it, the private equity firm comfortable with it, the tax credit buyer comfortable with it,” he told me, referring to the written legal explanation for how companies involved are complying with what their lawyers think the tax rules are.

Players such as the American Council on Renewable Energy hope that guidance will cut down on this certification time by limiting the universe of entities that will have to scrub their rolls of Chinese investors or corporate officers.

“It’d be nice if we knew you only have to apply the test at the entity that’s considered the tax owner of the project,” i.e. just the joint venture that’s formed for a specific project, Cooper told me.

“There’s a pretty reasonable and plain reading of the statute that limits the term ’taxpayer’ to the entity that owns the project when it’s placed in service,” Cooper said.

Many in the industry expect more guidance on the rules by the end of the year, though as Burton noted, “this Treasury is hard to predict.”

In the meantime, expect even more work for tax lawyers.

“We’re used to December being super busy,” Burton said. “But it now feels like every month since the One Big Beautiful Bill passed is like December, so we’ve had, like, you know, eight Decembers in a row.”

Deep cuts to the department have left each staffer with a huge amount of money to manage.

The Department of Energy has an enviable problem: It has more money than it can spend.

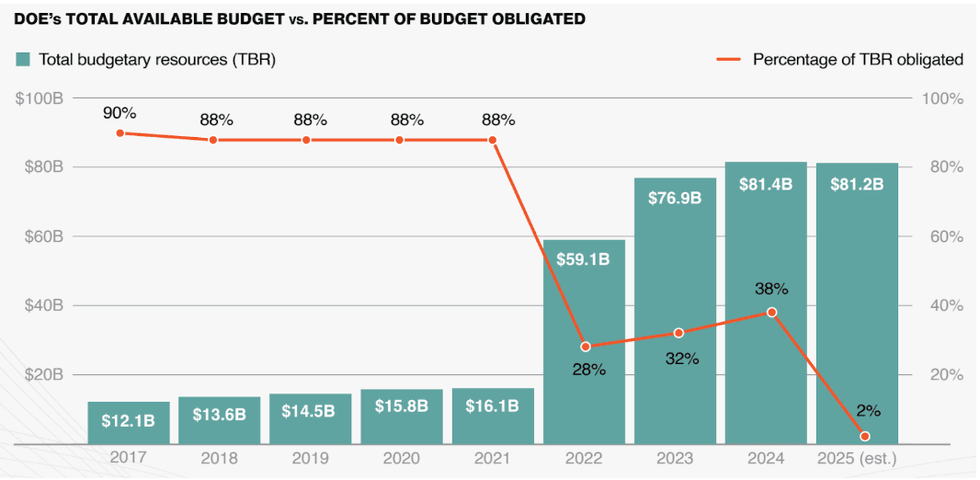

DOE disbursed just 2% of its total budgetary resources in fiscal year 2025, according to a report released earlier this year from the EFI Foundation, a nonprofit that tracks innovations in energy. That figure is far lower than the 38% of funds it distributed the year prior.

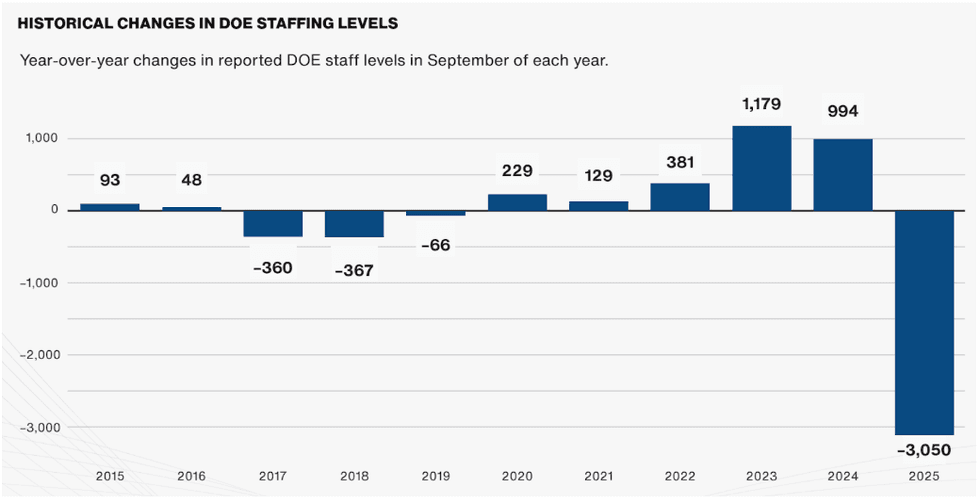

While some of that is due to political whiplash in Washington, there is another, far more mundane cause: There simply aren’t that many people left to oversee the money. Thanks to the Department of Government Efficiency’s efforts, one in five DOE staff members left the agency. On top of that, Energy Secretary Chris Wright shuffled around and combined offices in a Kafkaesque restructuring. Short on workers and clear direction, the department appears unable to churn through its sizable budget.

Though Congress provides budgetary authority, agencies are left to allot spending for the programs under their ambit, and then obligate payments through contracts, grants, and loans. While departments are expected to use the money they’re allocated, federal staff have to work through the gritty details of each individual transaction.

As a result of its reduced headcount, DOE’s employees are each responsible for far more budgetary resources than ever before.

“DOE is facing its largest imbalance in its history,” Alex Kizer, executive vice president of EFI Foundation, told me. In fiscal year 2017, DOE budgeted around $4.7 million per full-time employee. In the fiscal year 2026 budget request, that figure reached $35.7 million per worker — about eight times more.
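The "about eight times" comparison follows directly from the two per-employee figures quoted above, as this quick check shows. The variable names are illustrative; the dollar amounts are the ones cited from the EFI Foundation.

```python
# Budgetary resources per full-time employee, as quoted above.
per_employee_fy2017 = 4.7e6    # dollars, fiscal year 2017
per_employee_fy2026 = 35.7e6   # dollars, fiscal year 2026 budget request

ratio = per_employee_fy2026 / per_employee_fy2017
print(f"Each employee now answers for {ratio:.1f}x as much money")
```

The exact ratio is a little under eight, so each remaining staffer is responsible for well over seven times the budget a 2017 employee handled.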

Part of that increase is the result of the unprecedented injection of funding into DOE from the 2021 Infrastructure Investment and Jobs Act and the 2022 Inflation Reduction Act. The pair of laws, which gave DOE access to $97 billion, constituted the largest investment to combat climate change in the nation’s history.

The epoch of federally backed renewable energy investment proved to be short-lived, however. Once President Trump retook office last year, his administration froze funds and initiated a purge of federal workers that resulted in 3,000 staffers (about one in five) leaving DOE through the Deferred Resignation Program. The administration canceled hundreds of projects, evaporating $23 billion in federal support.

While the One Big Beautiful Bill Act passed last summer depleted some of the IRA’s coffers and sunsetted many tax credits years early, it only rescinded about $1.8 billion from DOE, according to the EFI Foundation. Much of the IRA’s spending had already gone out the door or was left intact.

This leaves DOE in a strange position: Its budget is historically high, but its staffing levels have suffered an unprecedented drop.

Even before the short-lived Elon Musk-run agency took a chainsaw to the federal workforce, DOE struggled to hire enough people to keep up with the pace of spending demanded by the IRA’s deadlines. The Loan Programs Office, for example, was criticized for moving too slowly in shelling out its hundreds of billions in loan authority. According to a report from three ex-DOE staffers that Heatmap’s Emily Pontecorvo covered, the IRA’s implementation suffered from a lack of “highly skilled, highly talented staff” to carry out its many programs.

“The last year’s uncertainty and the staff cuts, the project cancellations, those increase an already tightening bottleneck of difficulty with implementation at the department,” Sarah Frances Smith, EFI Foundation’s deputy director, told me.

One former longtime Department of Energy staffer who asked not to be named because they may want to return one day told me that as soon as Trump’s second term started, funding disbursement slowed to a halt. Employees had to get permission from leadership just to pay invoices for projects that had already been granted funding, the ex-DOE worker said.

While the Trump administration quickly moved to hamstring renewable energy resources, staff were kept busy complying with executive orders, such as removing any mention of diversity, equity, and inclusion from government websites, and responding to automated “What did you do last week?” emails.

On top of government funding drying up, Kizer told me that the confusion surrounding DOE has had a “cooling effect on the private sector’s appetite to do business with DOE,” though the size of that effect is “hard to quantify.”

Under President Biden, DOE put a lot of effort into building trust with companies doing work critical to its renewable energy priorities. Now, states and companies alike are suing DOE to restore revoked funds. In a recent report, the Government Accountability Office warned, “Private companies, which are often funding more than 50 percent of these projects, may reconsider future partnerships with the federal government.”

Clean energy firms aren’t the only ones upset by DOE’s about-face. Even the Republican-controlled Congress balked at President Trump’s proposed deep cuts to DOE’s budget in its latest round of budget negotiations. Appropriations for fiscal year 2026 will be just slightly lower than the year before — though without additional headcount to manage it, the same difficulties getting money out the door will remain.

The widespread staff exit also appears to have slowed work supporting the administration’s new priorities, namely coal and critical minerals. LPO, which was rebranded the “Office of Energy Dominance Financing,” has announced only a few new loans since President Biden left office. Southern Company, which received the Office’s largest-ever loan, was previously backed by a loan to its subsidiary Georgia Power under the first Trump administration.

Despite Trump’s frequent invocation of the importance of coal, DOE hasn’t accomplished much for the technology besides some funding to keep open a handful of struggling coal plants and a loan to restart a coal gasification plant for fertilizer production that was already in LPO’s pipeline under Biden.

Even if DOE wanted to become an oil and gas-enabling juggernaut, it may not have the labor force it needs to carry out a carbon-heavy energy mandate.

“When you cut as many people as they did, you have to figure out who’s going to do the stuff that those people were doing,” said the ex-DOE staffer. “And now they’re going to move and going, Oh crap, we fired that guy.”