Just a few years ago, the subject was basically taboo.

Katherine Ricke, a University of California at San Diego sustainability professor, turned to face the roomful of attentive scientists at the American Geophysical Union a few weeks ago. In any other year, she would have been about to break one of climate science’s biggest taboos.

“Geoscientists know very well at this point that solar geoengineering is not a very good substitute for emissions reductions,” she said. “The question that comes next, then, is, Is solar geoengineering a complement to mitigation?”

The answer, she then argued, was yes. While cutting greenhouse gas emissions might bring down the planet’s temperature in the long term, she said, it would not do so immediately. But spraying sulfate aerosols into the stratosphere was pretty cheap, and it could quickly help relieve the planet’s fever. “Solar geoengineering has a rapid but temporary effect on global temperatures, while the effect of emissions reduction is deferred but persistent,” she said.

Ricke went on to ask whether the economics of solar geoengineering made sense — and about its risks. Would it deprive other important efforts of research funding? Probably not. Could it encourage the public to procrastinate on cutting emissions? Maybe yes.

Yet perhaps the presentation’s biggest surprise — for people who have long thought about the issue — was that nobody in the audience of normal climate scientists gasped. Nobody shooed Ricke out of the room or told her that her talk didn’t belong in a session devoted to achieving net zero — that is, to climate mitigation, to reducing carbon pollution, not blotting out its effects.

To get a sense of what American climate scientists are talking about, you can do a lot worse than attending the annual fall meeting of the AGU, where more than 20,000 scientists come to network, present new research, and gossip about their superiors. This year, AGU was held in the cavernous Moscone Center in San Francisco. The arrival of tens of thousands of people immediately broke the city’s post-pandemic downtown; Starbucks ran out of breakfast sandwiches and every restaurant within a quarter mile of the conference site was jammed before the 8:30 a.m. sessions.

AGU is almost always held, for some nonsensical reason, at roughly the same time as the annual United Nations climate conference, and the two events have a lot in common: They are bazaars, free-for-alls, half salon and half trade show, and each way too big for any one person to see. Yet by keen attention to sounds and signals, one can detect a vibe at both events. The vibe of this year’s AGU was clear: Geoengineering is here to stay.

This sincere interest in geoengineering and climate modification represents a broader shift in climate science from observation to intervention. It also represents a huge change for a field that used to regard any interference with the climate system — short of cutting greenhouse gas emissions — as verboten. “There is a growing realization that [solar radiation management] is not a taboo anymore,” Dan Visioni, a Cornell climate professor, told me. “There was a growing interest from NASA, NOAA, the national labs, that wasn’t there a year ago.”

At the highest level, this acceptance of geoengineering shows that scientists have seriously begun to imagine what will happen if humanity blows its goal of cutting greenhouse gas emissions.

Why the sudden embrace of geoengineering? Part of it is that the Intergovernmental Panel on Climate Change has become increasingly insistent that carbon removal is crucial — and opened the door to other once-taboo ideas.

But another part is that climate disasters seem to get bigger and bigger every year, and humanity seems to be growing more and more alarmed about them, yet no country plans to cut emissions fast enough to relieve global warming’s near-term dangers. 2023 was the warmest year in modern human history, but the Paris Agreement’s temperature goals remain far off. “It was always pretty clear that the kind of emissions reduction to stay below 1.5 [degrees Celsius] was never going to happen in any realistic scenario, but there was always a conviction that just by saying it was physically possible, it was going to inspire people into some kind of action,” Visioni said. “2023 has shown this to not be the case.”

Perhaps one more reason is that, for better or worse, geoengineering is already happening. Economists have long argued that stratospheric aerosol injection is so cheap that someone will eventually try to do it. Then, last year, Luke Iseman, a 39-year-old former employee of the startup incubator Y Combinator, claimed to have conducted rogue experiments in western Mexico delivering reflective sulfur molecules to the atmosphere using weather balloons. It’s unclear whether this “move fast and break things”-styled effort actually reflected any meaningful sunlight back into space. What it did do was awaken the Mexican government to a regulatory arbitrage. It responded by banning solar geoengineering.

Yet more serious attempts have been made at bringing geoengineering into the mainstream. In September, the Overshoot Commission, a panel of current and former world leaders — including an influential Chinese adviser and a former Canadian prime minister — recommended that the world begin to seriously study solar geoengineering. And Congress recently mandated that the White House Office of Science and Technology Policy study the technique — although the office’s resulting report also suggested that scientists are still treading carefully around it. Its hilariously curt title: “Congressionally-Mandated Report on Solar Radiation Modification.”

“The way that broader climate intervention has started to move into the mainstream has been kind of astounding,” said Shuchi Talati, a University of Pennsylvania scholar and former Energy Department official. “If you look at AGU of four or five years ago, if there was one [solar radiation management] panel, that was novel,” she told me. But this year, there were more panels and side conversations than ever. “You can feel it in the air that there was more interest.”

Ricke’s was far from the only geoengineering presentation in San Francisco this year. In a packed lunchtime session, Lisa Graumlich, AGU’s president, led a town hall about the organization’s draft proposal on how to research climate intervention ethically. “Are we attempting to play God? Do we have the right to do this? What risks are we willing to accept? Or … do we have the right not to?” Cynthia Scharf, a former UN adviser who helped lead a Carnegie Foundation project on how the world could possibly govern geoengineering, told the room by video conference. The crowd wasn’t exactly rewarded for attending: After every panelist had finished going through their introductions, the audience only had time to ask two questions.

Across the hall, more than 60 people were talking about a different kind of climate intervention. For years, scientists have known that the stability of a few glaciers in West Antarctica could mean the difference between quasi-manageable amounts of sea-level rise this century and a rapid, catastrophic surge. So small groups of glaciologists have now started to ask whether those specific glaciers — such as Thwaites, which holds a quadrillion gallons of water and is larger than Florida — could be engineered or modified somehow to slow their collapse.

Perhaps a berm could be built on the seafloor, in front of each of the glaciers, in order to prevent warm water from eroding them. Or maybe holes could be drilled into the glaciers, allowing the warmth of their subsurface to be vented to the surface. Glacial scientists have already met twice this year — at the University of Chicago and later Stanford — to begin hashing out the idea.

Another approach — using ships to spray ocean water into the atmosphere, thereby brightening clouds and reflecting more sunlight into space — was also the subject of several events. One scholar, Chih-Chieh Jack Chen, showed research suggesting that brightening the clouds over just 5% of the ocean surface could cool the planet enough to meet the world’s temperature targets — but that the climatic ripple effects of doing so might simultaneously raise temperatures in Southeast Asia by even more than what global warming would do alone. Others presented work showing that cloud brightening might accidentally shut down the planet’s westerly trade winds — or even silence the Pacific Ocean’s El Niño oscillation.

Then there were the carbon removal people, who arrived by the tens and who seemed to have graduated to a less controversial (and possibly more remunerative) plane than geoengineering. Most scientists seem to have accepted that carbon dioxide removal, or CDR, will need to happen to at least some degree. “CDR is a given. People don’t even consider it to be geoengineering any more, which is what the CDR people have always wanted,” Visioni told me. A new Department of Energy report, released during the conference, argues that by 2050, the United States might be able to suck 1 billion tons of carbon dioxide out of the atmosphere for a mere $130 billion a year, creating 440,000 jobs. In other scenarios — and not only those sponsored by the federal government — America seems likely to become the keystone of the global carbon removal industry, its vast geological capacity and fossil-fuel expertise giving it a competitive advantage.

In anticipation, venture capital and public-sector cash have surged into carbon removal, creating a corps of CDR startups with one foot in the geosciences and the other in Silicon Valley. Their employees were at AGU too, mingling in full force. “It was interesting how much industry was there: researchers at companies, even heads of companies,” Talati told me. “I’ve never really experienced that at AGU.” Employees from Lithos, Heirloom, Carbon Direct, Stripe, and Additional Ventures all registered for the conference; in what might be an AGU first, scientists and technologists sipped cappuccinos and nibbled pastries during an early-morning confab at the Salesforce Tower, a few blocks from the official conference site. “AGU is not the place where you would have expected to find these kinds of people, even just for CDR, so it’s interesting that they’re there,” Visioni said.

The whole thing presented both a stark contrast and an inescapable mirror to COP28, where oil lobbyists roamed the grounds. Some environmental old-timers grumble that the UN climate conference has transformed from a diplomatic meeting into a trade show. But maybe there is now so much money and interest and public attention directed at the climate problem that any major gathering about it will take on shades of the commercial. There are lots of rich people with huge amounts of money who want to help do something about climate change. At the same time, the United States government is looking like less and less of a long-term reliable partner on climate research. Sooner or later, someone is going to try to do more serious geoengineering than releasing a few balloons in Mexico. Scientists have started preparing for that day. Is that smart? I don’t know. But it seems like a better strategy than feigned ignorance about where we’re headed.

Editor’s note: This story originally misidentified the name of the person who conducted geoengineering experiments in Mexico. We regret the error.

Instead of rocket fuel, they’re burning biomass.

Arbor Energy might have the flashiest origin story in cleantech.

After the company’s CEO, Brad Hartwig, left SpaceX in 2018, he attempted to craft the ideal resume for a future astronaut, his dream career. He joined the California Air National Guard, worked as a test pilot at the now-defunct electric aviation startup Kitty Hawk, and participated in volunteer search and rescue missions in the Bay Area, which gave him a front-row seat to the devastating effects of wildfires in Northern California.

That experience changed everything. “I decided I actually really like planet Earth,” Hartwig told me, “and I wanted to focus my career instead on preserving it, rather than trying to leave it.” So he rallied a bunch of his former rocket engineer colleagues to repurpose technology they pioneered at SpaceX to build a biomass-fueled, carbon negative power source that’s supposedly about ten times smaller, twice as efficient, and eventually, one-third the cost of the industry standard for this type of plant.

Take that, all you founders humble-bragging about starting in a dingy garage.

“It’s not new science, per se,” Hartwig told me. The goal of this type of tech, called bioenergy with carbon capture and storage, is to combine biomass-based energy generation with carbon dioxide removal to achieve net negative emissions. Sounds like a dream, but actually producing power or heat from this process has so far proven too expensive to really make sense. There are only a few so-called BECCS facilities operating in the U.S. today, and they’re all just ethanol fuel refineries with carbon capture and storage technology tacked on.

But the advances in 3D printing and computer modeling that allowed the SpaceX team to build an increasingly simple and cheap rocket engine have allowed Arbor to move quickly into this new market, Hartwig explained. “A lot of the technology that we had really pioneered over the last decade — in reactor design, combustion devices, turbo machinery, all for rocket propulsion — all that technology has really quite immediate application in this space of biomass conversion and power generation.”

Arbor’s method is poised to be a whole lot sleeker and cheaper than the BECCS plants of today, enabling both more carbon sequestration and actual electricity production, all by utilizing what Hartwig fondly refers to as a “vegetarian rocket engine.” Because there’s no air in space, astronauts have to bring pure oxygen onboard, which the rocket engines use to burn fuel and propel themselves into the stratosphere and beyond. Arbor simply subs out the rocket fuel for biomass. When that biomass is combusted with pure oxygen, the resulting exhaust consists of just CO2 and water. As the exhaust cools, the water condenses out, and what’s left is a stream of pure carbon dioxide that’s ready to be injected deep underground for permanent storage. All of the energy required to operate Arbor’s system is generated by the biomass combustion itself.

“Arbor is the first to bring forward a technology that can provide clean baseload energy in a very compact form,” Clea Kolster, a partner and Head of Science at Lowercarbon Capital told me. Lowercarbon is an investor in Arbor, alongside other climate tech-focused venture capital firms including Gigascale Capital and Voyager Ventures, but the company has not yet disclosed how much it’s raised.

Last month, Arbor signed a deal with Microsoft to deliver 25,000 tons of permanent carbon dioxide removal to the tech giant starting in 2027, when the startup’s first commercial project is expected to come online. As a part of the deal, Arbor will also supply 5 megawatts of clean electricity, enough to power about 4,000 U.S. homes. And just a few days ago, the Department of Energy announced that Arbor is one of 11 projects to receive a combined total of $58.5 million to help develop the domestic carbon removal industry.

Arbor’s current plan is to source biomass from forestry waste, much of which is generated by forest thinning operations intended to prevent destructive wildfires. Hartwig told me that for every ton of organic waste, Arbor can produce about one megawatt-hour of electricity, which is in line with current efficiency standards, plus about 1.8 tons of carbon removal. “We look at being as efficient, if not a little more efficient than a traditional bioenergy power plant that does not have carbon capture on it,” he explained.

The company’s carbon removal price targets are also extremely competitive, in the $50 to $100 per ton range, Hartwig said. Compare that to something like direct air capture, which today exceeds $600 per ton, or enhanced rock weathering, which is usually upwards of $300 per ton. “The power and carbon removal they can offer comes at prices that meet nearly unlimited demand,” Mike Schroepfer, the founder of Gigascale Capital and former CTO of Meta, told me via email. Arbor benefits from the fact that the electricity it produces and sells can help offset the cost of the carbon removal, and vice versa. So if the company succeeds in hitting its cost and efficiency targets, Hartwig said, this “quickly becomes a case for, why wouldn’t you just deploy these everywhere?”

Initial customers will likely be (no surprise here) the Microsofts, Googles and Metas of the world — hyperscalers with growing data center needs and ambitious emissions targets. “What Arbor unlocks is basically the ability for hyperscalers to stop needing to sacrifice their net zero goals for AI,” Kolster told me. And instead of languishing in the interminable grid interconnection queue, Hartwig said that providing power directly to customers could ensure rapid, early deployment. “We see it as being quicker to power behind-the-meter applications, because you don’t have to go through the process of connecting to the grid,” he told me. Long-term though, he said grid connection will be vital, since Arbor can provide baseload power whereas intermittent renewables cannot.

All of this could serve as a much cheaper alternative to, say, re-opening shuttered nuclear facilities, as Microsoft also recently committed to doing at Three Mile Island. “It’s great, we should be doing that,” Kolster said of this nuclear deal, “but there’s actually a limited pool of options to do that, and unfortunately, there is still community pushback.”

Currently, Arbor is working to build out its pilot plant in San Bernardino, California, which Hartwig told me will turn on this December. And by 2030, the company plans to have its first commercial plant operating at scale, generating 100 megawatts of electricity while removing nearly 2 megatons of CO2 every year. “To put it in perspective: In 2023, the U.S. added roughly 9 gigawatts of gas power to the grid, which generates 18 to 23 megatons of CO2 a year,” Schroepfer wrote to me. So having just one Arbor facility removing 2 megatons would make a real dent. The first plant will be located in Louisiana, where Arbor will also be working with an as-yet-unnamed partner to do the carbon storage.
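As a rough consistency check on these figures, the sketch below scales the per-ton numbers Hartwig quoted (about one megawatt-hour and about 1.8 tons of removal per ton of waste) up to a 100-megawatt plant. The capacity factor is an assumption of mine, not a number from Arbor, and the function and variable names are purely illustrative.

```python
HOURS_PER_YEAR = 8760


def annual_plant_figures(power_mw, capacity_factor=1.0,
                         mwh_per_ton_biomass=1.0,
                         co2_tons_per_ton_biomass=1.8):
    """Return (biomass tons per year, CO2 tons removed per year).

    capacity_factor is a hypothetical input (1.0 means running all year);
    the per-ton defaults are the approximate figures quoted by Hartwig.
    """
    energy_mwh = power_mw * HOURS_PER_YEAR * capacity_factor
    biomass_tons = energy_mwh / mwh_per_ton_biomass
    co2_tons = biomass_tons * co2_tons_per_ton_biomass
    return biomass_tons, co2_tons


biomass, co2 = annual_plant_figures(100)
print(f"Biomass needed: {biomass / 1e6:.2f} million tons per year")
print(f"CO2 removed:    {co2 / 1e6:.2f} megatons per year")
```

Running around the clock, these inputs give roughly 1.6 megatons of removal a year, the same order of magnitude as the "nearly 2 megatons" target. The gap may simply reflect rounding in the quoted per-ton figures or different assumptions for the commercial plant.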

The company’s carbon credits will be verified with the credit certification platform Isometric, which is also backed by Lowercarbon and thought to have the most stringent standards in the industry. Hartwig told me that Arbor worked hand-in-hand with Isometric to develop the protocol for “biogenic carbon capture and storage,” as the company is the first Isometric-approved supplier to use this standard.

But Hartwig also said that government support hasn’t yet caught up to the tech’s potential. While the Inflation Reduction Act provides direct air capture companies with $180 per ton of carbon dioxide removed, technology such as Arbor’s only qualifies for $85 per ton. It’s not nothing — more than the zero dollars enhanced rock weathering companies such as Lithos or bio-oil sequestration companies such as Charm are getting. “But at the same time, we’re treated the same as if we’re sequestering CO2 emissions from a natural gas plant or a coal plant,” Hartwig told me, as opposed to getting paid for actual CO2 removal.

“I think we are definitely going to need government procurement or involvement to actually hit one, five, 10 gigatons per year of carbon removal,” Hartwig said. Globally, scientists estimate that we’ll need up to 10 gigatons of annual CO2 removal by 2050 in order to limit global warming to 1.5 degrees Celsius. “Even at $100 per ton, 10 gigatons of carbon removal is still a pretty hefty price tag,” Hartwig told me. A $1 trillion price tag, to be exact. “We definitely need more players than just Microsoft.”
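The arithmetic behind that price tag is easy to reproduce. The sketch below assumes a single flat price per ton (a simplification; real prices would vary by method and over time) and uses Arbor's $50 to $100 target range for the three removal volumes Hartwig mentioned. The function name is illustrative.

```python
def annual_removal_cost_usd(gigatons, usd_per_ton):
    """Yearly cost of removing `gigatons` of CO2 at a flat price per ton."""
    tons = gigatons * 1e9  # 1 gigaton = 1 billion tons
    return tons * usd_per_ton


for gt in (1, 5, 10):
    low = annual_removal_cost_usd(gt, 50)
    high = annual_removal_cost_usd(gt, 100)
    print(f"{gt:>2} Gt/yr: ${low / 1e9:,.0f}B to ${high / 1e9:,.0f}B per year")
```

At the top of the range, 10 gigatons at $100 per ton comes to $1,000 billion, which is the $1 trillion figure above.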

New research out today shows a 10-fold increase in smoke mortality related to climate change from the 1960s to the 2010s.

If you are one of the more than 2 billion people on Earth who have inhaled wildfire smoke, then you know firsthand that it is nasty stuff. It makes your eyes sting and your throat sore and raw; breathe in smoke for long enough, and you might get a headache or start to wheeze. Maybe you’ll have an asthma attack and end up in the emergency room. Or maybe, in the days or weeks afterward, you’ll suffer from a stroke or heart attack that you wouldn’t have had otherwise.

Researchers are increasingly convinced that the tiny, inhalable particulate matter in wildfire smoke, known as PM2.5, contributes to thousands of excess deaths annually in the United States alone. But is it fair to link those deaths directly to climate change?

A new study published Monday in Nature Climate Change suggests that for a growing number of cases, the answer should be yes. Chae Yeon Park, a climate risk modeling researcher at Japan’s National Institute for Environmental Studies, looked with her colleagues at three fire-vegetation models to understand how hazardous emissions changed from 1960 to 2019, compared to a hypothetical control model that excluded historical climate change data. They found that while fewer than 669 deaths in the 1960s could be attributed to climate change globally, that number ballooned to 12,566 in the 2010s — roughly a 20-fold increase. The proportion of all global PM2.5 deaths attributable to climate change jumped 10-fold over the same period, from 1.2% in the 1960s to 12.8% in the 2010s.
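The two "fold" figures measure different things, and a quick calculation makes the distinction concrete. This is only a check on the numbers quoted above, not the study's method; because the 1960s count is given as "fewer than 669," the first ratio is best read as a lower bound.

```python
def fold_increase(before, after):
    """How many times larger `after` is than `before`."""
    return after / before


# Absolute deaths attributed to climate change (1960s vs. 2010s)
deaths_fold = fold_increase(669, 12_566)

# Share of all PM2.5 deaths attributed to climate change, in percent
share_fold = fold_increase(1.2, 12.8)

print(f"Deaths: about {deaths_fold:.1f}x")
print(f"Share:  about {share_fold:.1f}x")
```

The death count rises by a factor of about 19, which rounds to the "roughly 20-fold" in the text, while the share of deaths rises by a factor of about 11.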

“It’s a timely and meaningful study that informs the public and the government about the dangers of wildfire smoke and how climate change is contributing to that,” Yiqun Ma, who researches the intersection of climate change, air pollution, and human health at the Yale School of Medicine, and who was not involved in the Nature study, told me.

The study found the highest climate change-attributable fire mortality values in South America, Australia, and Europe, where increases in heat and decreases in humidity were also the greatest. In the southern hemisphere of South America, for example, the authors wrote that fire mortalities attributable to climate change increased from a model average of 35% to 71% between the 1960s and 2010s, “coinciding with decreased relative humidity,” which dries out fire fuels. For the same reason, an increase in relative humidity lowered fire mortality in other regions, such as South Asia. North America exhibited a less dramatic leap in climate-related smoke mortalities, with climate change’s contribution around 3.6% in the 1960s, “with a notable rise in the 2010s” to 18.8%, Park told me in an email.

While that’s alarming all on its own, Ma told me there was a possibility that Park’s findings might actually be too conservative. “They assume PM2.5 from wildfire sources and from other sources” — like from cars or power plants — “have the same toxicity,” she explained. “But in fact, in recent studies, people have found PM2.5 from fire sources can be more toxic than those from an urban background.” Another reason Ma suspected the study’s numbers might be an underestimate was because the researchers focused on only six diseases that have known links to PM2.5 exposure: chronic obstructive pulmonary disease, lung cancer, coronary heart disease, type 2 diabetes, stroke, and lower respiratory infection. “According to our previous findings [at the Yale School of Medicine], other diseases can also be influenced by wildfire smoke, such as mental disorders, depression, and anxiety, and they did not consider that part,” she told me.

Minghao Qiu, an assistant professor at Stony Brook University and one of the country’s leading researchers on wildfire smoke exposure and climate change, generally agreed with Park’s findings, but cautioned that there is “a lot of uncertainty in the underlying numbers” in part because, intrinsically, wildfire smoke exposure is such a complicated thing to try to put firm numbers to. “It’s so difficult to model how climate influences wildfire because wildfire is such an idiosyncratic process and it’s so random,” he told me, adding, “In general, models are not great in terms of capturing wildfire.”

Despite their few reservations, both Qiu and Ma emphasized the importance of studies like Park’s. “There are no really good solutions” to reduce wildfire PM2.5 exposure. You can’t just “put a filter on a stack” as you (sort of) can with power plant emissions, Qiu pointed out.

Even prescribed fires, often touted as an important wildfire mitigation technique, still produce smoke. Park’s team acknowledged that a whole suite of options would be needed to minimize future wildfire deaths, ranging from fire-resilient forest and urban planning to PM2.5 treatment advances in hospitals. And, of course, there is addressing the root cause of the increased mortality to begin with: our warming climate.

“To respond to these long-term changes,” Park told me, “it is crucial to gradually modify our system.”

On the COP16 biodiversity summit, Big Oil’s big plan, and sea level rise

Current conditions: Record rainfall triggered flooding in Roswell, New Mexico, that killed at least two people • Storm Ashley unleashed 80 mph winds across parts of the U.K. • A wildfire that broke out near Oakland, California, on Friday is now 85% contained.

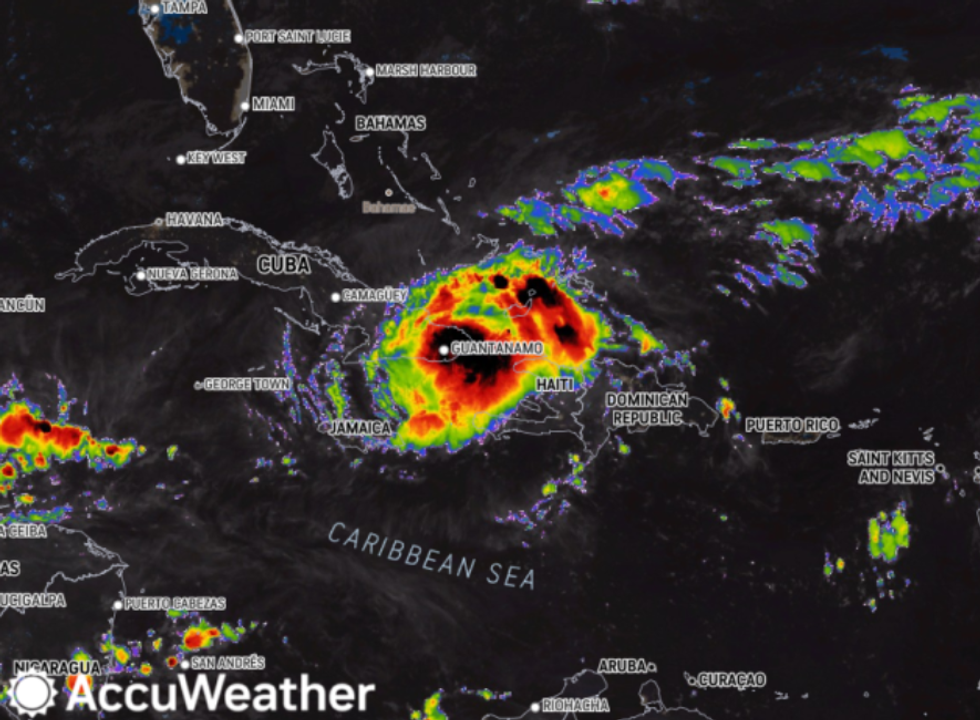

Forecasters hadn’t expected Hurricane Oscar to develop into a hurricane at all, let alone in just 12 hours. But it did. The Category 1 storm made landfall in Cuba on Sunday, hours after passing over the Bahamas, bringing intense rain and strong winds. Up to a foot of rainfall was expected. Oscar struck while Cuba was struggling to recover from a large blackout that has left millions without power for four days. A second system, Tropical Storm Nadine, made landfall in Belize on Saturday with 60 mph winds and then quickly weakened. Both Oscar and Nadine developed in the Atlantic on the same day.

The COP16 biodiversity summit starts today in Cali, Colombia. Diplomats from 190 countries will try to come up with a plan to halt global biodiversity loss, aiming to protect 30% of land and sea areas and restore 30% of degraded ecosystems by 2030. Discussions will revolve around how to monitor nature degradation, hold countries accountable for their protection pledges, and pay for biodiversity efforts. There will also be a big push to get many more countries to publish national biodiversity strategies. “This COP is a test of how serious countries are about upholding their international commitments to stop the rapid loss of biodiversity,” said Crystal Davis, Global Director of Food, Land, and Water at the World Resources Institute. “The world has no shot at doing so without richer countries providing more financial support to developing countries — which contain most of the world’s biodiversity.”

A prominent group of oil and gas producers has developed a plan to roll back environmental rules put in place by President Biden, The Washington Post reported. The paper got its hands on confidential documents from the American Exploration and Production Council (AXPC), which represents some 30 producers. The documents include draft executive orders promoting fossil fuel production for a newly-elected President Trump to sign if he takes the White House in November, as well as a roadmap for dismantling many policies aimed at getting oil and gas producers to disclose and curb emissions. AXPC’s members, including ExxonMobil, ConocoPhillips, and Hess, account for about half of the oil and gas produced in the U.S., the Post reported.

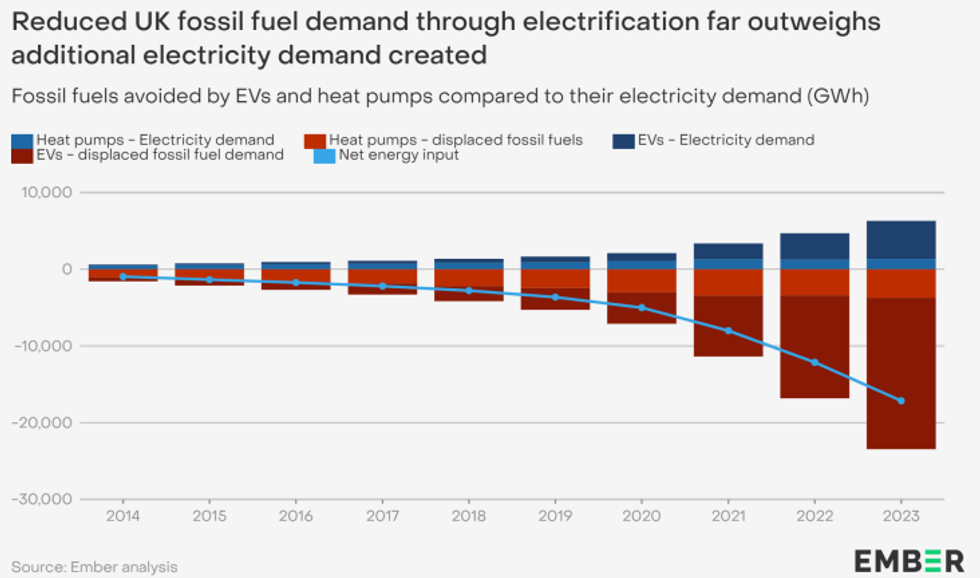

A new report from the energy think tank Ember looks at how the uptake of electric vehicles and heat pumps in the U.K. is affecting oil and gas consumption. It found that last year the country had 1.5 million EVs on the road and 430,000 heat pumps in homes, and the reduction in fossil fuel use due to the growth of these technologies was equivalent to 14 million barrels of oil, or about what the U.K. imports over a two-week span. This reduction effect will be even stronger as more and more EVs and heat pumps are powered by clean energy. The report also found that even though power demand is expected to rise, efficiency gains from electrification and decarbonization will make up for this, leading to an overall decline in energy use and fossil fuel consumption.

The world’s sea levels are projected to rise by more than 6 inches on average over the next 30 years if current trends continue, according to a new study published in the journal Nature. “Such rates would represent an evolving challenge for adaptation efforts,” the authors wrote. By examining satellite data, the researchers found that sea levels have risen by about 4 inches since 1993, and that they’re rising faster now than they were then. In 1993 the seas were rising by about 0.08 inches per year, and last year they were rising at 0.17 inches per year. These are averages, of course, and some areas are seeing much more extreme changes. For example, areas around Miami, Florida, have already seen sea levels rise by 6 inches over the last 31 years.
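The rates in this paragraph can be cross-checked with a little arithmetic. The sketch below is not the study's method: it assumes the rate of rise climbed in a straight line between the two quoted values over a 30-year span, and that it keeps accelerating at the same pace for the next 30 years. The names are illustrative.

```python
def cumulative_rise(rate_start, rate_end, years):
    """Total rise if the yearly rate changes linearly (trapezoid area)."""
    return 0.5 * (rate_start + rate_end) * years


YEARS = 30                       # assumed span between the two quoted rates
rate_1993, rate_now = 0.08, 0.17  # inches per year, as quoted

# Assumed constant acceleration, in inches per year per year
accel = (rate_now - rate_1993) / YEARS

past = cumulative_rise(rate_1993, rate_now, YEARS)
rate_future = rate_now + accel * YEARS
future = cumulative_rise(rate_now, rate_future, YEARS)

print(f"Implied rise since 1993:      {past:.2f} inches")
print(f"Projected rise, next 30 yrs:  {future:.2f} inches")
```

Under these assumptions the rise since 1993 comes to about 3.75 inches and the next 30 years add about 6.45 inches, which is consistent with the study's projection of more than 6 inches.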

“As the climate crisis grows more urgent, restoring faith in government will be more important than ever.” –Paul Waldman writing for Heatmap about the profound implications of America becoming a low-trust society.