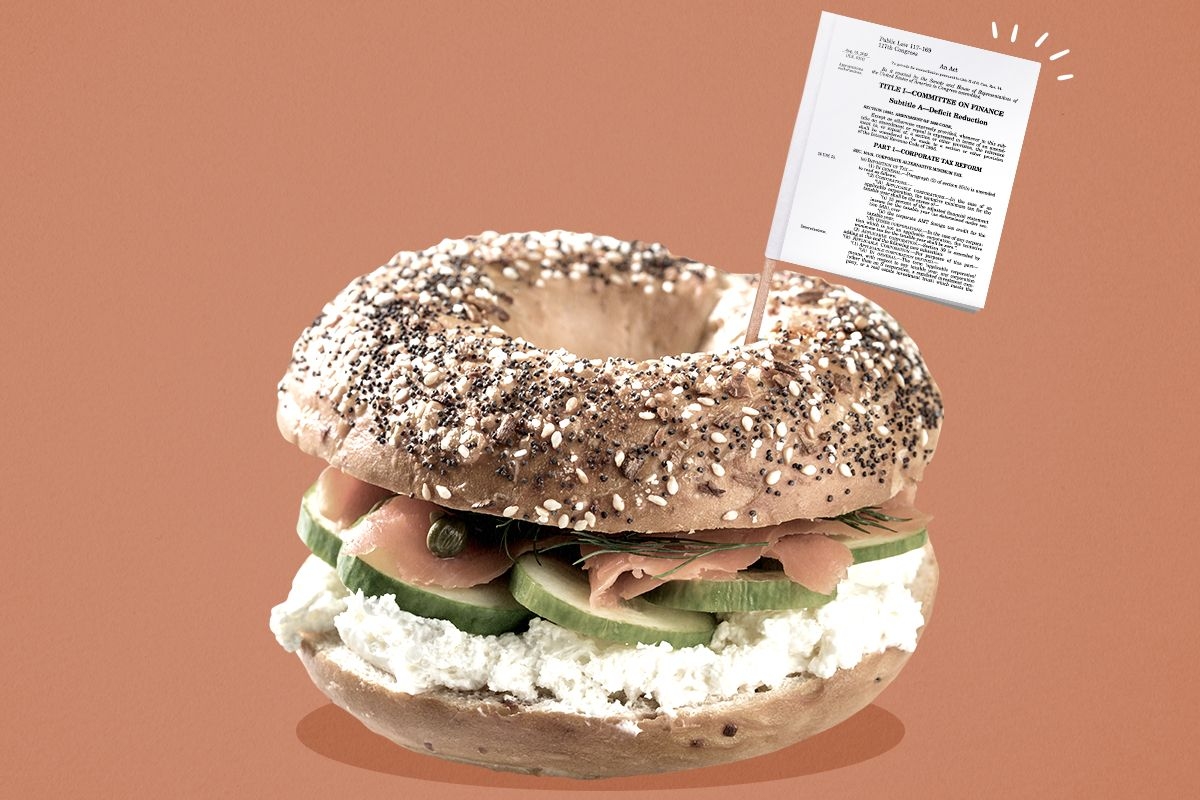

In defense of “everything bagel” policymaking.

Writers have likely spilled more ink on the word “abundance” in the past couple months than at any other point in the word’s history.

Beneath the hubbub, fed by Ezra Klein and Derek Thompson’s bestselling new book, lies a pressing question: What would it take to build things faster? Few climate advocates would deny the salience of the question, given the incontrovertible need to fix the sluggish pace of many clean energy projects.

A critical question demands an actionable answer. To date, many takes on various sides of the debate have focused more on high-level narrative than precise policy prescriptions. If we zoom in to look at the actual sources of delay in clean energy projects, what sorts of solutions would we come up with? What would a data-backed agenda for clean energy abundance look like?

The most glaring threat to clean energy deployment is, of course, the Republican Party’s plan to gut the Inflation Reduction Act. But “abundance” proponents posit that Democrats have imposed their own hurdles, in the form of well-intentioned policies that get in the way of government-backed building projects. According to some broad-brush recommendations, Democrats should adopt an abundance agenda focused on rolling back such policies.

But the reality for clean energy is more nuanced. At least as often, expediting clean energy projects will require more, not less, government intervention. So too will the task of ensuring those projects benefit workers and communities.

To craft a grounded agenda for clean energy abundance, we can start by taking stock of successes and gaps in implementing the IRA. The law’s core strategy was to unite climate, jobs, and justice goals. The IRA aims to use incentives to channel a wave of clean energy investments towards good union jobs and communities that have endured decades of divestment.

Klein and Thompson are wary that such “everything bagel” strategies try to do too much. Other “abundance” advocates explicitly support sidelining the IRA’s labor objectives to expedite clean energy buildout.

But here’s the thing about everything bagels: They taste good.

They taste good because they combine ingredients that go well together. The question — whether for bagels or policies — is, are we using congruent ingredients?

The data suggests that clean energy growth, union jobs, and equitable investments — like garlic, onion, and sesame seeds — can indeed pair well together. While we have a long way to go, early indicators show significant post-IRA progress on all three fronts: a nearly 100-gigawatt boom in clean energy installations, an historic high in clean energy union density, and outsized clean investments flowing to fossil fuel communities. If we can design policy to yield such a win-win-win, why would we choose otherwise?

Klein and Thompson are of course right that to realize the potential of the IRA, we must reduce the long lag time in building clean energy projects. That lag time does not stem from incentives for clean energy companies to provide quality jobs, negotiate Community Benefits Agreements, or invest in low-income communities. Such incentives did not deter clean energy companies from applying for IRA funding in droves. Programs that included all such incentives were typically oversubscribed, with companies applying for up to 10 times the amount of available funding.

If labor and equity incentives are not holding up clean energy deployment, what is? And what are the remedies?

Some of the biggest delays point not to an excess of policymaking — the concern of many “abundance” proponents — but an absence. Such gaps call for more market-shaping policies to expedite the clean energy transition.

Take, for example, the years-long queues for clean energy projects to connect to the electrical grid, which developers rank as one of the largest sources of delay. That wait stems from a piecemeal approach to transmission buildout — the result not of overregulation by progressive lawmakers, but rather the opposite: a hands-off mode of governance that has created vast inefficiencies. For years, grid operators have built transmission lines not according to a strategic plan, but in response to the requests of individual projects to connect to the grid. This reactive, haphazard approach requires a laborious battery of studies to determine the incremental transmission upgrades (and the associated costs) needed to connect each project. As a result, project developers face high cost uncertainty and a nearly five-year median wait time to finish the process, contributing to the withdrawal of about three of every four proposed projects.

The solution, according to clean energy developers, buyers, and analysts alike, is to fill the regulatory void that has enabled such a fragmentary system. Transmission experts have called for rules that require grid operators to proactively plan new transmission lines in anticipation of new clean energy generation and then charge a preestablished fee for projects to connect, yielding more strategic grid expansion, greater cost certainty for developers, fewer studies, and reduced wait times to connect to the grid. Last year, the Federal Energy Regulatory Commission took a step in this direction by requiring grid operators to adopt regional transmission planning. Many energy analysts applauded the move and highlighted the need for additional policies to expedite transmission buildout.

Another source of delay that underscores policy gaps is the 137-week lag time to obtain a large power transformer, due to supply chain shortages. The United States imports four of every five large power transformers used on our electric grid. Amid the post-pandemic snarling of global supply chains, such high import dependency has created another bottleneck for building out the new transmission lines that clean energy projects demand. To stimulate domestic transformer production, the National Infrastructure Advisory Council — including representatives from major utilities — has proposed that the federal government establish new transformer manufacturing investments and create a public stockpiling system that stabilizes demand. That is, a clean energy abundance agenda also requires new industrial policies.

While such clean energy delays call for additional policymaking, “abundance” advocates are correct that other delays call for ending problematic policies. Rising local restrictions on clean energy development, for example, pose a major hurdle. However, the map of those restrictions, as tracked in an authoritative Columbia University report, does not support the notion that they stem primarily from Democrats’ penchant for overregulation. Of the 11 states with more than 10 such restrictions, six are red, three are purple, and two are blue — New York and Texas, Virginia and Kansas, Maine and Indiana, etc. To take on such restrictions, we shouldn’t let concern with progressive wish lists eclipse a focused challenge to old-fashioned, transpartisan NIMBYism.

“Abundance” proponents also focus their ire on permitting processes like those required by the National Environmental Policy Act, which the Supreme Court curtailed last week. Permitting needs mending, but with a chisel, not a Musk-esque chainsaw. The Biden administration produced a chisel last year: a NEPA reform to expedite clean energy projects and support environmental justice. In February, the Trump administration tossed out that reform and nearly five decades of NEPA rules without offering a replacement — a chainsaw maneuver that has created more, not less, uncertainty for project developers. When the wreckage of this administration ends, we’ll need to fill the void with targeted permitting policies that streamline clean energy while protecting communities.

Finally, a clean energy abundance agenda should also welcome pro-worker, pro-equity incentives like those in the IRA “everything bagel.” Despite claims to the contrary, such policies can help to overcome additional sources of delay and facilitate buildout.

For example, Community Benefits Agreements, which IRA programs encouraged, offer a distinct, pro-building advantage: a way to avoid the community opposition that has become a top-tier reason for delays and cancellations of wind and solar projects. CBAs give community and labor groups a tool to secure locally defined economic, health, and environmental benefits from clean energy projects. For clean energy firms, they offer an opportunity to obtain explicit project support from community organizations. Three out of four wind and solar developers agree that increased community engagement reduces project cancellations, and more than 80% see it as at least somewhat “feasible” to offer benefits via CBAs. Indeed, developers and communities are increasingly using CBAs, from a wind farm off the coast of Rhode Island to a solar park in California’s Central Valley, to deliver tangible benefits and completed projects, the ingredients of abundance.

A similar win-win can come from incentives for clean energy companies to pay construction workers decent wages, which the IRA included. Most peer-reviewed studies find that the impact of such standards on infrastructure construction costs is approximately zero. By contrast, wage standards can help to address a key constraint on clean energy buildout: companies’ struggle to recruit a skilled and stable workforce in a tight labor market. More than 80% of solar firms, for example, report difficulties in finding qualified workers. Wage standards offer a proven solution, helping companies attract and retain the workforce needed for on-time project completion.

In addition to labor standards and support for CBAs, a clean energy abundance agenda also should expand on the IRA’s incentives to invest in low-income communities. Such policies spur clean energy deployment in neighborhoods the market would otherwise deem unprofitable. Indeed, since enactment of the IRA, 75% of announced clean energy investments have been in low-income counties. That buildout is a deliberate outcome of the “everything bagel” approach. If we want clean energy abundance for all, not just the wealthy, we need to wield — not withdraw — such incentives.

Crafting an agenda for clean energy abundance requires precision, not abstraction. We need to add industrial policies that offer a foundation for clean energy growth. We need to end parochial policies that deter buildout on behalf of private interests. And we need to build on labor and equity policies that enable workers and communities to reap material rewards from clean energy expansion. Differentiating between those needs will be essential for Democrats to build a clean energy plan that actually delivers abundance.

The maker of smart panels is tapping into unused grid capacity to help power the AI boom.

The race for artificial intelligence is a race for electricity. Data centers are scrambling to find enough power to run their servers, and when they do, they often face long waits while utilities upgrade the grid to accommodate the added demand.

In the eyes of Arch Rao, the CEO and founder of the smart electrical panel company Span, however, there is a glut of electricity waiting to be exploited. That’s because the electric grid is already oversized, designed to satisfy spikes in demand that occur for just a few hours each year. By shifting when and where different users consume power, it’s possible to squeeze far more juice out of the existing system, faster, and for a lot less money, than it takes to make it bigger.

This is what Span’s smart panel does — it manages the energy drawn by household appliances to help homeowners integrate electric vehicle chargers and heat pumps without triggering the need for electrical upgrades.

Now the age of AI has opened up new opportunities for the company. Last month, Span announced the launch of XFRA, a device that works with Span’s smart panel to power AI applications by tapping into the unused electrical capacity available to homes and businesses.

The company refers to XFRA as a “distributed data center.” It’s sort of like if you chopped up a full-scale data center into washing machine-sized boxes and plugged them into people’s homes; Span’s smart panel then acts as a conductor, orchestrating XFRA’s energy consumption to take advantage of unused power capacity without stepping on the home’s other energy needs. In exchange for hosting one of these XFRA “nodes,” Span will offer homeowners and tenants deeply discounted, if not free, electricity and internet service.

The idea sounded audacious, verging on fantastical, until I watched the economics play out in real time at one of Span’s labs in a warehouse south of San Francisco. Ryan Harris, the company’s chief revenue officer, showed me an XFRA prototype — a metal box about the size of a freezer chest stuffed with Dell servers and Nvidia liquid-cooled GPUs. Span was renting out the processing power from this node and six others to AI users through an online marketplace. On a computer screen next to the unit, a dashboard showed the revenue flowing in from the fleet — $500 over the past 24 hours, and more than $21,000 in the previous three weeks. The numbers continued to tick up as I stood there.

When I first planned to write about Span, XFRA was still a secret. I reached out because its smart panel business, which debuted in 2019, seemed to suddenly take off.

In February, Span announced that PG&E, the largest utility in California, would be installing its devices in thousands of homes beginning this summer. Then in March, the company revealed a partnership with Eaton, one of the biggest legacy electrical equipment companies in the world. Eaton is investing $75 million in Span and will begin selling co-branded electrical panels to its extensive network of distributors, installers, and homebuilders later this year. With the launch of XFRA, Span is becoming something like a utility itself. To date, the company has raised more than $400 million, and will soon close a nearly $200 million Series C.

Of course it will take more than smart electrical panels to serve data centers’ soaring power needs. In this era of unprecedented energy demand growth, building a bigger electrical system is unavoidable — but the size of the investment, and the cost impacts on everyday electricity customers, are malleable. Several recent studies have shown just how big the opportunity is to get more energy out of our existing infrastructure if the entire system can become a bit more flexible.

Last year, Duke University researchers found that on average, the U.S. is utilizing only about half of our electricity generation capacity. Nationwide, they estimated, the grid could accommodate at least 76 gigawatts of new load — close to the total generation capacity installed in California — without having to upgrade the electrical system or build new power plants, so long as those new end-users were somewhat flexible with when and how much electricity they used.

More recently, in a report commissioned by a coalition called Utilize, of which Span is a member, the Brattle Group found that milking just 10% more from our existing grid infrastructure on an annual basis could reduce electricity rates for all end users by 3.4%. Utilities can sell more energy, faster, and spread the fixed costs of running the system across more customers.

What all this meant in practice did not fully click for me until I saw a demonstration of Span’s panel at the lab a few weeks ago. Harris, the CRO, led me to a free-standing wall lined with household appliances, a stripped-down version of an all-electric home. A minisplit heat pump whirred while a high-speed electric vehicle charger was juicing up a Rivian parked on the warehouse floor. A TV screen displayed the amount of power going to each device, as measured by Span’s electric panel.

Together, the heat pump and charger were using about two-thirds of the electric capacity of this demonstration home, which was running on a 100-amp utility service connection. The charger alone was using 48 amps.

The owner of this theoretical home would typically not have been allowed to install such an energy-intensive EV charger without upgrading to 200-amp service. For safety reasons, electrical codes require that residential electrical systems have room for the rare scenario in which a home’s major appliances all run at once. Otherwise, the occupants might accidentally try to draw more power than their utility connection can deliver, overheat their wires, and start a fire. Connections of 100 amps are exceedingly common in homes designed to use gas or propane for cooking and heating, but once you replace those appliances with electric versions, or add an EV charger, you start to push the limit.

A service upgrade to 200 amps can take many months and cost several thousand dollars. The utility typically has to run new wiring to the house, and might even have to augment the grid infrastructure serving the neighborhood.

Span’s smart panel offers an alternative.

“Shall we turn on some load?” Harris said. An engineer on Span’s product team turned on the demo home’s electric water heater, and I watched as the chart on the screen adjusted. The water heater jumped from zero to 22 amps, while the EV charger’s amperage decreased from 48 to 33. When the engineer switched on the clothes dryer, drawing 24 amps, the EV charger’s amperage dropped further.

The electrical panel was tracking how much power was flowing to each of its circuits and throttling the EV charger in response. When the team dialed up the electric stove to heat a pot of water, the EV charger shut off altogether.

Next, Harris requested a boost to the “garage” sub-panel, simulating a hot tub or some power tools kicking on. Soon, the water heater shut off, too. “You have 50 gallons of hot water, so it’s not going to have any negative impact on the customer in that moment,” Harris told me. He showed me an alert that appeared on the Span phone app notifying the homeowner that the system was temporarily limiting power to the EV charger and water heater in order to power other devices.

Users can choose which appliances the system bumps first. While some devices, such as EV chargers, water heaters, and heat pumps, have the ability to be ramped up and down, others will simply shut off.
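The behavior in the demo can be sketched as a priority-ordered load-shedding loop. This is a minimal illustration, not Span’s actual algorithm: the `Load` class, the `allocate` function, the 19-amp heat pump figure, and the 74-amp budget are all assumptions, chosen so that the example reproduces the charger’s drop from 48 to 33 amps when the 22-amp water heater turns on.

```python
from dataclasses import dataclass


@dataclass
class Load:
    name: str
    demand_amps: float  # draw if unconstrained
    flexible: bool      # True: can be ramped down; False: can only be shut off
    shed_order: int     # lower number is curtailed first (user-chosen)


def allocate(loads, budget_amps):
    """Grant each load its demand, then curtail in shed order until the total fits."""
    granted = {load.name: load.demand_amps for load in loads}
    excess = sum(granted.values()) - budget_amps
    for load in sorted(loads, key=lambda item: item.shed_order):
        if excess <= 0:
            break
        # Flexible loads give up only what is needed; others lose everything.
        cut = min(excess, load.demand_amps) if load.flexible else load.demand_amps
        granted[load.name] -= cut
        excess -= cut
    return granted


# Hypothetical demo home: every figure except 48 and 22 amps is assumed.
home = [
    Load("ev_charger", 48, flexible=True, shed_order=1),
    Load("water_heater", 22, flexible=True, shed_order=2),
    Load("heat_pump", 19, flexible=True, shed_order=3),
]
result = allocate(home, budget_amps=74)
print(result)
```

Under these assumed numbers the charger is trimmed to 33 amps while the water heater and heat pump keep their full draw. A real panel would presumably also account for measurement lag, minimum charging currents, and code-mandated safety margins, none of which are modeled here.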

At $2,550 excluding labor for the smallest, most basic smart panel, and just over $4,000 for the biggest one, Span is more expensive than the average dumb panel, which can come in under $1,000. Depending on the home and the complexity of a service upgrade, however, it’s often cheaper to install Span than to move to 200 amps. It’s also almost certainly faster.

Span’s first generation product couldn’t do any of this. Initially, the company’s value proposition was just to give people more control over their energy usage. The original Span panel gave homeowners with batteries the ability to select which devices they wanted to power during an outage and ensure they didn’t accidentally lose charge on non-essentials. The company had to build an initial customer base and validate the technology in the real world, Rao told me, before it could earn the credibility (and the capital) to deploy the fully realized version of the product.

In 2023, Span debuted “PowerUp,” the software that makes what I witnessed at the lab possible. With PowerUp, Span’s smart panel went from being a cool gadget to a money-saver, helping homeowners skip utility service upgrades. The success of PowerUp opened the door for Span to engage with larger partners, starting with homebuilders.

“We had to demonstrate that we were safe and scalable in the home retrofit category to then get homebuilders — who are typically very, very cost sensitive, are not often at the tip of the spear in terms of technology adoption — to say, this is a proven technology, and it saves you money,” said Rao.

Residential developers face problems similar to homeowners’, but on a bigger scale. While 200-amp connections have become more standard over the past few decades, new electrical codes that require either fully electric or electric-ready construction are pushing the limits.

“Now the load calculations will put them at 300 or 400 amps of service per home,” Rao told me. “Multiply that by a community of 500 homes, and suddenly you’ve doubled the amount of interconnection you need to bring from the utility.”

This raises the cost of development, and it can also increase the wait time — potentially by years — to get hooked up to the grid. Again, Span offers an alternative. To date, nearly half of the top 20 homebuilders across the U.S. have used the company’s technology, Rao told me. More broadly, its electrical panels have been installed in tens of thousands of homes in all 50 states.
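Rao’s multiplication can be made concrete with a few lines of arithmetic. The sketch below simply sums nameplate service ratings for a 500-home community. The 240-volt figure is the standard U.S. residential service voltage, the function name is made up for illustration, and the calculation ignores the diversity factors a utility would apply in real planning, so the totals are an upper bound rather than an engineering estimate.

```python
HOMES = 500
VOLTS = 240  # typical U.S. split-phase residential service


def community_service_kva(amps_per_home, homes=HOMES, volts=VOLTS):
    """Sum of nameplate service capacity across a community, in kVA."""
    return amps_per_home * volts * homes / 1000


baseline = community_service_kva(200)      # conventional 200-amp homes
all_electric = community_service_kva(400)  # upper end of Rao's 300-400 amp range
ratio = all_electric / baseline
print(baseline, all_electric, ratio)
```

Going from 200 to 400 amps per home doubles the summed service capacity, which is the doubling Rao describes.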

I should note that Span is not the only solution on the market for homeowners or homebuilders to avoid service upgrades — the main alternative is just choosing appliances that don’t use so much power. There are water heaters, clothes dryers, and EV chargers on the market that run on lower amperage, and startups like Copper and Impulse Labs are making stoves with integrated batteries that enable them to do the same. There are also Span-adjacent technologies such as smart circuit splitters that let you plug two power-hungry devices, like an EV charger and a clothes dryer, into the same circuit, and the device will safely modulate power between the two.

“You can hack your way around both problems — one, of a panel upgrade, and two, a Span upgrade, which is also expensive — with cheaper solutions,” Brian Stewart, the co-founder of Electrify Now, a group that provides education and advocacy on home electrification, told me. “But it’s less elegant, let’s just say, than the Span solution.”

Though he started at the home level, Rao has always had his sights set on a much bigger customer: utilities. Several Span executives I spoke to referenced an “infamous” PowerPoint slide from the early days of the company with a bar chart that showed how the company would scale in three phases. First came “back-up,” referring to Span’s initial home battery management product. Next was “power-up,” the software that enabled electrification by avoiding service upgrades. The third was “fleet.”

The same safety principles that trigger service upgrades at individual homes also apply upstream at the neighborhood level. For example, the size of a neighborhood’s transformer, the equipment that changes the voltage of the electricity as it moves along the grid, depends on the combined amperage of the homes it serves. If all those homes are installing EV chargers or heat pumps or whatever else and starting to use more electricity, the utility will have to upgrade the transformer — a cost that gets spread across all of its customers. If a critical mass of the homes have Span panels, however, they can avoid this.

Partnering with major homebuilders earned Span “the right to sit at the table with utilities,” Rao told me, “and say, look, we’ve done this at the home level, at the community level. Imagine if you could do this at the grid level, where the benefit doesn’t just accrue to individual customers or home builders, it can accrue to all rate payers?”

I got a taste of what this looks like back at the lab, where Harris showed me Span’s “fleet capability.” There were actually three demonstration homes set up on the warehouse floor, and Harris showed me how a utility could coordinate a response across multiple Span panels to keep a neighborhood within its safe energy limits.

Imagine it’s a really hot day, and the utility is on the verge of having to institute rolling blackouts. Instead, it can implement what’s called a dynamic service rating event, sending a signal out to the Span panels served by a given transformer to reduce their electrical limit from 100 amps to 60, for example. Rather than the entire neighborhood losing power, a few homes would see their EV charging cut back or their thermostats go up by a few degrees. Of course, not everybody will want to give this kind of control to the utility; customers often cite concerns about comfort and convenience as reasons they are skeptical of these kinds of programs. When I asked Harris whether participating would require that Span customers opt in, he said it was more likely to be opt-out.
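A dynamic service rating event of the kind described here might look like the following sketch. The function name, the home names, the individual draws, and the 200-amp transformer limit are all invented for illustration; only the 100-amp and 60-amp limits come from the example above. The logic is deliberately naive: it applies one fixed reduced limit and does not check that the capped total actually fits under the transformer’s rating, which a real utility system would need to do.

```python
def dynamic_service_rating(draws_amps, transformer_limit_amps,
                           normal_limit=100, reduced_limit=60):
    """Return (per-home limit, capped draws) for homes on one transformer."""
    overloaded = sum(draws_amps.values()) > transformer_limit_amps
    limit = reduced_limit if overloaded else normal_limit
    capped = {home: min(draw, limit) for home, draw in draws_amps.items()}
    return limit, capped


# Hypothetical neighborhood on a hot afternoon.
draws = {"home_a": 85, "home_b": 40, "home_c": 70, "home_d": 35}
limit, capped = dynamic_service_rating(draws, transformer_limit_amps=200)
curtailed = [home for home in draws if capped[home] < draws[home]]
print(limit, capped, curtailed)
```

In this made-up case only the two homes drawing more than 60 amps are curtailed; the other two are unaffected, which is the contrast with a rolling blackout.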

Span has done several pilot projects testing this capability. Installing electrical panels is too complex for utilities to do en masse, though. So the company developed Span Edge, a smaller version of its panel that can be installed at a building’s electricity meter. It does all the same things the larger electrical panel does, without needing to serve as the home’s central nervous system. It still enables homeowners to avoid service upgrades by throttling EV chargers or whatever other devices are hooked up to it, but it’s much simpler to install.

This is the device that the California utility PG&E will begin deploying in homes later this summer. The company will offer Span Edge to homeowners who are installing appliances that might trigger an electrical upgrade, or are considering doing so in the future, through a program called PanelBoost. It’s entirely voluntary, and while participants will have to pay for installation, the panel itself comes gratis.

“This is the first time that there’s a large-scale direct purchase of Span equipment by a utility,” Alex Pratt, Span’s vice president of business development, told me. “This has long been the North Star for the company.”

Paul Doherty, the manager for clean energy and innovation communications at PG&E, told me the company saw Span Edge as a “win, win, win for PG&E, for our customers, and for the environment.” It enables customers to electrify their homes more quickly and affordably, and it lets PG&E sell more electrons without raising rates.

“We’re very bullish about the opportunity for this technology and the benefit that it will bring for the grid and for our customers here in California,” Doherty told me.

Rao sees XFRA as a natural evolution of Span’s basic premise. The company has found that 98% of its customers who have 200-amp service connections have about 80 amps available at any given time, Harris told me. Hosting an XFRA node enables homeowners to monetize that unused capacity.

To start, Span is prioritizing getting XFRA into newly built homes, where the developer handles customer acquisition and installing at scale is straightforward since every home is roughly the same. The company has partnered with the developer PulteGroup to roll out a 100-home pilot program for a total of over 1.2 megawatts of compute capacity. The partners have not yet specified where the pilot will be or whether there will be a single offtaker for the compute.
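As a rough plausibility check, the pilot’s figures can be compared with the spare capacity Harris cited. The sketch below rests on several assumptions not stated by the company: that “compute capacity” refers to electrical draw, that the load is spread evenly across the homes, that service is at 240 volts, and that power factor can be ignored.

```python
VOLTS = 240  # typical U.S. residential service voltage (assumption)

pilot_kw = 1200  # "over 1.2 megawatts" across the pilot, treated as a floor
homes = 100
kw_per_home = pilot_kw / homes              # at least this much per node
amps_per_home = kw_per_home * 1000 / VOLTS  # equivalent current at 240 V

spare_amps = 80  # headroom reported for most 200-amp customers
spare_kw = spare_amps * VOLTS / 1000

fits = amps_per_home <= spare_amps
print(kw_per_home, amps_per_home, spare_kw, fits)
```

On these assumptions each node would need roughly 12 kilowatts, or about 50 amps, which sits inside the 80 amps of headroom described above.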

In the longer term, Rao told me, XFRA could be the “unlock” that makes electrification more affordable for people. “There is a utopian end state in my mind where XFRA allows more of our customers to get free energy, free backup, and free internet,” he said.

First, the company will have to find out if anyone is actually willing to let XFRA into their home. During my final conversation with the CEO, after my lab visit, he showed me the infamous slide forecasting the company’s growth from “back-up to power-up to fleet.” The y-axis on the chart showed the number of homes per year the company could address at each stage. The bar for back-up systems landed at 5,000 per year; power-up came to nearly 100,000. Suffice it to say, Span hasn’t hit these numbers.

“Are you where you want to be today?” I asked him.

Of course, he wasn’t going to say no. “We have contracts in place for hundreds of thousands of homes already with utilities,” he said. “Right now our focus is on execution — delivering on that scale, as opposed to finding that scale. It’s a deployed product, it’s not a downloadable app, so it takes time to physically deploy hundreds of thousands of endpoints. So I think that scale is coming.”

After years of dithering, the world’s biggest automaker is finally in the game.

The hottest contest in the electric car industry right now may be the race for third place.

Thanks to Tesla’s longtime supremacy (at least in this country), its two mainstays — the Model Y and Model 3 — sit comfortably atop the monthly list of best-selling EVs. Movement in the No. 3 spot, then, has become a signal for success from the automakers attempting to go electric. The original Chevy Bolt once occupied this position thanks to its band of diehard fans. Last year, the brand’s affordable Equinox EV grabbed third. And then, earlier this year, an unexpected car took over that spot on the leaderboard: the Toyota bZ.

The surprise is not so much the car itself, but rather its maker. Over the years, we’ve called out Toyota numerous times for dragging its feet about electric cars. The world’s largest automaker took the hybrid mainstream and still produces the hydrogen-powered Mirai. Nevertheless, Toyota publicly cast doubt about the viability of fully electric cars on several occasions and let other legacy car companies take the lead. Its first true EV, the bZ4X, was a disappointment, with driving range and power figures that lagged behind the rest of the industry.

Suddenly, though, the Toyota narrative looks different. Working at its trademark deliberate pace, the auto giant is revealing a batch of new EVs this year, just as competitors Ford, GM, Honda, and Hyundai-Kia are pulling back on their electric lines (and writing off billions of dollars to tilt their companies back toward fossil fuels). There is the Toyota bZ, which Car and Driver called “quicker, nicer inside, and better at being an EV” than the bZ4X, its predecessor. There is the C-HR, a small crossover that had been gas-powered before it became fully electric this year. And there is the large Highlander SUV, a popular nameplate that’s about to become EV-only.

To see what’s changed with the cars themselves, I test-drove the C-HR last week. A decade ago, I’d taken its gas-powered predecessor on a road trip down Long Island and found it to be a fun but frustrating vehicle. Toyota went way over the top with the exterior styling back then to make the little car scream “youthful,” but under the hood was a woefully underpowered engine that took about 11 seconds to push the C-HR from 0 to 60 miles per hour. Now, thanks to the instant torque of electric motors, the new version finally has the zip to go with its looks: It’ll get to 60 in under five seconds, and feels plenty zoomy just driving around town.

Inside, the C-HR feels like an evolved Toyota that isn’t trying too hard to be a Tesla. The brand took the two-touchscreen approach, with a large one in the center console to handle main functions such as navigation, entertainment, and climate control, and a smaller one in front of the driver’s eyes where the traditional dashboard would be. There are still physical buttons on the wheel to manipulate music volume and cruise control, but climate controls are entirely digital.

The big touchscreen is a work in progress. It’s too crowded with information compared to a clean overlay like Tesla’s or Rivian’s, and the design of the navigation software had some profound flaws. (Whether you’re using the voice assistant or keyboard input to search for a destination, the system lags a troubling amount for a brand-new car. Maybe Toyota just expects you to use Apple CarPlay and ignore its built-in system.) Still, the interface is more iPhone-like and intuitive than what Hyundai and Kia are using in their EVs.

Here’s the real problem with the C-HR: Although it accomplishes the mission of feeling like a fun-to-drive Toyota that happens to be electric, it’s not terribly good at being an electric car. The Toyota lacks one-pedal driving, the delightful feature where the car slows itself as soon as you let off the accelerator, negating the need to move your foot between two pedals all the time. Nor does it have a front trunk, a.k.a. frunk, the fun bonus on EVs made possible by the absence of an engine. According to Toyota, the C-HR is so small that engineers simply didn’t have room for a frunk (or a glovebox, for that matter).

The C-HR’s NACS charging port makes it possible to use Tesla Superchargers, and its charging port location on the passenger’s side front should make it simple to reach them. But instead of sitting on the corner of the car, easily reachable by a plug right in front of the parked vehicle, the port is several feet back, just behind the front wheel. And its door opens toward the charger, so the cord has to reach over or under the door that’s in the way. I made it work at a Supercharger in greater San Diego, but only after several frustrating tries and with less than an inch of cord to spare.

Those are the complaints of a longtime EV driver, and they might not matter to some C-HR buyers. The deepest oversight is the C-HR’s nav, which, at least right now, doesn’t have compatible charging stations built into its route planning — a warning message will notify you if the chosen route requires recharging to reach the final destination, but the car won’t tell you where to go. This is a glaring omission for potential buyers who’ll be taking their first EV road trip. (Get PlugShare, folks.) Planned charging is effectively an industry standard — even Toyota’s legacy competitors like Chevy and Hyundai will choose appropriate fast-chargers and route you to them, even if their interface isn’t as seamless and satisfying as what’s in a Tesla or Rivian. At least that’s a problem that could be solved later via software update, though.

Because of these faults, it’s difficult to imagine someone choosing this as their second or third EV. But maybe that’s not the game at all. There is a legion of Toyota drivers out there, many of whom might think about buying their first electric car if their brand built one. Despite its flaws, the C-HR is that. It’s got enough range for city living and occasional road trips, enough power to be fun to drive, and a Toyota badge on the hood.

Whatever their quirks, the very existence of the C-HR and its electric stablemates is a testament to Toyota’s plan to play the long game with EVs rather than ebb and flow with every whipsaw turn in the American car market. And they’re here just in time. Amidst volatile oil prices because of the Iran war, drivers worldwide are more interested in going electric.

In the U.S., that interest has buoyed used EV sales — not new — because so few affordable options are on the market. Although C-HR starts near $38,000, Toyota has begun to offer discounts that would bring it in line with gas-powered crossovers that are $5,000 cheaper. Maybe that’ll be enough for the subcompact to join its bigger sibling, the bZ, on that list of best-sellers.

Current conditions: A raging brushfire in the suburbs north of Los Angeles has forced more than 23,000 Californians to evacuate • The Guyanese capital of Georgetown, newly awash in offshore oil money, is also set to be drenched by thunderstorms through next week • Temperatures in Washington, D.C., are nearing triple digits today.

A bipartisan budget deal to fund roads, railways, and bridges for the next five years would also slap a $130 per year fee on drivers registering electric vehicles, with a $35 fee for plug-in hybrids. Late Sunday, lawmakers on the House Transportation and Infrastructure Committee released the text of the 1,000-page bill. Roughly a sixth of the way through the legislation is a measure directing the Federal Highway Administration to impose the annual fees on battery-electric and plug-in hybrid vehicles — and to withhold federal funding from any state that fails to comply with the rule. If passed, the fees would take effect at the end of September 2027. The fees — which increase to $150 and $50, respectively, after a decade — are designed to reinforce the Highway Trust Fund, which has traditionally been financed through gasoline taxes. In a statement, Representative Sam Graves, a Missouri Republican and the committee’s chairman, said the legislation “ensures that electric vehicle owners begin paying their fair share for the use of our roads.” But Albert Gore, the executive director of the Zero Emission Transportation Association, called the proposal “simply a punitive tax that would disproportionately impact adopters of electric vehicles, with no meaningful impact on” maintaining the fund. “Drivers of gas-powered vehicles pay approximately $73 to $89 in federal gas tax each year,” Gore said. “The proposed fee would charge an unfair premium on EV drivers, at a time when all Americans are looking for ways to save money.”

The Department of Justice, meanwhile, is preparing to weigh in on whether Elon Musk’s artificial intelligence company, xAI, is operating an illegal gas-fired power plant to power its data center in Southaven, Mississippi. Last month, the NAACP and the Southern Environmental Law Center accused xAI of operating 27 gas turbines without pollution controls or Clean Air Act permits at the server farm, known as Colossus 2. Last week, the groups asked the federal court for a preliminary injunction to stop pollution from what E&E News described as “tractor-trailer-sized generators.” In response, the Justice Department cited President Donald Trump’s support for AI and said it was “evaluating possible intervention or amicus participation in this lawsuit.” It’s not the only agency riding in to aid Musk and his ilk. As I told you last week, the Environmental Protection Agency just proposed a new rule that would allow data centers and power plants to begin construction without air permits.

The Environmental Protection Agency has proposed two separate rules to delay and rescind drinking water limits on four “forever chemicals,” the class of cancer-causing compounds that spread in water and accumulate in the human body. The rules, as The Guardian noted, “must go through an approval process that can take several years, and almost certainly will be challenged in court.” Over the past decade, perfluoroalkyl and polyfluoroalkyl substances, known as PFAS, were discovered to be pervasive in the drinking water of some 176 million Americans. The chemicals — which are linked to kidney cancer, immune system suppression, and developmental delays in infants — are estimated to be in nearly 99% of Americans’ blood. In 2024, the Biden administration established limits on six substances, as Heatmap’s Jeva Lange reported at the time. But the Trump administration will now ax protections for four of the substances and provide companies with an extra two years to comply with rules on the other two. The move, The New York Times reported, has already “sparked fury within the Make America Healthy Again movement, a diverse group of anti-vaccine activists, wellness influencers and others who make up a key part” of Trump’s base.

India was once a forbidden prize for nuclear exporters. The world’s most populous nation, its metropoles choked by coal smog, operates two dozen commercial nuclear reactors — and wants more. But until earlier this year, the country was hamstrung by the haunting memory of Union Carbide’s 1984 accident at its Bhopal plant, where a leak killed thousands of Indians and the American chemical giant avoided any serious liability. To prevent a similar dynamic in the nuclear sector, New Delhi passed a law in 2010 that put developers on the hook for any accidents. The statute effectively banned American, European, or East Asian companies from attempting to build any reactors, lest they risk bankruptcy; only Russia’s state-owned nuclear company was willing to sell its wares on the subcontinent. In December, as I told you at the time, the Indian parliament passed legislation to reform the liability law and welcome more foreign developers into its market. Already, as I reported in a scoop for Heatmap last month, a Chicago-based fuel startup is making moves to sell its product in India.

Fast forward to this week: On Monday, a high-level delegation of U.S. industry officials flew to New Delhi to meet with Indian science minister Jitendra Singh and discuss “private investment opportunities” to export small modular reactors and other American nuclear technology, NucNet reported.

Ford Energy, the wholly owned battery storage business forged out of Ford Motor’s electric vehicle efforts, has landed its first big deal. On Monday, the company announced a five-year framework agreement with French utility giant EDF’s North American renewables division to design battery storage systems for the multinational. As part of the deal, EDF will buy up to 4 gigawatt-hours of battery blocks per year, totaling up to 20 gigawatt-hours by the end of the contract. The first deliveries are expected in 2028. Lisa Drake, Ford Energy’s president, said the deal “validates the market’s need for” a battery storage supplier “that combines industrial-scale manufacturing discipline with full lifecycle accountability.” In a statement, EDF said Ford’s “commitment to domestic manufacturing and its rigorous approach to traceability and lifecycle support align with the standards we hold across our portfolio.”

Last August, I told you that Anglo American’s deal to sell the U.S. giant Peabody Energy its Australian coal business for $3.8 billion collapsed. Well, nine months later, the London-based mining behemoth has found a new buyer for the same price. On Monday, the Financial Times reported that Anglo American would sell the Australian coal mining operations to Dhilmar, a little-known and privately held company that was formed out of some Canadian mining assets and incorporated in London in 2024. The value of the deal? $3.88 billion. The agreement, which faces years of arbitration, closes what the newspaper called “a difficult chapter for Anglo” after last year’s sale to Peabody fell apart following an explosion at one of the mines included in the deal.

India isn’t the only country getting its act together on new nuclear plants. On Monday, Sweden’s next-generation reactor champion, the startup Blykalla, submitted the first-ever application to regulators in Stockholm to build the nation’s first commercial advanced nuclear reactor park two hours north of the capital. The 330-megawatt facility would include six lead-cooled units Blykalla called “advanced modular reactors,” or AMRs. “This application is a historic first for Sweden,” Blykalla CEO Jacob Stedman said in a statement. “We’re not just planning an advanced reactor park — we’re building Sweden’s energy future and putting the country at the forefront of the global nuclear power renaissance.”