You’re out of free articles.

Log in

To continue reading, log in to your account.

Create a Free Account

To unlock more free articles, please create a free account.

Sign In or Create an Account.

By continuing, you agree to the Terms of Service and acknowledge our Privacy Policy

Welcome to Heatmap

Thank you for registering with Heatmap. Climate change is one of the greatest challenges of our lives, a force reshaping our economy, our politics, and our culture. We hope to be your trusted, friendly, and insightful guide to that transformation. Please enjoy your free articles. You can check your profile here .

subscribe to get Unlimited access

Offer for a Heatmap News Unlimited Access subscription; please note that your subscription will renew automatically unless you cancel prior to renewal. Cancellation takes effect at the end of your current billing period. We will let you know in advance of any price changes. Taxes may apply. Offer terms are subject to change.

Subscribe to get unlimited Access

Hey, you are out of free articles but you are only a few clicks away from full access. Subscribe below and take advantage of our introductory offer.

subscribe to get Unlimited access

Offer for a Heatmap News Unlimited Access subscription; please note that your subscription will renew automatically unless you cancel prior to renewal. Cancellation takes effect at the end of your current billing period. We will let you know in advance of any price changes. Taxes may apply. Offer terms are subject to change.

Create Your Account

Please Enter Your Password

Forgot your password?

Please enter the email address you use for your account so we can send you a link to reset your password:

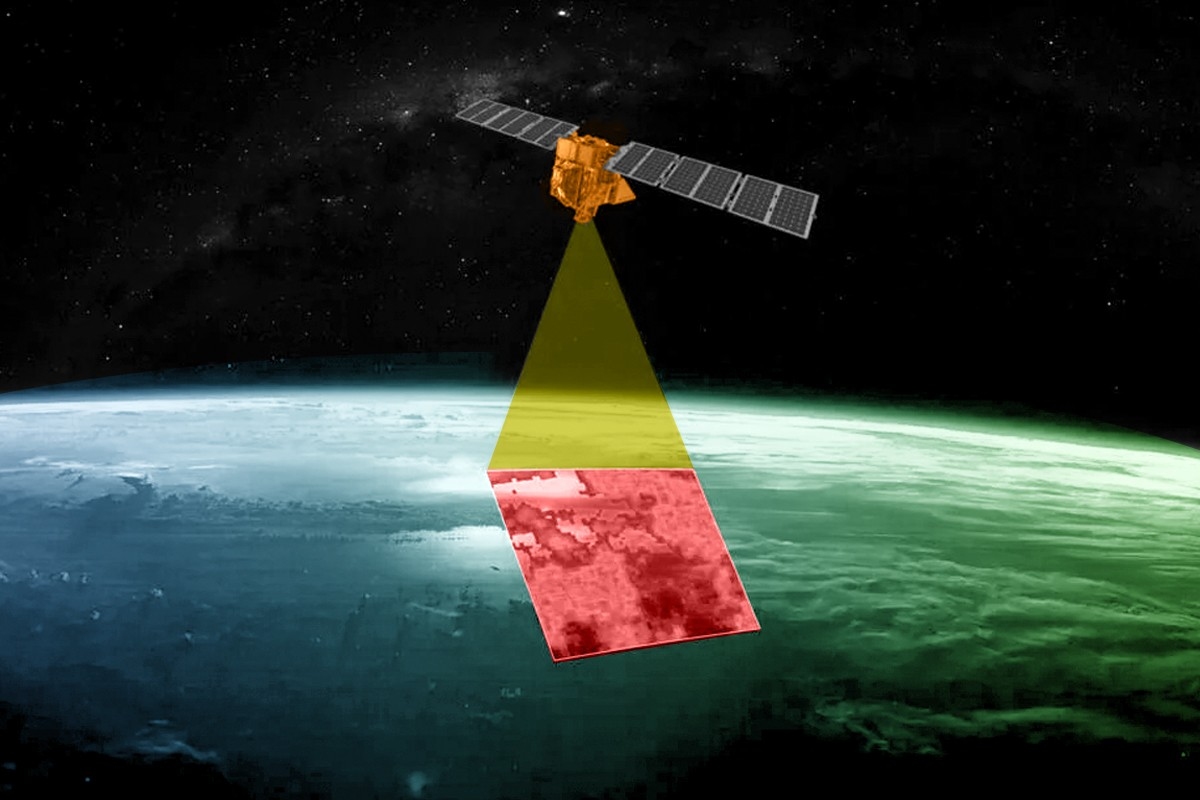

Over a dozen methane satellites are now circling the Earth — and more are on the way.

On Monday afternoon, a satellite the size of a washing machine hitched a ride on a SpaceX rocket and was launched into orbit. MethaneSAT, as the new satellite is called, is the latest to join more than a dozen other instruments currently circling the Earth monitoring emissions of the ultra-powerful greenhouse gas methane. But it won’t be the last. Over the next several months, at least two additional methane-detecting satellites from the U.S. and Japan are scheduled to join the fleet.

There’s a joke among scientists that there are so many methane-detecting satellites in space that they are reducing global warming — not just by providing essential data about emissions, but by blocking radiation from the sun.

So why do we keep launching more?

Despite the small army of probes in orbit, and an increasingly large fleet of methane-detecting planes and drones closer to the ground, our ability to identify where methane is leaking into the atmosphere is still far too limited. As with carbon dioxide, the sources of methane around the world are numerous and diffuse. They can be natural, like wetlands and oceans, or man-made, like decomposing manure on farms, rotting waste in landfills, and leaks from oil and gas operations.

Scientists say MethaneSAT will help answer big open questions about methane: which sources are driving the most emissions and, consequently, how best to tackle climate change. But even then, some say we’ll need to launch even more instruments into space to really get to the bottom of it all.

Measuring methane from space began only in 2009, with the launch of the Greenhouse Gases Observing Satellite, or GOSAT, by the Japan Aerospace Exploration Agency. Previously, most of the world’s methane detectors were on the ground in North America. GOSAT enabled scientists to develop a more geographically diverse understanding of the major sources of methane to the atmosphere.

Soon after, the Environmental Defense Fund, which led the development of MethaneSAT, began campaigning for better data on methane emissions. Through its own on-the-ground measurements, the group discovered that the Environmental Protection Agency’s estimates of leaks from U.S. oil and gas operations were far too low. EDF took this as a call to action. Because methane has such a strong warming effect but also breaks down after about a decade in the atmosphere, curbing methane emissions can slow warming in the near term.

“Some call it the low hanging fruit,” Steven Hamburg, the chief scientist at EDF leading the MethaneSAT project, said during a press conference on Friday. “I like to call it the fruit lying on the ground. We can really reduce those emissions and we can do it rapidly and see the benefits.”

But in order to do that, we need a much better picture than what GOSAT or other satellites like it can provide.

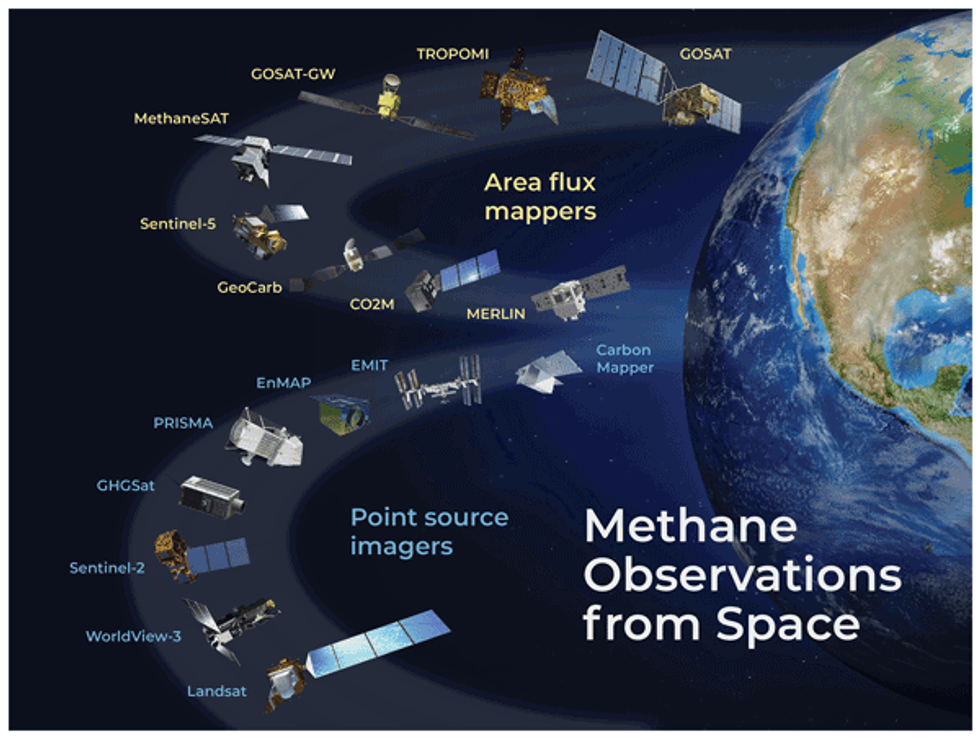

In the years since GOSAT launched, the field of methane monitoring has exploded. Today, there are two broad categories of methane instruments in space. Area flux mappers, like GOSAT, take global snapshots. They can show where methane concentrations are generally higher, and even identify exceptionally large leaks — so-called “ultra-emitters.” But the vast majority of leaks, big and small, are invisible to these instruments. Each pixel in a GOSAT image is 10 kilometers wide. Most of the time, there’s no way to zoom into the picture and see which facilities are responsible.

Point source imagers, on the other hand, take much smaller photos that have much finer resolution, with pixel sizes down to just a few meters wide. That means they provide geographically limited data — they have to be programmed to aim their lenses at very specific targets. But within each image is much more actionable data.

For example, GHGSat, a private company based in Canada, operates a constellation of 12 point-source satellites, each one about the size of a microwave oven. Oil and gas companies and government agencies pay GHGSat to help them identify facilities that are leaking. Jean-Francois Gauthier, the director of business development at GHGSat, told me that each image taken by one of its satellites is 12 kilometers wide, with a resolution of 25 meters per pixel. A snapshot of the Permian Basin, a major oil and gas producing region in Texas and New Mexico, might contain hundreds of oil and gas wells owned by a multitude of companies, but GHGSat can tell them apart and assign responsibility.

“We’ll see five, 10, 15, 20 different sites emitting at the same time and you can differentiate between them,” said Gauthier. “You can see them very distinctly on the map and be able to say, alright, that’s an unlit flare, and you can tell which company it is, too.” Similarly, GHGSat can look at a sprawling petrochemical complex and identify the exact tank or pipe that has sprung a leak.

But between this extremely wide-angle lens and the many finely tuned instruments pointing at specific targets, there’s a gap. “It might seem like there’s a lot of instruments in space, but we don’t have the kind of coverage that we need yet, believe it or not,” Andrew Thorpe, a research technologist at NASA’s Jet Propulsion Laboratory, told me. He has been working with the nonprofit Carbon Mapper on a new constellation of point source imagers, the first of which is supposed to launch later this year.

The coverage gap comes down to the size of the existing images, their resolution, and the amount of time it takes to get them. One of the challenges, Thorpe said, is that it’s very hard to get a continuous picture of any given leak. Oil and gas equipment can spring leaks at random, and those leaks can be continuous or intermittent. If you’re getting a snapshot only every few weeks, you may not be able to tell how long a leak lasted, or you might miss a short but significant plume. Meanwhile, oil and gas fields also change on a weekly basis, Joost de Gouw, an atmospheric chemist at the University of Colorado, Boulder, told me. New wells are being drilled in new places, places those point-source imagers may not be looking at.

“There’s a lot of potential to miss emissions because we’re not looking,” he said. “If you combine that with clouds — clouds can obscure a lot of our observations — there are still going to be a lot of times when we’re not actually seeing the methane emissions.”

De Gouw hopes MethaneSAT will help resolve one of the big debates about methane leaks. Between the millions of sites that release small amounts of methane all the time, and the handful of sites that exhale massive plumes infrequently, which is worse? What fraction of the total do those bigger emitters represent?

Paul Palmer, a professor at the University of Edinburgh who studies the Earth’s atmospheric composition, is hopeful that MethaneSAT will help pull together a more comprehensive picture of what’s driving changes in the atmosphere. Around the turn of the century, methane levels pretty much leveled off, he said. But then, around 2007, they started to grow again, and the growth has since accelerated. Scientists have reached different conclusions about why.

“There’s lots of controversy about what the big drivers are,” Palmer told me. Some think it’s related to oil and gas production increasing. Others — and he’s in this camp — think it’s related to warming wetlands. “Anything that helps us would be great.”

MethaneSAT sits somewhere between the global mappers and point source imagers. It will take larger images than GHGSat, each one 200 kilometers wide, which means it will be able to cover more ground in a single day. Those images will also contain finer detail about leaks than GOSAT, but they won’t necessarily be able to identify exactly which facilities the smaller leaks are coming from. Also, unlike with GHGSat, MethaneSAT’s data will be freely available to the public.
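To put those scales side by side, here is a back-of-the-envelope comparison built only from figures quoted in this article: GOSAT’s 10-kilometer pixels, GHGSat’s 12-kilometer-wide images with 25-meter pixels, and MethaneSAT’s 200-kilometer-wide images. It is a rough sketch that treats pixels as squares and compares image widths only; real instrument footprints, orbits, and revisit times are more complicated.

```python
# Rough scale comparison built only from figures quoted in the article.
# Simplification: pixels are treated as squares and only image widths are compared.

GOSAT_PIXEL_M = 10_000        # GOSAT pixel width: 10 km
GHGSAT_SWATH_M = 12_000       # GHGSat image width: 12 km
GHGSAT_PIXEL_M = 25           # GHGSat pixel width: 25 m
METHANESAT_SWATH_M = 200_000  # MethaneSAT image width: 200 km


def pixels_across(swath_m: float, pixel_m: float) -> float:
    """Number of pixels spanning one image width."""
    return swath_m / pixel_m


def fine_pixels_per_coarse_pixel(coarse_m: float, fine_m: float) -> float:
    """How many square fine pixels fit inside one square coarse pixel."""
    return (coarse_m / fine_m) ** 2


if __name__ == "__main__":
    print(pixels_across(GHGSAT_SWATH_M, GHGSAT_PIXEL_M))                # 480.0
    print(fine_pixels_per_coarse_pixel(GOSAT_PIXEL_M, GHGSAT_PIXEL_M))  # 160000.0
    print(f"{METHANESAT_SWATH_M / GHGSAT_SWATH_M:.1f}")                 # 16.7
```

By this arithmetic, a single GOSAT pixel covers the same ground as roughly 160,000 GHGSat pixels, which is why a global mapper can flag a hot region but not the facility inside it, while each MethaneSAT image is about 17 times wider than a GHGSat image.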

EDF, which raised $88 million for the project and spent nearly a decade working on it, says that one of MethaneSAT’s main strengths will be to provide much more accurate basin-level emissions estimates. That means it will enable researchers to track the emissions of the entire Permian Basin over time, and compare it with other oil and gas fields in the U.S. and abroad. Many countries and companies are making pledges to reduce their emissions, and MethaneSAT will provide data on a relevant scale that can help track progress, Maryann Sargent, a senior project scientist at Harvard University who has been working with EDF on MethaneSAT, told me.

It could also help the Environmental Protection Agency understand whether its new methane regulations are working. It could help with the development of new standards for natural gas being imported into Europe. At the very least, it will help oil and gas buyers differentiate between products associated with higher or lower methane intensities. It will also enable fossil fuel companies who measure their own methane emissions to compare their performance to regional averages.

MethaneSAT won’t be able to look at every source of methane emissions around the world. The project is limited by how much data it can send back to Earth, so it has to be strategic. Sargent said they are limiting data collection to 30 targets per day, and in the near term, those will mostly be oil and gas producing regions. They aim to map emissions from 80% of global oil and gas production in the first year. The outcome could be revolutionary.

“We can look at the entire sector with high precision and track those emissions, quantify them and track them over time. That’s a first for empirical data for any sector, for any greenhouse gas, full stop,” Hamburg told reporters on Friday.

But this still won’t be enough, said Thorpe of NASA. He wants to see the next generation of instruments start to look more closely at natural sources of emissions, like wetlands. “These types of emissions are really, really important and very poorly understood,” he said. “So I think there’s a heck of a lot of potential to work towards the sectors that have been really hard to do with current technologies.”


On Exxon’s Venezuela flip-flop, SpaceX’s fears, and a nuclear deal spree

Current conditions: U.S. government forecasters project just one to three major storms in the Atlantic this hurricane season • The Meade Lake Complex, a wildfire that scorched 92,000 acres in southwest Kansas, is now largely contained • Temperatures in Vientiane, the sprawling capital of Laos, are nearing 100 degrees Fahrenheit amid a week of lightning storms.

A years-long megadrought. Reduced snowpack in the northern mountains. Rising water demand from southwestern farms and cities whose groundwater is depleting. It is no wonder the water levels in Lake Mead are getting low. Now the Trump administration is giving the Hoover Dam money for a makeover to help it make do in the increasingly parched new normal. The Great Depression-era megaproject in the Colorado River’s Black Canyon boasts the largest reservoir capacity among U.S. hydroelectric dams. But the facility’s actual output of electricity, already outpaced by six other dams in the U.S., is set to plunge to a new low if drought-parched Lake Mead’s elevation drops below 1,035 feet, the level at which bubbles start to form and damage the turbines. At that point, the dam’s output could drop from its lowest standard generating capacity of 1,302 megawatts to a meager 382 megawatts. Last night, federal data showed the water level perilously close to that boundary, at 1,052 feet. The Bureau of Reclamation’s $52 million injection will pay to replace as many as three older turbines with new, so-called wide-head turbines, which are designed to operate efficiently at levels below 1,035 feet. Once the new turbines are installed, the agency expects to restore at least 160 megawatts of hydropower capacity. “This action ensures Hoover Dam remains a cornerstone of American energy production for decades to come,” Andrea Travnicek, the Interior Department’s assistant secretary for water and science, said in a statement.

Like geothermal, hydropower is a form of renewable energy that President Donald Trump appreciates, given its 24/7 output. Last month, the Department of Energy’s recently reorganized Hydropower and Hydrokinetic Office announced that it would allow nearly $430 million in payments to American hydropower facilities to move forward after stalling the funding for 293 projects at 212 facilities. Last year, the Federal Energy Regulatory Commission proposed streamlining the process for relicensing existing dams and giving the facilities a categorical exclusion from the National Environmental Policy Act. The Energy Department also withdrew from a Biden-era agreement to breach dams in the Pacific Northwest, a deal meant to restore the movement of salmon through the Columbia River.

Shortly after the U.S. capture of Venezuelan leader Nicolás Maduro in January, Exxon Mobil CEO Darren Woods told CNBC the South American nation would need to embark on a serious transition to democracy before the largest U.S. energy company could invest in production in a country the firm exited two decades ago amid the socialist government’s crackdown. Five months later, he may be changing his tune. On Thursday, The New York Times reported that Exxon Mobil was in talks to acquire rights to start drilling for oil in Venezuela. If finalized, such a deal would mark what the newspaper called “a victory for President Trump, who has declared the country’s vast natural wealth open to American businesses.”

Elon Musk’s xAI data centers aren’t the only part of his empire bracing for the data center backlash that Heatmap’s Jael Holzman clocked last fall as the thing “swallowing American politics.” In its S-1 filing to the Securities and Exchange Commission ahead of one of the country’s most anticipated stock market debuts this year, SpaceX warned that mounting public skepticism over AI could harm the growth of America’s leading private space firm. “If AI technologies are perceived to be significantly disruptive to society, it could lead to governmental or regulatory restrictions or prohibitions on their use, societal concerns or unrest, or both, any of which could materially and adversely affect our ability to develop, deploy, or commercialize AI technologies and execute our business strategy,” the company disclosed in the filing, a detail highlighted in a post on X by Transformer editor Shakeel Hashim. “Our implementation of AI technologies, including through our AI segment’s systems, could result in legal liability, regulatory action, operational disruption, brand, reputational or competitive harm, or other adverse impacts.”


Yesterday, I told you that corporate energy buyers last year inked deals for more nuclear power than wind energy. But if you needed more proof that, as Heatmap’s Katie Brigham put it last summer, “the nuclear dealmaking boom is real,” just look at this week:

Separately, this week saw two projects take big steps forward:

It’s been the year of Chinese cars. Ford’s chief executive admits he can’t get enough of his Xiaomi SU7. Chinese auto exports are booming. And now Beijing’s ultimate automotive champion, BYD, is accelerating talks to enter Formula 1. On Thursday, the Financial Times reported that the company had met with former Red Bull Racing chief Christian Horner in Cannes. “Following talks between Stella Li, executive vice-president at BYD, and Horner last week, BYD intends to hold further meetings with senior figures involved in F1 and at the FIA, the governing body,” the newspaper reported.

China’s hydrogen boom continues. The country’s electrolyzers are quickly going the way of batteries and solar panels by securing global export deals that reflect their efficiency and competitive prices. On Thursday, Hydrogen Insight reported that Chinese manufacturer Sungrow Hydrogen inked a deal to supply a 2-megawatt alkaline electrolyzer to a Spanish cement facility. That same day, another Chinese manufacturer, Hygreen Energy, announced an agreement to supply a 1.3-megawatt system to a green hydrogen project in Nova Scotia.

With both temperatures and electricity prices rising, many who are using less energy are still paying more, according to data from the Electricity Price Hub.

In 135 years of record-keeping, Tampa, Florida, has never been hotter than it was last July.

Though often humid, the city on the bay is typically breezy, even in summer. But on July 27, it broke 100 degrees Fahrenheit on the thermometer for the first time ever; two days later, it hit its highest-ever heat index, 119 degrees. The family of Hezekiah Walters, the 14-year-old who died of heat stroke during football practice in Tampa in 2019, urged neighbors at a local CPR certification event to take the heat warnings seriously. Local HVAC companies complained about the volume of calls. Area hospitals struggled to keep their rooms and clinics comfortable. Experts later said the record temperatures were made five times more likely by climate change.

But according to data from Heatmap and MIT’s Electricity Price Hub, Tampa Electric customers used 14% less electricity in July 2025 than they did in the same month of 2020, which was Tampa’s previous hottest July on record — about 216 kilowatt-hours per household less, roughly the equivalent of running a central AC a couple hours fewer per day for an entire month. Tellingly, Tampa Electric raised rates over that period by 84%, with the average bill growing from $111 to $190 per month.
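The AC equivalence in the paragraph above can be checked with simple arithmetic. This sketch uses the article’s figures (a 216-kilowatt-hour, 14% drop in July usage and an average bill rising from $111 to $190) plus one assumption that is not in the source: that a central AC unit draws about 3.5 kilowatts while running, a typical but by no means universal figure. The roughly 71% bill increase differs from the 84% rate increase because a bill also reflects how much electricity a household used.

```python
# Rough check of the Tampa Electric figures quoted in the article.
# ASSUMPTION (not from the source): a central AC unit draws about 3.5 kW while running.

JULY_DAYS = 31
SAVED_KWH = 216        # per-household usage drop, July 2020 -> July 2025
DROP_FRACTION = 0.14   # the same drop as a share of July 2020 usage
AC_DRAW_KW = 3.5       # hypothetical central-AC power draw


def implied_baseline_kwh(saved_kwh: float, drop_fraction: float) -> float:
    """July 2020 usage implied by an absolute drop and a percentage drop."""
    return saved_kwh / drop_fraction


def ac_hours_per_day(saved_kwh: float, days: int, ac_kw: float) -> float:
    """Daily AC run time that would use the same energy as the monthly drop."""
    return saved_kwh / days / ac_kw


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


if __name__ == "__main__":
    print(f"{implied_baseline_kwh(SAVED_KWH, DROP_FRACTION):.0f}")          # 1543 (kWh)
    print(f"{ac_hours_per_day(SAVED_KWH, JULY_DAYS, AC_DRAW_KW):.1f}")      # 2.0 (hours)
    print(f"{pct_change(111, 190):.0f}")                                    # 71 (percent)
```

Under that AC assumption, the 216 kilowatt-hours work out to about two hours of cooling per day, consistent with the “couple hours fewer per day” described in the text.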

Though usage has dropped as rates rose in many places around the country, that correlation doesn’t necessarily mean people were rationing their electricity. Climate-related factors like anomalously cool summers can lower summer bills, while energy efficiency upgrades can also reduce residential consumption. Southern California Edison customers, for example, used 24% less electricity in 2025 than they did in 2020, at least in part due to the widespread adoption of rooftop solar.

Thanks to recent efforts by the Energy Information Administration to track energy insecurity and utility disconnections, however, we can start to tease out deficiency from efficiency. By cross-referencing that data with rate and usage statistics from the Electricity Price Hub, we find a handful of places, like Tampa, where people have seemingly reduced their electricity usage because they couldn’t afford the added cost, even during a deadly heatwave. (Tampa Electric did not return our request for comment.)

The EIA’s tracking program, known as the Residential Energy Consumption Survey, tells a clear story: Across the country, people are struggling to absorb the rising costs of electricity. In 2020, nearly one in four Americans reported some form of energy insecurity, meaning they were either unable to afford to use heating or cooling equipment, pay their energy bills, or pay for other necessities due to energy costs. By 2024, the most recent data available, that number had risen to a third — and two-thirds of households with incomes under $10,000. In 2024 alone, utilities sent 94.9 million final shutoff notices to residential electricity customers.

Since 2020, 98% of the more than 400 utilities in the Heatmap-MIT dataset have raised their rates — more than half of them by greater than 20%; about one in 10 utilities have raised their rates by 50% or more. And 219 of those utilities raised rates even as usage in their service area fell, meaning that as customers used less, they still paid more.
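The “raised rates even as usage fell” tally boils down to comparing two numbers per utility. Here is a minimal sketch of that kind of classification, assuming each utility has an average residential rate and average household usage for 2020 and 2025. The record fields, function names, and the three sample utilities are hypothetical illustrations, and the article does not describe the Heatmap-MIT method beyond these comparisons.

```python
from dataclasses import dataclass


@dataclass
class UtilityRecord:
    """Hypothetical per-utility summary; field names are illustrative only."""
    name: str
    rate_2020: float   # average residential rate, cents per kWh
    rate_2025: float
    usage_2020: float  # average monthly household usage, kWh
    usage_2025: float


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


def paid_more_used_less(u: UtilityRecord) -> bool:
    """True when rates rose while usage in the service area fell."""
    return u.rate_2025 > u.rate_2020 and u.usage_2025 < u.usage_2020


def summarize(records: list[UtilityRecord]) -> dict[str, int]:
    """Count the categories the article reports for its dataset."""
    return {
        "total": len(records),
        "raised_rates": sum(u.rate_2025 > u.rate_2020 for u in records),
        "raised_while_usage_fell": sum(paid_more_used_less(u) for u in records),
        "raised_50_pct_or_more": sum(
            pct_change(u.rate_2020, u.rate_2025) >= 50 for u in records
        ),
    }


# Made-up sample data, NOT figures from the Heatmap-MIT dataset.
SAMPLE = [
    UtilityRecord("Utility A", 12.0, 22.0, 1000, 900),  # rates up, usage down
    UtilityRecord("Utility B", 10.0, 12.0, 800, 850),   # rates up, usage up
    UtilityRecord("Utility C", 15.0, 14.0, 700, 650),   # rates down
]

if __name__ == "__main__":
    print(summarize(SAMPLE))
```

Treating any decline as “falling usage” is the simplest rule; a more careful analysis might require a minimum change before flagging a utility, to filter out weather-driven swings.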

“I don’t feel like [the rates have] ever been all that affordable, but they have steadily increased more and more and more,” Janelle Ghiorso, a PG&E customer in California who recently filed for bankruptcy due to the debt she incurred from her electricity bills, told me. She added: “When do I get relief? When I’m dead?”

The people hit hardest by rate increases tend to be those already struggling the most. For example, about 30% of Kentucky residents reported going without heat or AC, leaving their homes at unsafe temperatures, or cutting back on food or medicine to pay energy bills, per the EIA’s 2020 RECS report. Since then, Kentucky Power has raised rates in the eastern part of the state by 45%, adding about $64 to the average monthly bill in a service area where the median monthly household income can be less than $4,000.

The Department of Energy’s Low-Income Energy Affordability Data, which measures energy affordability patterns, actually obscures some of this burden. It reports that for all of Kentucky, annual electricity costs account for about 2% of the state’s median household income, which is about average for the nation. But in Kentucky Power’s Appalachian service area specifically, many households live under 200% of the poverty level, and $15 of every $100 someone earns might go toward their energy costs, Chris Woolery, the residential energy coordinator at Mountain Association, a nonprofit economic development group that serves the region, told me. “The situation is just dire for many folks,” he said.
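Energy burden, the share of income spent on energy, is a simple ratio. A minimal sketch using two figures quoted in this section: Woolery’s estimate of $15 of every $100 earned, and Kentucky Power’s roughly $64 monthly increase measured against a $4,000 monthly income (the article says median income in parts of the service area can be less than that, so the real share can be higher). The function name `energy_burden` is our own illustration, not a DOE tool or API.

```python
def energy_burden(energy_cost: float, income: float) -> float:
    """Percentage of income spent on energy over the same period.

    Hypothetical helper for illustration; both arguments must cover the
    same period (e.g. monthly cost and monthly income).
    """
    if income <= 0:
        raise ValueError("income must be positive")
    return 100 * energy_cost / income


if __name__ == "__main__":
    # Woolery's Appalachian Kentucky estimate: $15 of every $100 earned.
    print(energy_burden(15, 100))      # 15.0
    # Kentucky Power's ~$64 monthly increase alone, against a $4,000 monthly income.
    print(energy_burden(64, 4000))     # 1.6
    # How many times larger than the ~2% statewide electricity figure.
    print(energy_burden(15, 100) / 2)  # 7.5
```

By this measure, the rate increase alone claims at least 1.6% of a $4,000 monthly income, close to the entire 2% statewide figure, which helps explain why a statewide average can hide the regional burden.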

Kentucky Power is aware of this; its low-income assistance charge has grown by 110% since 2020, the Heatmap-MIT data shows. Woolery also noted that the utility agreed to voluntary protections against disconnections, such as a 24-hour moratorium during extreme weather, in a rate case settlement with the Kentucky Public Service Commission. The commission rejected the proposal, but the utility kept the protections anyway, Woolery told me.

Customers in other areas are not so lucky.

In states like Oklahoma, where one in three households reported energy insecurity in 2020, rates rose about 30% from 2020 to 2025, according to our data. Per the EIA survey, Oklahoma’s monthly disconnection rate is more than three times the national average. Oklahoma doesn’t have the highest electricity rates in the country — far from it. But median incomes there are low enough that even moderate rate increases leave some with hard choices.

Interestingly, in bottom-income-quartile states, where median household incomes are below $81,337, only about 30% of utilities show a pattern of rising bills and falling electricity usage, which would suggest energy rationing. The other 70% of utilities show the opposite effect: usage is rising even as electricity costs take a bigger share of customers’ incomes. In Kentucky Power’s service area, for example, bills may be up $64 a month, but usage remained essentially flat.

“Think of it this way: The electric company goes to the front of the line,” Mark Wolfe, the executive director of the National Energy Assistance Directors Association, a policy group for administrators of the Low-Income Home Energy Assistance Program, told me of how households triage their bills. If you need to buy something from the grocery store, the drug store, or pay your electricity bill, then “the utility goes to the front of the line because they can shut off your power, which causes lots of other problems.”

Wolfe added, “Plus, if you’re really in dire straits, you can go to the food bank. You can’t go to the ‘other’ utility company.”

Even as resource-strapped households put a higher share of their income toward electricity, they’re also least able to afford energy efficiency upgrades like newer appliances, smart thermostats, or solar panels. The pattern is prevalent in places with extreme climates, such as Louisiana, Mississippi, and Alabama, where turning off the AC in the middle of summer could mean death. It shows up most starkly among the most extreme rate examples in our dataset, like the utilities serving remote Alaska villages: despite astronomical electricity prices, usage there hasn’t fluctuated much, because customers are already using as little electricity as they can. The elderly and other individuals living on fixed incomes are also often unable to cut their electricity usage beyond what little they’re already using.

In middle-income states like Florida, roughly 60% of the utilities in our dataset show rising bills and falling electricity use, about twice the rate we see in the lowest-income states. While the poorest Americans have already reduced their electricity use to the bare minimum and are cutting groceries and medicine in order to keep the heat and AC on, in places like Tampa, where the median income is $96,480, the electricity rate shocks have caused middle- and even high-earning households to start worrying about their bills. According to a new survey released Tuesday by Ipsos and the energy policy nonprofit PowerLines, 74% of respondents with household incomes over $100,000 said they are worried about their utility bills increasing.

“People are seeing their utility bill as one of the few things that changes so much month to month, that is so unpredictable, and that they don’t have any control over,” Charles Hua, the founder and executive director of PowerLines, told me.

Wolfe, the executive director at NEADA, agreed, saying that for the first time, the association has begun hearing from families with incomes above the threshold who need assistance. “An extra $100 a month for a family, but they’re middle class — that shouldn’t push them over the edge,” at least in theory, Wolfe said. But for those with no flexibility in their budgets, anything additional or unpredictable “pushes them close to the edge — from going from middle class to lower middle class — and I think that’s why this affordability crisis is becoming such an issue.”

We can also see this phenomenon in the explosion of line items on utility bills that fund assistance programs. Appalachian Power Co.’s low-income surcharge, for instance, is up 3,200% for customers in Virginia; Puget Sound Energy’s low-income program charge is up 970% for customers in Washington; and PacifiCorp’s low-income cost-recovery charge is up 879% for customers in Oregon.

The EIA data, too, bears this out: Florida had one of the highest rates of people reporting they were “unable to use air conditioning equipment” due to costs in the RECS data, and in 2024, there were 186,202 disconnections in the state in July alone — every one of which would have meant people no longer had the power to run their ACs. (FPL and Duke Energy Florida also show usage declines as rates rose, although neither raised rates as much as Tampa.)

The data also shows places where higher-income earners have aggressively pursued efficiency upgrades to lower their usage. In the LA Department of Water and Power service area in California, usage is down more than 11% overall between 2020 and 2025, one of the biggest drops in our dataset. But the lower usage is more evenly distributed month to month, indicating that things like solar adoption and efficiency programs are likely behind the drop, rather than cost pressures. (Rates there still rose more than 28%, or about $15 per month.)

Even doing everything right wasn’t enough to save customers in the end — households that cut their electricity use still saw their bills rise by an average of $20 a month, our data shows.

Perhaps most concerning, though, is the relentless upward trajectory. PowerLines reports that utilities submitted $9.4 billion in new rate increase requests in the first quarter of 2026 alone. Heatmap and MIT’s numbers show that 79% of utilities raised rates in 2025, and 55% have raised them again already this year.

But the advocates I talked to stressed that utilities have more agency than they get credit for. Take Kentucky Power, for example, with its voluntary disconnection protections. “It just shows that you don’t necessarily have to make disconnections to be financially solvent,” Woolery of the Mountain Association pointed out. Or take Ouachita Electric in Arkansas, which passed a 4.5% rate decrease after investing in efficiency upgrades in consumers’ homes through a pay-as-you-save model.

But that’s the rare exception. For most customers, relief is not obviously on the way. Signs increasingly point to the imminent onset of a super El Niño, which could bring punishing, climate-change-intensified heat waves across the United States. The July 2025 record in Tampa will almost certainly not stand; someday, it will be the second-hottest July, or the third. In a few decades, it might even look cool.

And still there will be bills to pay.

Rob talks with UCLA law professor Ann Carlson about her fascinating new book, Smog and Sunshine.

We live in a time of unheralded environmental victories. Dolphins and whales swim in New York and San Francisco harbors. Lead has been eliminated globally in gasoline for cars and trucks. And Southern California has cleaned up its air.

That last one is more important than you might think. On today’s episode of Shift Key, Rob is joined by Ann Carlson, a professor of environmental law at UCLA and the former acting head of the National Highway Traffic Safety Administration. She's also the author of a new book, Smog and Sunshine: The Surprising Story of How Los Angeles Cleaned Up Its Air, which was released last month by the University of California Press.

Ann and Rob discuss why cleaning up LA’s air was so important to cleaning up the world’s air. They chat about why LA initially misdiagnosed the causes of its terrible air pollution, how it got them right, and what we can learn from the city’s eventual inspiring success.

Shift Key is hosted by Robinson Meyer, the founding executive editor of Heatmap News.

Subscribe to “Shift Key” and find this episode on Apple Podcasts, Spotify, Amazon, or wherever you get your podcasts.

You can also add the show’s RSS feed to your podcast app to follow us directly.

Here is an excerpt from their conversation:

Ann Carlson: We should talk more about the Clean Air Act itself because it’s a pretty extraordinary piece of legislation — hard to imagine something like that passing today.

Robinson Meyer: As you are a professor of environmental law, I can’t think of a better topic to talk about. So one, there’s a few nuances that are important. The first is that California is early to air pollution law, so it’s beginning to explore how to regulate cars by the time that the Clean Air Act passes. But the second is this distinction that you’ve begun to draw in this conversation between technology following versus technology forcing regulation, where California had adopted technology following regulation, and that made it kind of captive to the car companies.

Can you talk a little bit about why the Clean Air Act is different and why it was different? And did people understand maybe how different it was when they were writing it?

Carlson: I think they did understand how different it was. And what they did was, instead of focusing on whether technology was available or what was possible to demand of auto companies based on that technology, they focused on public health. And the basic overarching idea in the Clean Air Act is, we are going to set standards that protect public health. We’re not going to worry about cost. We’re not going to worry about technological availability. We’re going to tell manufacturers, for example, you cut pollutants by 90% by 1975 and 1976, depending on the pollutant. We understand there’s no technology. Go out and invent it. That’s the technology-forcing part of the statute.

Of course, the auto manufacturers say they can’t do it. Lee Iacocca famously says that Ford will stop manufacturing vehicles if the Clean Air Act passes. Ford continues to manufacture vehicles to this day. He, of course, was engaged in hyperbole, but that gives you some sense for just how intense the opposition was and how kind of panicked the manufacturers were. But that technology-forcing statute, again, combined with California’s authority to regulate, set off this arms race to really figure out how do we cut pollutants dramatically.

You can find a full transcript of the episode here.

Mentioned:

Ann Carlson’s new book: Smog and Sunshine: The Surprising Story of How Los Angeles Cleaned Up Its Air

This episode of Shift Key is sponsored by ...

Heatmap Pro brings all of our research, reporting, and insights down to the local level. The software platform tracks all local opposition to clean energy and data centers, forecasts community sentiment, and guides data-driven engagement campaigns. Book a demo today to see the premier intelligence platform for project permitting and community engagement.

Music for Shift Key is by Adam Kromelow.