With the ongoing disaster approaching its second week, here’s where things stand.

A week ago, forecasters in Southern California warned residents of Los Angeles that conditions would be dry, windy, and conducive to wildfires. How bad things have gotten, though, has taken everyone by surprise. As of Monday morning, almost 40,000 acres of Los Angeles County have burned in six separate fires, the biggest of which, Palisades and Eaton, have yet to be fully contained. The latest red flag warning, indicating fire weather, won’t expire until Wednesday.

Many have questions about how the second-biggest city in the country is facing such unbelievable devastation (some of these questions, perhaps, being more politically motivated than others). Below, we’ve tried to collect as many answers as possible — including a bit of good news about what lies ahead.

A second Santa Ana wind event is due to set in Monday afternoon. “We’re expecting moderate Santa Ana winds over the next few days, generally in the 20 to 30 [mile per hour] range, gusting to 50, across the mountains and through the canyons,” Eric Drewitz, a meteorologist with the Forest Service, told me on Sunday. Drewitz noted that the winds will be less severe than last week’s, when the fires flared up, but he also anticipates they’ll be “more easterly,” which could blow the fires into new areas. A new red flag warning has been issued through Wednesday, signaling increased fire potential due to low humidity and high winds for several days yet.

If firefighters can prevent new flare-ups and hold back the fires through that wind event, they might be in good shape. By Friday of this week, “it looks like we could have some moderate onshore flow,” Drewitz said, referring to wet ocean air blowing inland, which would help “build back the marine layer” and increase the relative humidity in the region, decreasing the chances of more fires. Information about the Santa Anas at that time is still uncertain (the models have been changing, and wind strength is tricky to predict so far out), but an increase in humidity will at least offer some relief for the battered Ventura and Orange Counties.

The Palisades Fire, the biggest in L.A., ripped through the hilly and affluent area between Santa Monica and Malibu, including the Pacific Palisades neighborhood, the second-most expensive zip code in Los Angeles and home to many celebrities. Structures in Big Rock, a neighborhood in Malibu, have also burned. The fire has also encroached on the I-405 and the Getty Villa, and destroyed at least two homes in Mandeville Canyon, a neighborhood of multimillion-dollar homes. Students at nearby University of California, Los Angeles, were told on Friday to prepare for a possible evacuation.

The Eaton Fire, the second biggest blaze in the area, has killed 16 people in Altadena, a neighborhood near Pasadena, according to the Los Angeles Times, making it one of the deadliest fires in the modern history of California.

The 1,000-acre Kenneth Fire is 100% contained but still burning near Calabasas and the gated community of Hidden Hills. The Hurst Fire has burned nearly 800 acres, is 89% contained, and is still burning near Sylmar, the northernmost neighborhood in L.A. Though there are no evacuation notices for either the Kenneth or the Hurst fires, residents in the L.A. area should monitor current conditions, as the situation remains fluid.

The 43-acre Sunset Fire, which triggered evacuations last week in Hollywood and Hollywood Hills, burned no homes and is 100% contained.

The Lidia Fire, which ignited in a remote area south of Acton, California, on Wednesday afternoon, burned 350 acres of brush and is 100% contained.

It can take years to determine the cause of a fire, and investigations typically don’t begin until after the fire is under control and the area is safe to reenter, Edward Nordskog, a retired fire investigator from the Los Angeles Sheriff’s Department, told Heatmap’s Emily Pontecorvo. He also noted, however, that the cause of an urban fire is typically easier to pinpoint than that of a wildland fire due to the availability of witnesses and surveillance footage.

The vast majority of wildfires, 85%, are caused by humans. So far, investigators have ruled out lightning — another common fire-starter — because there were no electrical storms in the area when the fires started. In the case of the Palisades Fire, there were no power lines in the area of the ignition, though investigators are now looking into an electrical transmission tower in Eaton Canyon as the possible cause of the deadly fire in Altadena. There have been rumors that arsonists started the fires, but investigators say that scenario is also pretty unlikely due to the spread of the fires and how remote the ignition areas are.

Officially, 24 people have died, but that tally is likely to rise. California Governor Gavin Newsom said Sunday that he expects “a lot more” deaths will be added to the total in the coming days as search efforts continue.

Incoming President Donald Trump slammed the response to the L.A. fires in a Truth Social post on Sunday morning: “This is one of the worst catastrophes in the history of our Country,” he wrote. “They just can’t put out the fires. What’s wrong with them?”

Though there is much blame going around — not all of it founded in reality — the challenges facing firefighters are immense. Last week, because of strong Santa Ana winds, fire crews could not drop suppressants like water or chemical retardant on the initial blazes. (In strong winds, water and retardant will blow away before they reach the flames on the ground.)

Fighting a fire in an urban or suburban area is also different from fighting one in a remote, wild area. In a true wildfire, crews don’t use much water; firefighters typically contain the blazes by creating breaks — areas cleared of vegetation that starve a fire of fuel and keep it from spreading. In an urban or suburban event, however, firefighters can’t simply hack through a neighborhood, and typically have to use water to fight structure fires. Their priority also shifts from stopping the fire to evacuating and saving people, which means putting out the fire itself has to wait.

What’s more, the L.A. area faced dangerous fire weather going into last week — with wind gusts up to 100 miles per hour and dry air — and the persistence of the Santa Ana winds during firefighting operations through the weekend made it extremely difficult for emergency managers to gain a foothold.

Trump and others have criticized Los Angeles for being unprepared for the fires, given reports that some fire hydrants ran dry or had low pressure during operations in Pacific Palisades. According to the Los Angeles Department of Water and Power, about 20% of hydrants were affected, mostly at higher elevations.

The problem isn’t a lack of preparation, however. It’s that the L.A. wildfires are so large and widespread, the county’s preparations were quickly overwhelmed. “We’re fighting a wildfire with urban water systems, and that is really challenging,” Los Angeles Department of Water and Power CEO Janisse Quiñones said in a news conference last week. When houses burn down, water mains can break open. Civilians also put a strain on the system when they use hoses or sprinkler systems to try to protect their homes.

On Sunday, Judy Chu, the Democratic lawmaker representing Altadena, confirmed that fire officials had told her there was enough water to continue the battle in the days ahead. “I believe that we're in a good place right now,” she told reporters. Newsom, meanwhile, has responded to criticism over the water failure by ordering an investigation into the weak or dry hydrants.

So-called “super soaker” planes have had no problem with water access; they’re scooping directly from the ocean.

Yes. Although aerial support was grounded in the early stages of the wildfires due to severe Santa Ana winds, flights resumed during lulls in the storms last week.

There is a misconception, though, that water and retardant drops “put out” fires; they don’t. Instead, aerial support suppresses a fire so crews can get in close and use traditional methods, like cutting a fire break or spraying water. “All that up in the air, all that’s doing is allowing the firefighters [on the ground] a chance to get in,” Bobbie Scopa, a veteran firefighter and author of the memoir Both Sides of the Fire Line, told me last week.

With winds expected to pick up early this week, aerial firefighting operations may be grounded again. “If you have erratic, unpredictable winds to where you’ve got a gust spread of like 20 to 30 knots,” i.e. 23 to 35 miles per hour, “that becomes dangerous,” Dan Reese, a veteran firefighter and the founder and president of the International Wildfire Consulting Group, told me on Friday.
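As a quick check on the conversion quoted above: a knot is one nautical mile (1,852 meters) per hour, and a statute mile is 1,609.344 meters. The short Python sketch below is illustrative only, and the function name is hypothetical.

```python
# Conversion factor derived from the unit definitions:
# 1 nautical mile = 1852 m, 1 statute mile = 1609.344 m.
KNOT_TO_MPH = 1852 / 1609.344


def knots_to_mph(knots: float) -> float:
    """Convert a wind speed in knots to miles per hour."""
    return knots * KNOT_TO_MPH


# The 20-to-30-knot gust spread mentioned in the quote.
low, high = knots_to_mph(20), knots_to_mph(30)
print(round(low), round(high))  # 23 35
```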

Because of the direction of the Santa Ana winds, wildfire smoke should mostly blow out to sea. But as winds shift, unhealthy air can blow into populated areas, affecting the health of residents.

Wildfire smoke is unhealthy, period, but urban and suburban smoke like that from the L.A. fires can be particularly detrimental. It’s not just trees and brush immolating in an urban fire, it’s also cars, and batteries, and gas tanks, and plastics, and insulation, and other nasty, chemical-filled things catching fire and sending fumes into the air. PM2.5, the inhalable particulates from wildfire smoke, contributes to thousands of excess deaths annually in the U.S.

You can read Heatmap’s guide to staying safe during extreme smoke events here.

“The bad news is, I’m not seeing any rain chances,” Drewitz, the Forest Service meteorologist, told me on Sunday. Though the marine layer will bring wetter air to the Los Angeles area on Friday, his models showed it’ll be unlikely to form precipitation.

Though some forecasters have signaled potential rain at the end of next week, the general consensus is that the odds for that are low, and that any rain there may be will be too light or short-lived to contribute meaningfully to extinguishing the fires.

The chaparral shrublands around Los Angeles are supposed to burn every 30 to 130 years. “There are high concentrations of terpenes — very flammable oils — in that vegetation; it’s made to burn,” Scopa, the veteran firefighter, told me.

What isn’t normal, though, is the amount of rain Los Angeles got ahead of this past spring (52.46 inches in the preceding two years, the wettest period in the city’s history since the late 1800s), which was followed by a blisteringly hot summer and a delayed start to this year’s rainy season. Since October, parts of Southern California have received just 10% of their normal rainfall.

This “weather whiplash” is caused by a warmer atmosphere, which means that plants will grow explosively due to the influx of rain and then dry out when the drought returns, leaving lots of dry fuels ready and waiting for a spark. “This is really, I would argue, a signature of climate change that is going to be experienced almost everywhere people actually live on Earth,” Daniel Swain, a climate scientist at the University of California, Los Angeles, who authored a new study on the pattern, told The Washington Post.

We know less about how climate change may affect the Santa Anas, though experts have some theories.

At least 12,000 structures have burned so far in the fires, which is already exacerbating the strain on the Los Angeles housing market — one of the country’s tightest even before the fires — as thousands of displaced people look for new places to live. “Dozens and dozens of people are going after the same properties,” one real estate agent told the Los Angeles Times. The city has reminded businesses that price gouging — including raising rental prices more than 10% — during an emergency is against the law.

Los Angeles had a shortage of about 370,000 homes before the fires, and between 2021 and 2023, the county added fewer than 30,000 new units per year. Recovery grants and federal aid can lag, and it often takes more than two years for even the first Housing and Urban Development Disaster Recovery Grants’ expenditures to go out.

My colleague Matthew Zeitlin wrote for Heatmap that the economic impact of the Los Angeles fire is already much higher than that of other fires, such as the 2018 Camp fire, partly because of the value of the Pacific Palisades real estate.

The wildfires may “deal a devastating blow to [California’s] fragile home insurance market,” Heatmap’s Matthew Zeitlin wrote last week. In recent years, home insurers have left California or declined to write new policies, at least partially due to the increased risk of wildfires in the state.

Depending on the extent of the damage from the fires, the coffers of California’s FAIR Plan — which insures homeowners who can’t get insurance otherwise, including many in Pacific Palisades and Altadena — could empty, causing it to seek money from insurers, according to the state’s regulations. As Zeitlin writes, “This would mean that Californians who were able to buy private insurance — because they don’t live in a region of the state that insurers have abandoned — could be on the hook for massive wildfire losses.”

First and foremost, sign up for all relevant emergency alerts. Make sure to turn on the sound on your phone and keep it near you in case of a change in conditions. Pack a “go bag” with essentials and consider filling your gas tank now so that you can evacuate at a moment’s notice if needed. Read our guide on what to do if you get a pre-evacuation or an evacuation notice ahead of time so that you’re not scrambling for information if you get an alert.

The free Watch Duty app has become a go-to resource for people affected by the fires, including friends and family of Angelenos who may themselves be thousands of miles away. The app provides information on fire perimeters, evacuation notices, and power outages. Its employees pull information directly from emergency responders’ radio broadcasts and sometimes beat official sources to disseminating it. If you need an endorsement: Emergency responders rely on the app, too.

There are many scams in the wake of disasters as crooks look to take advantage of desperate people — and those who want to help them. To play it safe, you can use a hub like the one established by GoFundMe, which is actively vetting campaigns related to the L.A. fires. If you’re looking to volunteer your time, make a donation of clothing or food, or if you’re able to foster animals the fire has displaced, you can use this handy database from the Mutual Aid Network L.A. There are also many national organizations, such as the Red Cross, that you can connect with if you want to help.

The City of Los Angeles and the Los Angeles Fire Department have asked that do-gooders not bring donations directly to fire stations or shelters; such actions can interfere with emergency operations. Their website provides more information about how you can help productively.

The companies are offering Texas ratepayers a three-year fixed-price contract that comes with participation in a virtual power plant.

Customers get a whole lot of choice in Texas’ deregulated electricity market — which provider to go with, fixed-rate or variable-rate plan, and contract length are all variables to consider. If a customer wants a home battery as well, that’s yet another exercise in complexity, involving coordination with the utility, installers, and contractors.

On Wednesday, residential battery manufacturer and virtual power plant provider Lunar Energy and U.K.-based retail electricity provider Octopus Energy announced a partnership to simplify all this. They plan to offer Texas electricity ratepayers a single package: a three-year fixed-rate contract, a 30-kilowatt-hour battery, and automatic participation in a statewide network of distributed energy resources, better known as a virtual power plant, or VPP.

This structure is novel for the way it packages the electricity rate, the physical battery, and the VPP into one single offering. It’s also the first time that Octopus, the U.K.’s largest retail electricity provider, is deploying standalone home batteries for customers in the U.S. without rooftop solar.

“As the price of batteries has come down and the data center dynamics really take shape, I think we’ve gained conviction that Texas — and frankly the rest of the U.S. — is ripe for deploying batteries at scale in households,” Nick Chaset, CEO of Octopus Energy’s U.S. arm, told me.

Octopus already offers a range of retail electricity plans in Texas’ market, which encourages competition among electricity providers by allowing consumers in most parts of the state to choose their provider. That structure made the state a natural first market for the rollout of this new model.

Participating customers will be able to lease a Lunar battery for $45 a month with no upfront cost and the option to purchase the system at the end of the 10-year battery lease. The program is open to ratepayers regardless of whether they have rooftop solar or are existing Octopus Energy customers.

Lunar will provide the battery hardware and, at least initially, the VPP software used to coordinate this statewide network of home batteries. That could evolve, though: Chaset told me that as the partnership grows, “there’s going to be a really good conversation about what is the right software platform.” Octopus’ platform and subsidiary, Kraken Technologies, manages what the company says is “the world’s largest virtual power plant of residential assets.”

Lunar will orchestrate the batteries’ charging schedules so that they draw power when electricity prices are low and discharge back to the grid during periods of peak demand, effectively operating as a cheaper, cleaner alternative to a fossil fuel peaker plant. According to Octopus, customers will see the benefit of those energy arbitrage savings through the fixed price of their three-year contract. Households will also gain resiliency benefits — in the event of a power outage, their battery can keep the lights on and critical appliances running for as long as a day or so.
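To make the arbitrage idea concrete, here is a minimal Python sketch. It is not Lunar's or Octopus' actual dispatch logic. The hourly prices, the 90% round-trip efficiency, and the assumption of one full cycle per day (charging at the single cheapest price and discharging at the single highest) are all hypothetical simplifications. Only the 30-kilowatt-hour capacity comes from the announcement.

```python
def daily_arbitrage_value(prices, capacity_kwh=30.0, efficiency=0.9):
    """Rough value (in dollars) of one charge/discharge cycle.

    prices: hypothetical electricity prices in $/kWh for one day.
    efficiency: assumed round-trip efficiency (energy out / energy in).
    """
    buy_price = min(prices)    # charge when power is cheapest
    sell_price = max(prices)   # discharge at peak price
    energy_out_kwh = capacity_kwh * efficiency
    return energy_out_kwh * sell_price - capacity_kwh * buy_price


# Invented prices: cheap overnight, expensive at the evening peak.
prices = [0.05, 0.04, 0.06, 0.12, 0.30, 0.20]
print(f"${daily_arbitrage_value(prices):.2f}")  # $6.90
```

A real scheduler would also have to respect charging-rate limits, the order of cheap and expensive hours, and how much energy the household wants held in reserve for outages.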

One of the biggest draws, Chaset told me, may just be the plan’s three-year term. In Texas, he said, it’s common for households to sign up for six- to 12-month fixed-rate electricity plans, which exposes them to significant price swings in between renewals. “This is as much as anything about stability,” he told me. “Oftentimes what we hear from Texans is yes, they want to save money. But what they don’t want is to feel like every six months they’re having to go shop again. They want to just know, this is my plan, I’m getting a fair rate.”

This model could thus gain traction simply by appealing to customers’ desire to reduce decision fatigue, while hopefully, at a much larger scale, demonstrating the outsize impact home batteries can have on the grid. “We believe that is going to be changing how [distributed energy resources] are viewed in a big way,” Kunal Girotra, founder and CEO of Lunar Energy and former head of Tesla Energy, told me.

Girotra framed the Octopus partnership as “a blueprint that we hope we can replicate across other markets.” In practice, that would involve the two companies working with utilities in regulated territories to offer this subscription-style home battery to their customers. Octopus can’t serve as the electricity provider in regulated markets, meaning the company would have to add value in other ways. “Because we have so many different regulatory constructs, there’s going to be a lot of different flavors of this,” Chaset told me. Octopus did not specify the role it would play in a regulated market, other than to say, “We’re excited to explore how we can partner with utilities to deploy distributed battery systems.”

Unsurprisingly, the two companies are excited to bring their new retail electricity model to data center developers, as they aim to demonstrate “a world where you can see win-wins for the consumer and for this sector of the economy that we have to build,” Chaset told me. The idea is that data centers would help drive the deployment and adoption of distributed energy resources in communities where they plan to operate, which would effectively expand local grid capacity and potentially accelerate grid interconnection timelines — a binding constraint for hyperscalers in the AI race.

“If you are building a data center in Amarillo, Texas, and you want to make sure that the community around you benefits, come to us. We will deploy 25,000 batteries, put one in every single home in that county or in that community,” Chaset told me. Reaching literally every household is wishful thinking, of course, as participation is voluntary and these programs remain unfamiliar to most consumers. Still, the broader message he’s trying to convey is clear: “Utilities, communities, we’re here to help.”

Current conditions: Illinois far outpaces every other state for tornadoes so far this year, clocking 80, with Mississippi in a distant second with 43 • Western North Carolina’s Blue Ridge Mountains face high wildfire risk during the day and frost at night • A magnitude 7.4 earthquake off the coast of Honshu, Japan, has raised the risk of a tsunami.

The nonprofit that sets the standards against which tens of thousands of companies worldwide measure their greenhouse gas emissions is secretive and ideologically tilted toward industry. That’s the conclusion of a new whistleblower report on which Heatmap’s Emily Pontecorvo got her hands yesterday. The problems at the Greenhouse Gas Protocol “are systemic,” and the nonprofit “seems to be moving further away from its commitment to accountability,” the report said. Danny Cullenward, the economist and lawyer focused on scientific integrity in climate science at the University of Pennsylvania’s Kleinman Center for Energy Policy who authored the report, sits on the Protocol’s Independent Standards Board. Due to a restrictive non-disclosure agreement preventing him from talking about what he has witnessed, he instead relied on publicly available information to illustrate the report. “Not only does the nonprofit community not have a voice on the board,” Cullenward wrote, but the absence of those voices “risks politicizing the work of scientist Board members.” Emily added: “While the Protocol’s official decision-making hierarchy deems scientific integrity as its top priority, in practice, scientists are left to defend the science to the business community.” The report follows a years-long process meant to bolster the group’s scientific credibility. “Critics have long faulted the Protocol for allowing companies to look far better on paper than they do to the atmosphere,” Emily explains. But creating standards that are both scientifically robust and feasible to implement is no easy feat.

The Trump administration’s efforts to paralyze wind and solar permits are once again withering in the cold light of the courtroom. On Tuesday, U.S. District Judge Denise Casper ordered an end to a series of delays on renewable energy permits, delivering a victory to regional trade groups that had argued the administration was violating the Administrative Procedure Act by holding up approvals. Per Heatmap’s Jael Holzman, the ruling “is a potentially fatal blow” to “key methods the Trump administration has used to stymie federal renewable energy permitting.”

In the race to build North America’s first small modular reactor, GE Vernova Hitachi Nuclear Energy is in the lead. Work is already underway on the world’s first deployment of the American-Japanese joint venture’s 300-megawatt boiling water reactor, the BWRX-300. The project at Ontario Power Generation’s Darlington plant is 38% done, and it’s on track to produce electricity by 2030, said Roger Martella, GE Vernova’s head of government affairs and policy. The remark, which Heatmap’s Matthew Zeitlin highlighted on X, came one day before the energy giant reports its latest earnings.

Meanwhile, one of Barack Obama’s early moves as president was to halt construction of the Yucca Mountain nuclear waste repository, effectively blocking any plan by the federal government to deal with radioactive spent fuel. Since then, no state has stepped up as an alternative; either way, federal law stipulates that the site in Nevada must be the United States’ first permanent tomb for nuclear waste. But what if nuclear waste wasn’t treated as waste at all? That was the Trump administration’s new pitch earlier this year when it invited states to submit applications to host so-called nuclear innovation campuses, sites where reactors, fuel enrichers, and waste recyclers can all set up shop. It’s a good sell. At least 28 states have so far submitted applications, according to Exchange Monitor.

Project Vault, the effort the Trump administration set up to create a critical minerals reserve for U.S. manufacturers to weather Chinese trade restrictions on key metals, will soon close its first funding tranche. The program, announced in February, will combine $2 billion in private funding with a $10 billion loan from the Export-Import Bank of the U.S. The project “was not designed to be a stockpile alone,” John Jovanovic, the head of the Ex-Im Bank, told Reuters. “What it was designed to do was actually solve problems that the market faces … What we want to do is let it be dynamic and let it help try to solve a bunch of these problems.”

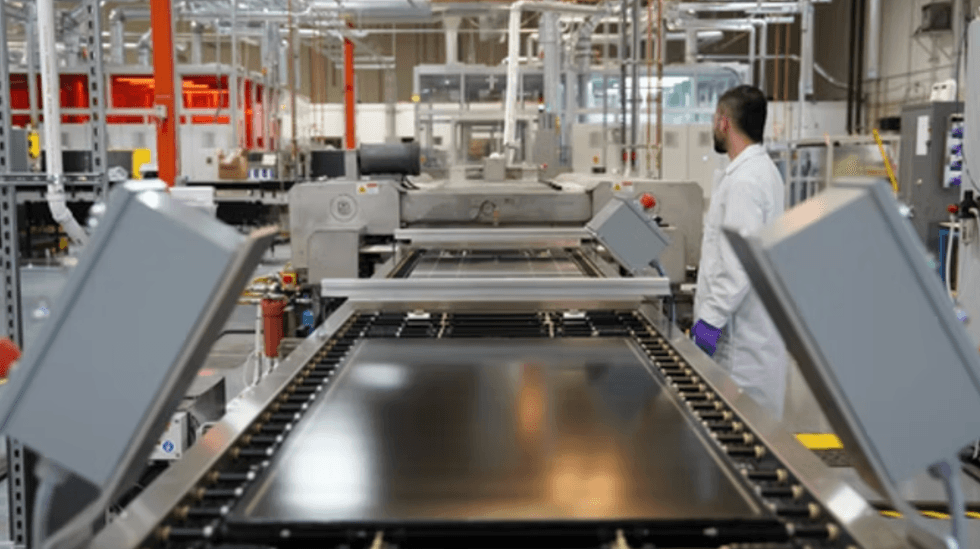

“Perovskites hold a place of honor in the pantheon of much-heralded clean energy breakthroughs that have yet to actually arrive, alongside small modular nuclear reactors and solid-state batteries,” Canary Media reporter Julian Spector wrote yesterday. “In theory, these crystal structures could radically improve solar panels’ capabilities by absorbing wavelengths of light that conventional silicon cells can’t catch. But the stunning advances in R&D specimens have yet to infiltrate the cold, hard world of commercial solar manufacturing.” Until now, at least. On Tuesday, the startup Tandem PV officially opened its new factory in Fremont, California. The company aims to produce large panels of glass treated with a photovoltaic perovskite crystal coating that can increase how efficiently panels convert the sun’s rays into electricity by nearly 40%.

“We’re going to emphasize quality and speed over cost,” Tandem PV CEO Scott Wharton told Matthew last year. “If we do this right, then the theory is, we’ve become the next First Solar — that’s our intention. We want to take back solar leadership from China, which is a bold statement, but I think we’re on the journey.”

Over the next three nights, an eye-popping 723 million birds are expected to be migrating across the U.S. In Michigan alone, at least 5.3 million birds are likely to fly overhead. “Most of these birds will be in flight while we sleep. They are guided by stars, the magnetic field, and sounds,” a meteorology account called Michigan Storm Chasers wrote on X. “You can help these birds migrate by turning off any unnecessary outdoor lighting, especially between the hours of 11 p.m. and 6 a.m.!”

A new report shared exclusively with Heatmap documents failures of transparency and governance at the Greenhouse Gas Protocol.

It is something of a miracle that tens of thousands of companies around the world voluntarily report their greenhouse gas emissions each year. In 2025, more than 22,100 businesses, together worth more than half the global stock market, disclosed this data. Unfortunately, it’s an open secret that many of their calculations are far off the mark.

This is not exactly their fault. To aid in the tedious process of tallying up carbon and to encourage a basic level of uniformity in how it’s done, companies rely on standards created by a nonprofit called the Greenhouse Gas Protocol. The group’s central challenge is ensuring that its standards are both credible and feasible — two qualities often in tension in greenhouse gas accounting. The method that produces the most accurate emissions inventory may not always be feasible, while the method that’s easy to implement may produce wildly inaccurate results.

Critics have long faulted the Protocol for allowing companies to look far better on paper than they do to the atmosphere. In 2022, the group began in earnest to try and fix this, starting with an overhaul of its governance. It created a new Independent Standards Board that would oversee and approve updates to each of its accounting rules, and later convened a series of technical working groups to develop the substance of those updates. One such group was updating the method for how companies should account for their electricity use. Another was focused on supply chain emissions.

The working groups would meet regularly to put together proposals and then submit those proposals to the Independent Standards Board for approval. A separate steering committee would then review the board’s decision to ensure that the Protocol’s overall principles had been followed throughout the process and make the final call.

The new structure was meant to “further bolster the credibility and integrity of these standards,” the Protocol wrote. The overhaul was especially timely as governments around the world, including those of the European Union and the state of California, were taking steps to adopt the Protocol’s standards in their own mandatory climate disclosure rules.

But what started as a laudable effort to improve transparency and accountability has turned rancid, some of the participants told me. Scientists are being pitted against industry representatives. Proposals, voting records, and other key documents are being kept from the public eye. Decisions made behind closed doors are going undocumented and undisclosed, kept secret even from the working group members who have devoted significant unpaid time to the cause of developing stronger standards.

These issues are broadly illustrated by the experience of Kate Dooley, a member of the GHG Protocol’s technical working group on forest carbon accounting. Dooley is a political scientist and lecturer at the University of Melbourne’s School of Geography, Earth and Atmospheric Sciences who has worked on issues related to forest carbon accounting for roughly two decades. She joined the 17-person working group in December 2024; the group’s assignment was to resolve a contentious debate over how companies that own or control forests or use forestry products in their supply chains should account for carbon emissions related to their harvesting, land management, and wood product purchases. The group included academics like Dooley, industry representatives from companies such as IKEA, and experts from non-profits including the Natural Resources Defense Council and the American Forest Foundation.

After six months of meetings, however, the members could not reach a consensus. One of the key reasons forest carbon accounting is difficult is that forests can both emit carbon and remove it from the atmosphere. Determining what proportion of those removals are a result of human activity versus what would happen naturally gets complicated quickly. The stakes were high, because even though the GHG Protocol standards are portrayed as neutral accounting exercises, small decisions about how this accounting is performed can create big shifts in incentives for how companies operate.

The forestry group considered two main approaches. One is called the “managed land proxy,” or MLP, and it is the method countries use to report their emissions to the United Nations. This method would allow companies to include all of the carbon that’s being sequestered on their lands in their greenhouse gas inventory. A timber company that cuts down trees, for instance, would count both the emissions released from logging and the carbon sequestered by the remaining tree stands, then calculate a net result.

The major criticism of this approach is that it’s easy to game and leads to unintuitive results, where forest product companies come out looking like they are removing far more carbon than they are releasing. The method would also enable companies to use the average emissions and removals of an entire region in their calculations, rather than the specific logging and forest management practices of their supply source. Another risk is that companies could simply buy up additional forest land to reduce their emissions on paper while changing nothing about their business practices.

Proponents of this method put forward what they framed as a compromise, called “MLP+,” which attempted to put some guardrails around these issues. Regardless, the scientists in the group argued that it was scientifically incorrect to attribute all forest carbon sequestration that happens within a given tract of land to a company when that carbon removal may be the result of unrelated factors, such as elevated CO2 in the air from climate change or the regrowth of trees that a previous owner had cut down.

The alternative method that the scientists, including Dooley, put forward is called “activities-based accounting.” Rather than take credit for all forest growth, this method would require landowners to account for the growth that would have occurred without human interference and subtract it from their estimate of carbon removals. This method would be more difficult and require further work to fine-tune. It would also have the effect of making corporate forest emissions look much higher on paper.
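The difference between the two approaches comes down to which removals a company is allowed to subtract from its emissions. The sketch below is a simplified illustration, not the Protocol’s actual methodology: the class, the function names, and all of the numbers are hypothetical, and it assumes a sign convention in which a negative net figure means the company reports itself as a net remover of carbon.

```python
from dataclasses import dataclass


@dataclass
class ForestInventory:
    """Hypothetical annual figures for one landowner, in tonnes of CO2."""
    harvest_emissions: float   # released by logging
    total_removals: float      # sequestered by all growth on the land
    baseline_removals: float   # growth that would have occurred without human interference


def managed_land_proxy(inv: ForestInventory) -> float:
    # MLP-style netting: every tonne sequestered on the land counts as the company's.
    return inv.harvest_emissions - inv.total_removals


def activities_based(inv: ForestInventory) -> float:
    # Activities-based netting: subtract the baseline growth from the
    # removals estimate first, then net what is left against emissions.
    attributable_removals = inv.total_removals - inv.baseline_removals
    return inv.harvest_emissions - attributable_removals


# Illustrative numbers only; they are not drawn from any real company or pilot.
example = ForestInventory(
    harvest_emissions=100.0,
    total_removals=150.0,
    baseline_removals=120.0,
)

mlp_net = managed_land_proxy(example)
activities_net = activities_based(example)

print(f"Managed land proxy net: {mlp_net} tCO2")        # -50.0
print(f"Activities-based net:   {activities_net} tCO2")  # 70.0
```

With these made-up inputs, the same forest shows up as a net remover of 50 tonnes under the first method and a net emitter of 70 tonnes under the second, which is the kind of gap the working group was arguing over. In practice the hard part is estimating the baseline, which this sketch simply takes as given.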

In a final vote between the two proposals, the members split 8 to 7 in favor of MLP+, with two sitting out the vote. The group delivered both proposals to the Independent Standards Board for consideration last spring, but the board could not reach a consensus, either. Ultimately, the organization decided to finalize the land sector standard in January 2026 without any guidance for forest carbon accounting, advising companies to go with whatever method they wanted as long as they disclosed how they did it. It noted that it would soon issue a request for information to gather more stakeholder input on the issue.

By the end of the working group process, the internal dynamics had grown combative. Dooley and other scientists in the group had presented certain scientific papers to support their rebuke of MLP, but another member, Nathan Truitt, the executive vice president of climate funding at the American Forest Foundation, began arguing that the same papers made the opposite point.

“It was this weird, Kafkaesque development,” Dooley said. She responded to the entire group with a long email detailing the last 20 years of debate on the subject, she told me. “I think in that email I accused [Truitt] of industry bias, because there was no other explanation for what he was doing,” she said.

The American Forest Foundation works with private landowners to support sustainable forest product markets. Truitt, for his part, characterized the atmosphere in the working group as toxic. He told me that the scientists did not adequately explain to him why they thought he was interpreting the papers incorrectly. He noted that the foundation is a mission-based nonprofit, and less than 5% of its revenue comes from the forest products industry, but the organization does believe in supporting healthy forest markets. “If landowners can’t generate revenue from appropriate forest management, there won’t be forest there very long,” he said.

But Dooley’s concerns were bigger than just interpersonal challenges. She didn’t understand why none of the explanatory memos or official proposals produced by the working group had been published to the Protocol’s website, when similar documents produced by the other working groups had been made public. (Truitt also was not aware of this until I reached out to him, and was surprised to learn it.)

Initially, the scientists’ full memo on their approach was not even shown to the Independent Standards Board; Dooley told me she had to write to the head of the board and ask that it be shared. It was also odd to her that there was no follow-up from the Independent Standards Board after the proposals had been submitted.

Perhaps one of the strangest elements of the process was that the Greenhouse Gas Protocol had conducted a real-world pilot program of MLP prior to the formation of the working group. There was public documentation of the pilot’s existence, but the outcomes were not published, nor were they shared with the group. Dooley said that someone who had viewed the results told her they decidedly proved the problems with MLP. Her understanding was that almost all of the forest product companies that participated reported huge amounts of net carbon removals, making them appear to have a beneficial impact on the climate, contributing nothing to global emissions. “To me, it’s inexplicable why that pilot study wasn’t shown,” she said.

Months later, in January 2026, Dooley received a document that reframed her experience. It was a formal complaint made by Truitt the previous April that challenged the scientists’ expertise and impartiality, she told me. She also learned that following the complaint, the Independent Standards Board solicited opinions from additional outside scientists on the two proposals. She was shocked that she had been kept in the dark as this was going on.

Dooley emailed the head of the board and other leaders at the Protocol to ask why she and the other scientists weren’t told about the complaint or given a chance to respond. “We write to express concern that this complaint was not initially communicated to those concerned, and to request clarification regarding its handling and any subsequent developments,” the email said. She also inquired about the unpublished proposals and lack of follow-up from the board. She sent the email on January 23. She has yet to receive a response, she said.

“It strikes me as a very bizarre process,” she told me. “It’s unacceptable.”

When I spoke to Truitt about the complaint, he told me he did not mean to suggest that Dooley and the other scientists’ perspective was invalid. On the contrary, Truitt was concerned that there weren’t more experts in the working group, or at least more of the right experts. In 2024, the Intergovernmental Panel on Climate Change had hosted a three-day meeting in Italy specifically about the issues with forest carbon accounting, albeit at the national level. Truitt read the final report that came out of that meeting and didn’t understand why none of the scientists involved were on the Protocol’s technical working group.

Initially he wanted to share this concern with the working group directly, he said, but third-party consultants hired to facilitate the group’s progress advised him to bring it to the Protocol’s staff. He did that, and again asked to share it with his colleagues so that it would at least be in the group’s records, but was instructed not to, he said.

Truitt told me his complaint urged the Protocol to invite some of the experts from the IPCC meeting to join the working group. He said that the head of the Independent Standards Board later told him there was not enough time, but that the board would consult with some of those experts once it had the proposals.

The GHG Protocol did not answer detailed questions I sent them for this story. “We are in the process of addressing, through an independent review, a few concerns relating to work within one of our Technical Working Groups,” a spokesperson told me in an email. “As this is an internal ongoing matter, we cannot comment further but we are committed to addressing any findings appropriately.”

The spokesperson also emphasized that robust debate was central to the standard-setting process, and that the organization is “committed to ensuring that all discussions are conducted in a respectful, transparent and well-facilitated manner, with clear governance structures in place to support balanced and evidence-based outcomes. We value all inputs and feedback on how to improve our multistakeholder processes.”

While Truitt and Dooley vehemently disagree on forest carbon accounting and what went wrong in the working group, they are on the same page about one thing — the Protocol has issues with transparency. A new paper published Wednesday argues that the issues Dooley described are systemic, and warns that the Protocol seems to be moving further away from its commitment to accountability.

The paper’s author is Danny Cullenward, an economist and lawyer focused on the scientific integrity of climate policy, who is currently a senior fellow at the University of Pennsylvania’s Kleinman Center for Energy Policy. Cullenward also sits on the Protocol’s Independent Standards Board and is restricted by a non-disclosure agreement from describing what he has witnessed in the role. His paper draws on publicly available information in an effort not to violate his NDA. (Cullenward has also contributed to Heatmap.)

Part of what drove Cullenward to write the piece were concerns outlined in a complaint he and another board member filed jointly to the Protocol. While Cullenward could not discuss the substance of the complaint, his paper notes that it alleges “violations of the Board’s terms of reference,” and that the violations “undermined the scientific integrity of the Board’s deliberations” over the land sector standard.

“I do not have any confidence that we are going to end up in a place where there is public disclosure about what occurred,” he told me, “and that is concerning.”

His paper critiques the Protocol’s formal complaints process more generally, noting that it does not describe how complaints should be adjudicated. Because the Independent Standards Board is bound by an NDA, filing a complaint is the only means by which members can flag malfeasance. If these complaints are then adjudicated in private, there is no “external mechanism to ensure that the Protocol’s overall governance rules are being followed in practice,” Cullenward writes.

He further highlights two overarching failings at the Protocol. The first is that the group’s two key decision-making bodies, the Independent Standards Board and the Steering Committee, are imbalanced. The former has members from industry, academia, and government, but no one from environmental non-governmental organizations. More than half the members on the latter are from the business and financial world, and the Steering Committee does not have a single member from the research community.

Not only does the nonprofit community not have a voice on the board, Cullenward writes, but the absence of those voices “risks politicizing the work of scientist Board members.” While the Protocol’s official decision-making hierarchy names scientific integrity as its top priority, in practice, scientists are left to defend the science to the business community. If and when contentious scientific issues do arise and the board’s decisions are elevated to the Steering Committee, there is no one on that committee with the training to evaluate the disagreement.

Cullenward also criticizes the Protocol for not publishing records from the Independent Standards Board’s meetings, despite the fact that the board’s governance documents explicitly require the publication of meeting minutes. The board’s votes are cast by secret ballot, the paper says, so even members cannot see how their colleagues voted. Cullenward calls for this rule to be lifted, for votes to be public, and for board members not to be restricted by NDAs. “A well-functioning organization that follows its own rules does not need to restrict Board members’ legal ability to speak about their experiences,” he writes.

Lastly, Cullenward warns that the Protocol seems to be heading down a path of increasing opacity. Last fall, the group announced that it was planning to harmonize its standards with the International Organization for Standardization, or ISO, a separate, much larger group that writes voluntary standards for all kinds of industries. (To date it has written more than 26,000 standards, applying to everything from screw threads and paper sizes to food safety and electrical grids.) The GHG Protocol published new rules governing this joint work, which, unlike the technical working group rules, do not require that members’ names be made public or that stakeholder representation be balanced.

One of these joint working groups has already been convened, and while the GHG Protocol published the names of the members it nominated to the group on its website, the ISO-nominated members are not listed, and the total group size is unclear. It’s also unclear what this harmonization process will look like, and whether it will involve another overhaul of all of the standards the Protocol has spent the past several years revising.

I reached out to a few other carbon accounting experts for their thoughts on Cullenward’s paper. Michael Gillenwater, the executive director of the Greenhouse Gas Institute, who is in one of the other technical working groups, told me the concerns raised about bias go back to the origins of corporate climate accounting. The focus has long been on “what companies want to report and claim versus what is technically fit for the evolving range of purposes that the GHG Protocol has been and is newly being used for,” he said.

Matthew Brander, a professor of carbon accounting at the University of Edinburgh who also serves on a technical working group, told me he agrees that commercial interests are overrepresented among the working groups — not just in terms of numbers, but also in the amount of time and resources they can spend to engage and lobby for their preferred outcomes. Despite the Protocol’s claim of being “science-led,” he told me, scientific research is often ignored. Brander was also frustrated with the complaints procedure, telling me that a complaint he submitted did not get a substantive response.

“I don’t think there is ever a perfect way of managing/governing standard-setting processes,” he said in an email, “and commercial interests will very often hold sway.”

While Cullenward told me he thought improving transparency and representation would help alleviate many of his concerns, Dooley was less sure.

“The idea that science speaks as an independent, authoritative voice is a myth,” she said. “It’s actually what my research is about. Lots of science is politicized and can be used to support any side of the debate generally. But the way the process was set up very much leant into that and allowed that to happen, rather than mitigated against that.”